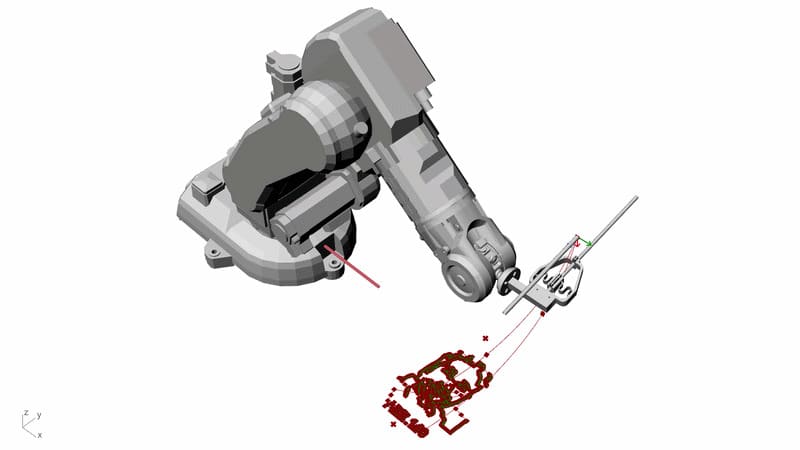

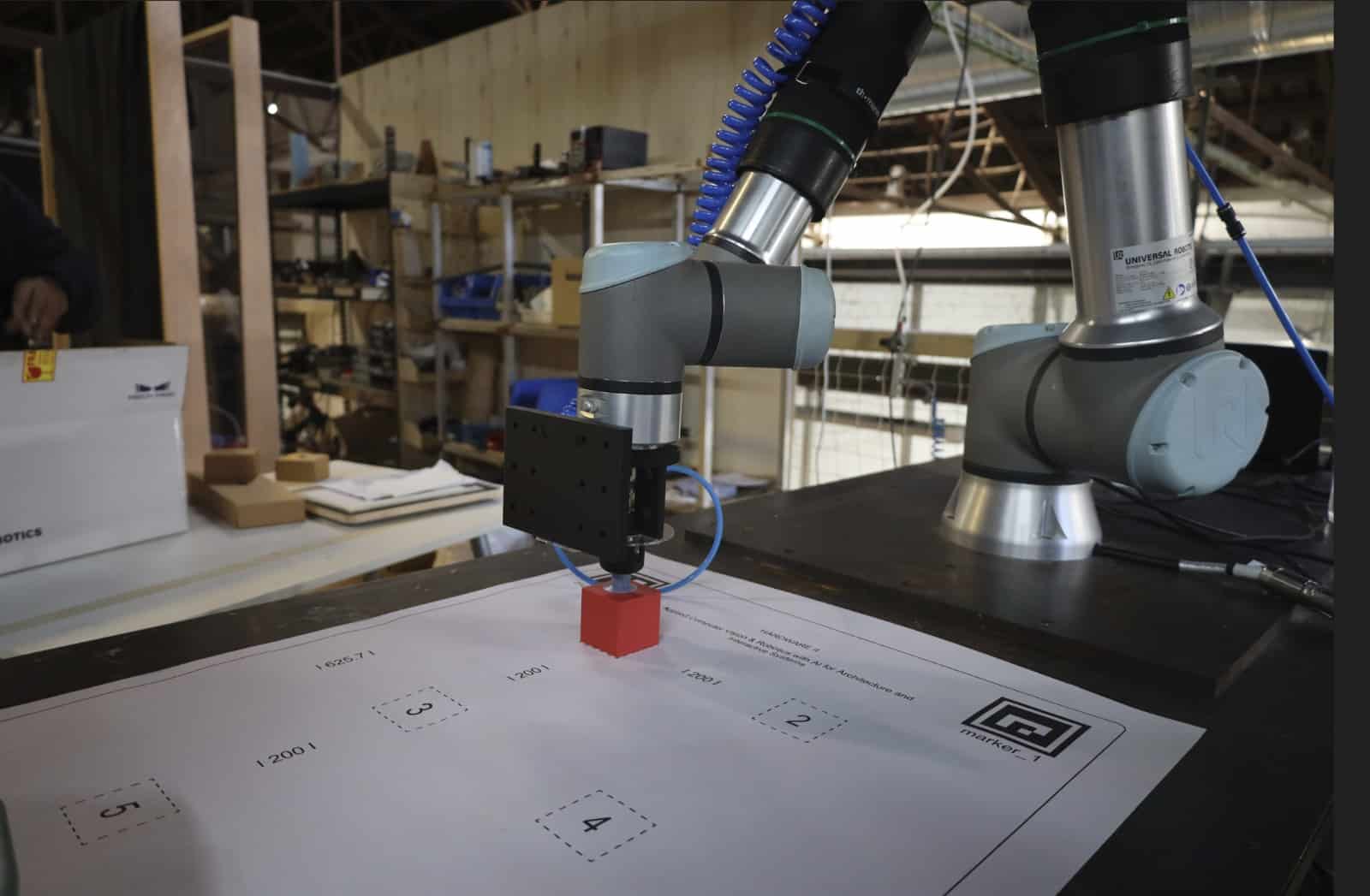

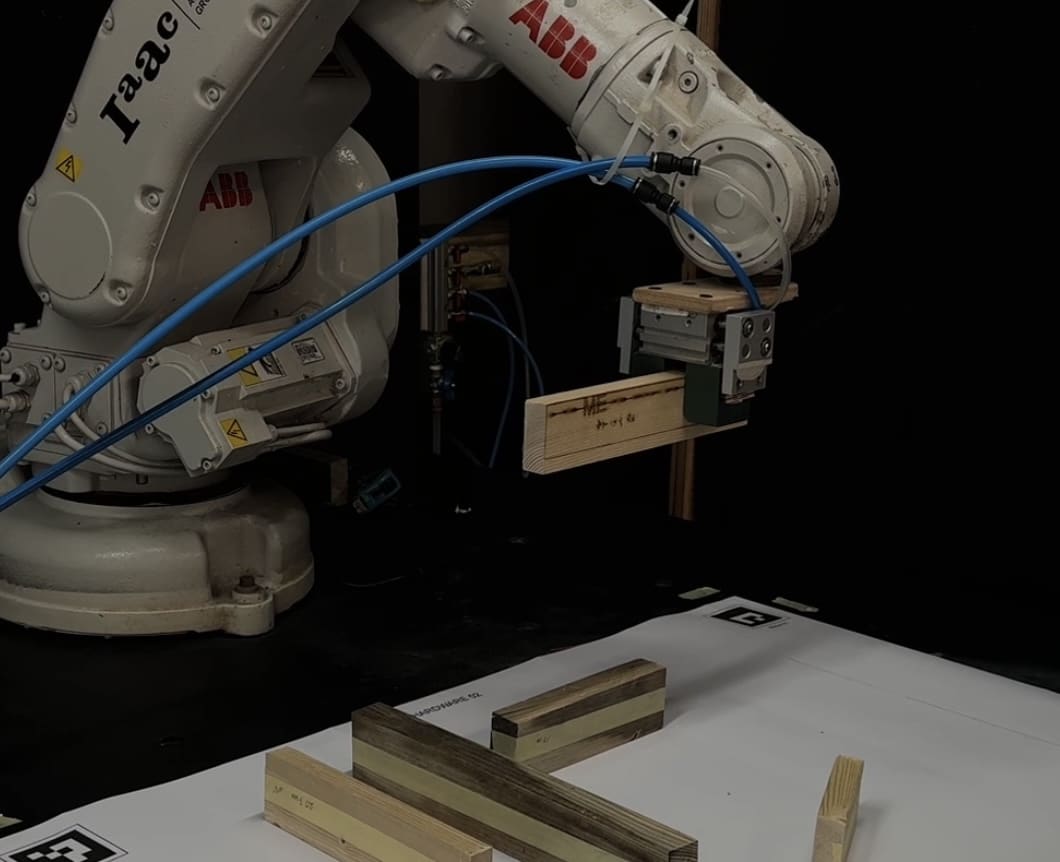

HARDWARE II is an advanced, practice-oriented course focused on applying real-time computer vision and artificial intelligence to architectural, robotic, and interactive systems. The course guides students through the full AI vision pipeline, from ethical data collection and dataset design to model training, optimization, and deployment in physical environments. Using tools such as Roboflow, YOLO, OpenCV, ONNX, and ROS2, students develop autonomous systems capable of perceiving, analyzing, and responding to spatial conditions in real time.

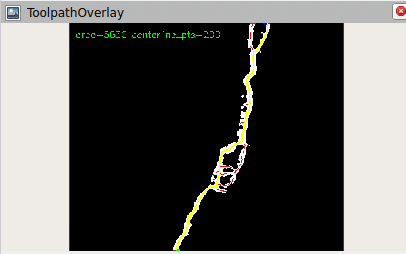

Emphasis is placed on transforming visual information into quantitative spatial intelligence, including distance, speed, density, and occupancy metrics relevant to architecture and robotics. Students critically engage with issues of bias, privacy, and responsibility in AI-driven visual systems, particularly within public and built environments. The course culminates in an independent project where students design and implement a fully integrated AI system connecting perception, decision-making, and physical or interactive actuation.

Learning Objectives

By the end of this course, students will be able to:

- Design and manage high-quality, ethically responsible datasets for computer vision applications

- Train and evaluate real-time object detection models using YOLO

- Implement OpenCV-based spatial analysis, tracking, and geometric measurement

- Convert visual detections into real-world quantitative metrics such as distance, speed, density, and occupancy

- Deploy optimized AI models using ONNX for real-time inference

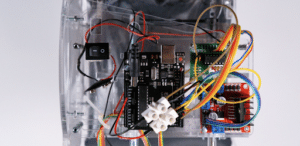

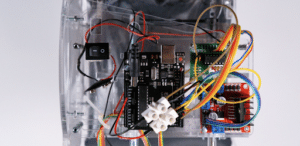

- Integrate AI perception systems with ROS2, robots, Arduino, or interactive architectural interfaces

- Analyze system performance, limitations, and failure cases

- Design and implement an end-to-end autonomous AI system for architectural or robotic applications
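
As a rough illustration of the objective of converting visual detections into real-world metrics, the sketch below turns pixel-space bounding boxes into distance, speed, and density values. It is a minimal example, not course material: it assumes a fixed overhead camera with a uniform scale, and the `METERS_PER_PIXEL` value, the function names, and the box coordinates are all hypothetical. A real deployment would typically need camera calibration or a homography rather than a single scale factor.

```python
import math

# Hypothetical calibration (assumption): an overhead camera where
# one pixel covers 0.02 m of floor in both directions.
METERS_PER_PIXEL = 0.02


def box_center(box):
    """Return the (x, y) center of an (x1, y1, x2, y2) bounding box in pixels."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2.0, (y1 + y2) / 2.0)


def ground_distance_m(box_a, box_b, meters_per_pixel=METERS_PER_PIXEL):
    """Approximate floor distance in meters between two detections."""
    ax, ay = box_center(box_a)
    bx, by = box_center(box_b)
    return math.hypot(bx - ax, by - ay) * meters_per_pixel


def speed_m_per_s(box_prev, box_curr, dt_s, meters_per_pixel=METERS_PER_PIXEL):
    """Approximate speed of one tracked object between two frames."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    return ground_distance_m(box_prev, box_curr, meters_per_pixel) / dt_s


def density_per_m2(boxes, frame_w_px, frame_h_px, meters_per_pixel=METERS_PER_PIXEL):
    """Detections per square meter over the whole visible floor area."""
    area_m2 = (frame_w_px * meters_per_pixel) * (frame_h_px * meters_per_pixel)
    return len(boxes) / area_m2


# Two made-up detections in a 1000 x 500 pixel frame.
person_a = (100, 100, 140, 180)
person_b = (400, 500, 440, 580)

distance = ground_distance_m(person_a, person_b)
speed = speed_m_per_s(person_a, person_b, dt_s=2.0)
density = density_per_m2([person_a, person_b], frame_w_px=1000, frame_h_px=500)
print(f"distance: {distance:.2f} m, speed: {speed:.2f} m/s, density: {density:.3f} per m^2")
```

In practice the boxes would come from a detector such as YOLO and the frame interval from the video source; only the conversion step is shown here.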