Solutions for Rapid Data Acquisition in Disaster Scenarios

This project explores a robotic perception pipeline designed to support post-disaster assessment and early response scenarios. The core objective is to detect and map cracks in damaged buildings using vision-based data, assisting rescue teams by providing spatial information about potentially unsafe areas.

Rather than aiming for full structural validation, the project focuses on rapid damage visualization as a first step toward informed decision-making in hazardous environments where human access is limited.

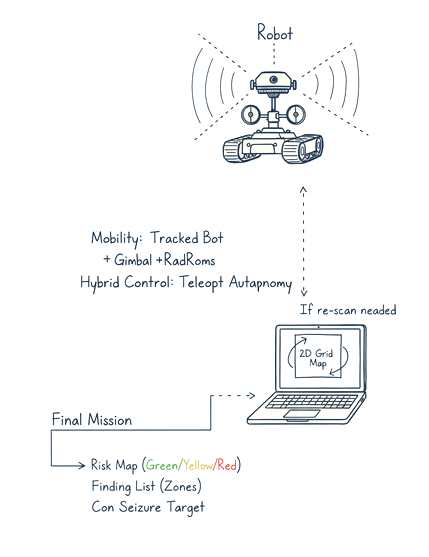

Concept of Operations

The proposed workflow follows a semi-autonomous inspection logic:

- A mobile robotic platform enters a damaged structure.

- Visual data is captured using onboard cameras.

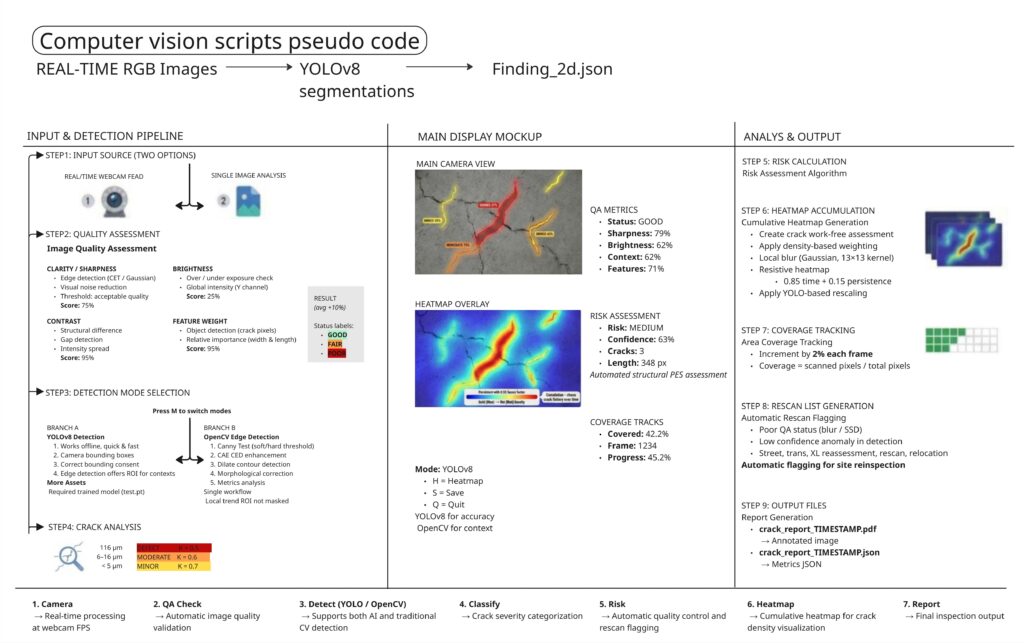

- The data is processed to detect visible cracks on concrete surfaces.

- Crack locations are mapped in 3D space and visualized as a damage map.

- The robot can navigate toward detected cracks and return to a safe position.

The system is conceived as supportive, not decisive: it provides information to human operators rather than making safety judgments autonomously.
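As a rough illustration of the damage map step, the sketch below bins crack points into a coarse voxel grid so that operators can see where detections concentrate. It is a minimal sketch, not the project's implementation: the function name `damage_map`, the 0.5-unit voxel size, and the sample coordinates are all hypothetical.

```python
import math
from collections import Counter
from typing import Iterable, Tuple

Point = Tuple[float, float, float]


def damage_map(crack_points: Iterable[Point], voxel_size: float) -> Counter:
    """Count crack points per voxel; keys are integer (i, j, k) voxel indices."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    return Counter(
        tuple(math.floor(coord / voxel_size) for coord in point)
        for point in crack_points
    )


# Hypothetical crack points (illustrative values only).
crack_points = [(0.1, 0.2, 0.0), (0.3, 0.4, 0.1), (1.2, 0.1, 0.0)]
grid = damage_map(crack_points, voxel_size=0.5)

# The densest voxel is a candidate location to flag for the operator.
densest_voxel, hits = grid.most_common(1)[0]
```

Counting points per voxel keeps the output purely informational: it shows where cracks were detected without claiming anything about structural safety.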

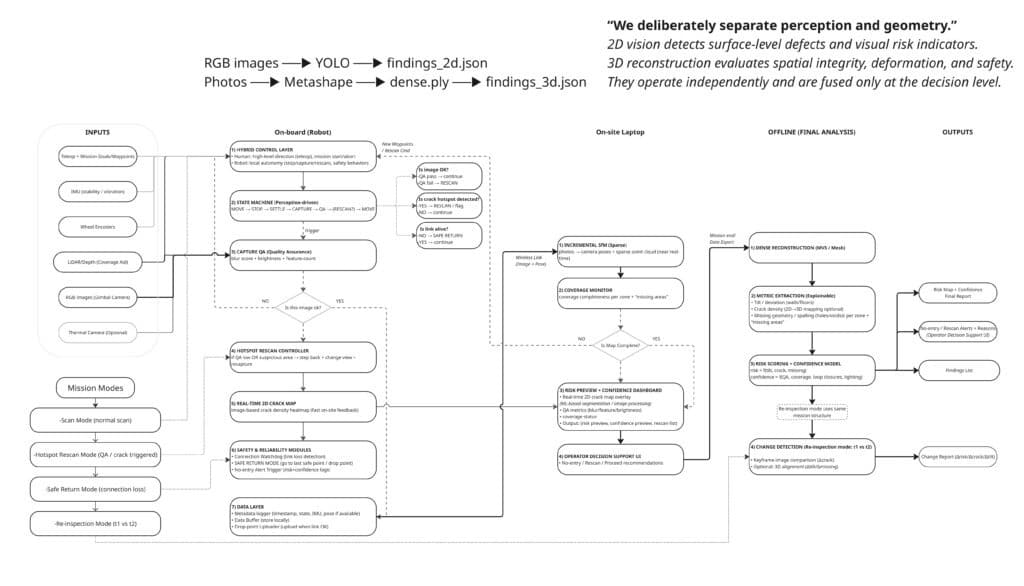

Data Acquisition & Processing Pipeline

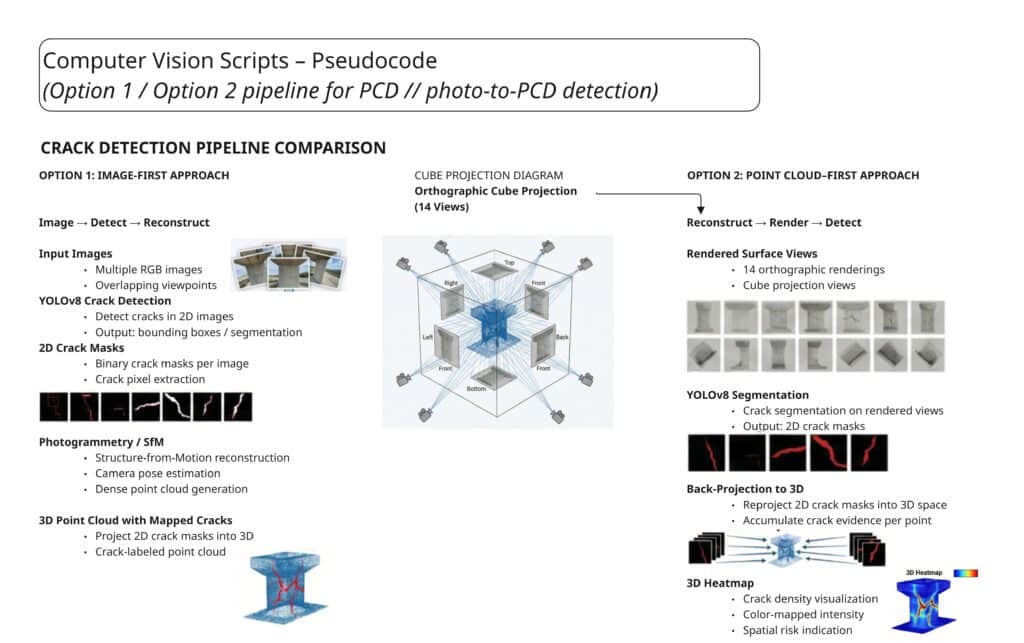

The project primarily uses photogrammetry-derived point clouds rather than LiDAR, due to tool availability and time constraints.

The processing pipeline includes:

- Converting point clouds into textured meshes

- Projecting textures onto a cube to obtain six orthogonal views

- Running a trained YOLOv8 crack detection model on the textured views

- Applying ICP-based reprojection to map detections back onto 3D geometry

- Transferring the detected cracks onto the original point cloud

This approach proved more reliable than directly processing raw point clouds, even though it introduced additional processing steps.
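The cube projection and the mapping of detections back to 3D can be sketched in a simplified form. The example below assumes an orthographic projection of raw points with a per-pixel depth test, and it recovers 3D points by pixel lookup. It does not reproduce the textured meshes or the ICP step used in the project, and the names `FACES`, `project_to_face`, and `backproject_box` are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]
Pixel = Tuple[int, int]

# Face name -> (depth axis, viewing sign, the two in-plane axes).
FACES = {
    "+x": (0, 1, (1, 2)), "-x": (0, -1, (1, 2)),
    "+y": (1, 1, (0, 2)), "-y": (1, -1, (0, 2)),
    "+z": (2, 1, (0, 1)), "-z": (2, -1, (0, 1)),
}


def project_to_face(points: Sequence[Point], face: str,
                    resolution: int) -> Dict[Pixel, int]:
    """Orthographic view of one cube face: pixel -> index of the visible point."""
    depth_axis, sign, (a, b) = FACES[face]
    lo = [min(p[i] for p in points) for i in range(3)]
    hi = [max(p[i] for p in points) for i in range(3)]
    # One shared extent keeps the six views at the same scale.
    extent = max(hi[i] - lo[i] for i in range(3)) or 1.0
    visible: Dict[Pixel, int] = {}
    for idx, p in enumerate(points):
        u = min(int((p[a] - lo[a]) / extent * resolution), resolution - 1)
        v = min(int((p[b] - lo[b]) / extent * resolution), resolution - 1)
        best = visible.get((u, v))
        # Keep the point closest to the camera looking at this face.
        if best is None or sign * p[depth_axis] > sign * points[best][depth_axis]:
            visible[(u, v)] = idx
    return visible


def backproject_box(visible: Dict[Pixel, int],
                    box: Tuple[int, int, int, int]) -> List[int]:
    """Indices of the 3D points whose pixels fall inside a 2D detection box."""
    u0, v0, u1, v1 = box
    return sorted(idx for (u, v), idx in visible.items()
                  if u0 <= u <= u1 and v0 <= v <= v1)


# Hypothetical toy cloud: four points on the floor, two above it.
points = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0), (0, 0, 1), (1, 1, 1)]
top_view = project_to_face(points, "+z", resolution=2)
bottom_view = project_to_face(points, "-z", resolution=2)

# A detection covering pixel (0, 0) of the top view maps back to point 4.
hit_indices = backproject_box(top_view, (0, 0, 0, 0))
```

Because each pixel stores the index of the point it came from, a 2D detection box can be traced back to 3D without re-estimating geometry. The real pipeline works on textured surfaces, where that correspondence has to be recovered through alignment.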

Crack Detection Strategy

While clustering methods were initially considered due to their speed, they offered limited control over detection accuracy. Instead, trained deep-learning models were selected because they allow:

- Adjustable confidence thresholds

- More precise control over false positives

- Better alignment with the visual nature of cracks

This decision reflects a practical trade-off between computational efficiency and detection reliability.
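The effect of an adjustable confidence threshold can be shown with a small filter over detection results. This is a sketch under stated assumptions: the `Detection` class, the `filter_detections` helper, and the confidence values are hypothetical and do not come from the project's trained model. Ultralytics' YOLOv8 inference exposes a comparable `conf` setting, so the same trade-off can also be applied at prediction time.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Detection:
    box: Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels
    confidence: float               # model score in [0, 1]


def filter_detections(detections: List[Detection],
                      threshold: float) -> List[Detection]:
    """Keep only detections scoring at or above the threshold."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must lie in [0, 1]")
    return [d for d in detections if d.confidence >= threshold]


# Hypothetical detections (illustrative scores only).
detections = [
    Detection(box=(10, 10, 80, 20), confidence=0.91),
    Detection(box=(30, 50, 60, 55), confidence=0.55),
    Detection(box=(5, 90, 15, 95), confidence=0.32),
]

# Raising the threshold trades missed cracks for fewer false positives.
kept_per_threshold = {
    t: len(filter_detections(detections, t)) for t in (0.25, 0.5, 0.8)
}
```

A low threshold suits a first pass where missing a crack is costlier than a false alarm. A higher one suits a cleaner damage map for presentation.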

Key Constraints & Assumptions

Several constraints shaped the project outcome:

- Time limitation: The project was developed within ~40 hours.

- Offline processing: Real-time analysis was not implemented due to computational limits.

- Material assumptions: Cracks were assumed to be in concrete surfaces.

- No structural classification: The system does not distinguish between cosmetic and structural cracks.

- Lighting conditions: Variable lighting was not explicitly modeled, affecting detection robustness.

These constraints were acknowledged as part of the MVP (Minimum Viable Prototype) approach.

Feedback & Critical Reflection

Audience and instructor feedback highlighted several important points:

- First-response systems should prioritize real-time analysis rather than post-processing.

- Crack detection alone does not equate to structural safety assessment.

- Differentiating cosmetic vs. structural cracks remains a major unresolved challenge.

- SLAM data could have been leveraged to estimate crack dimensions and spatial context.

- Sensor fusion (thermal, subsurface scanning, LiDAR) could significantly improve reliability.

This feedback revealed that terminology and framing are as important as technical implementation when presenting emergency-response technologies.

Future Development

Based on feedback and reflection, future iterations could include:

- Integration of SLAM-based spatial scaling

- Use of LiDAR for real-time mapping

- Sensor fusion (thermal imaging, subsurface scanning)

- Material-aware crack classification

- Onboard edge computing for live analysis

- Explicit modeling of lighting conditions

These steps would shift the project closer to a true real-time first-response system.
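Spatial scaling matters because a photogrammetric reconstruction without a metric reference is only defined up to an unknown scale. The sketch below assumes one known real-world distance between two reconstructed points, which a metric SLAM system or a measured reference object could supply, and uses it to express a crack's length in metres. The helper names and all numbers are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def scale_factor(p_a: Point, p_b: Point, true_distance_m: float) -> float:
    """Metres per model unit, from one reference distance known in both frames."""
    model_distance = math.dist(p_a, p_b)
    if model_distance == 0:
        raise ValueError("reference points must be distinct")
    return true_distance_m / model_distance


def crack_length_m(polyline: Sequence[Point], scale: float) -> float:
    """Length of a crack traced as a 3D polyline, converted to metres."""
    model_length = sum(math.dist(a, b) for a, b in zip(polyline, polyline[1:]))
    return scale * model_length


# Hypothetical reference: two model points 2.0 units apart, measured as 1.0 m.
scale = scale_factor((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), true_distance_m=1.0)

# Hypothetical crack trace: segments of 5 and 2 model units.
crack = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (3.0, 4.0, 2.0)]
length_m = crack_length_m(crack, scale)  # 0.5 m/unit * 7 units = 3.5 m
```

The accuracy of the result depends entirely on the reference distance, so a real system would need to validate that measurement before reporting crack dimensions.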

Conclusion

This project demonstrates a feasible workflow for vision-based crack detection using accessible tools and limited resources. While not intended as a final solution, it serves as a functional proof-of-concept that highlights both the technical possibilities and critical limitations of autonomous damage assessment in disaster scenarios.

The project embraces the reality of rapid prototyping: working with available tools, making assumptions explicit, and iterating based on feedback.