Circular Intelligence for Robotic Classification & Upcycling of Industrial Timber

How can a robotic system identify, measure, and sort reusable construction materials based on predefined constraints?

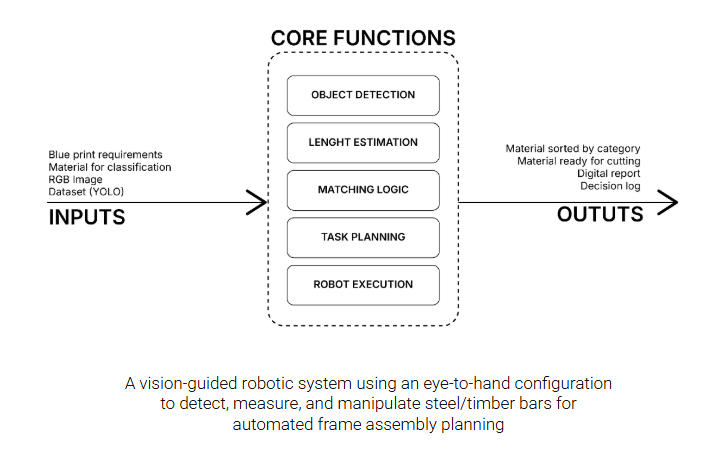

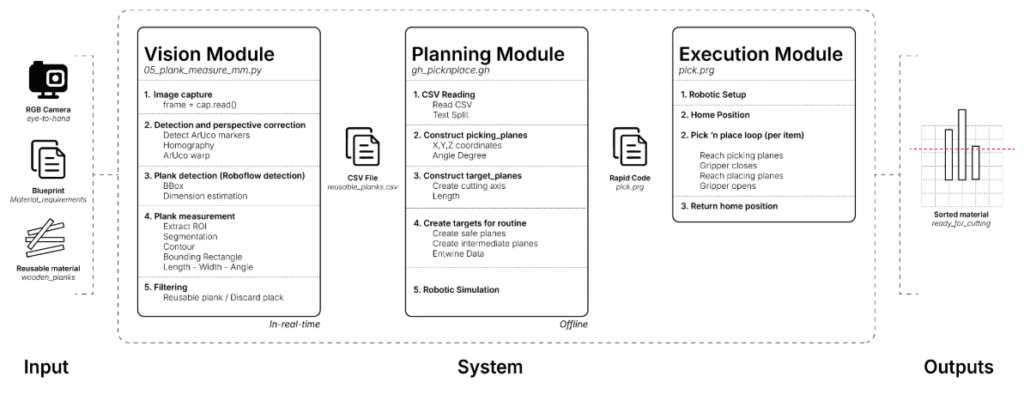

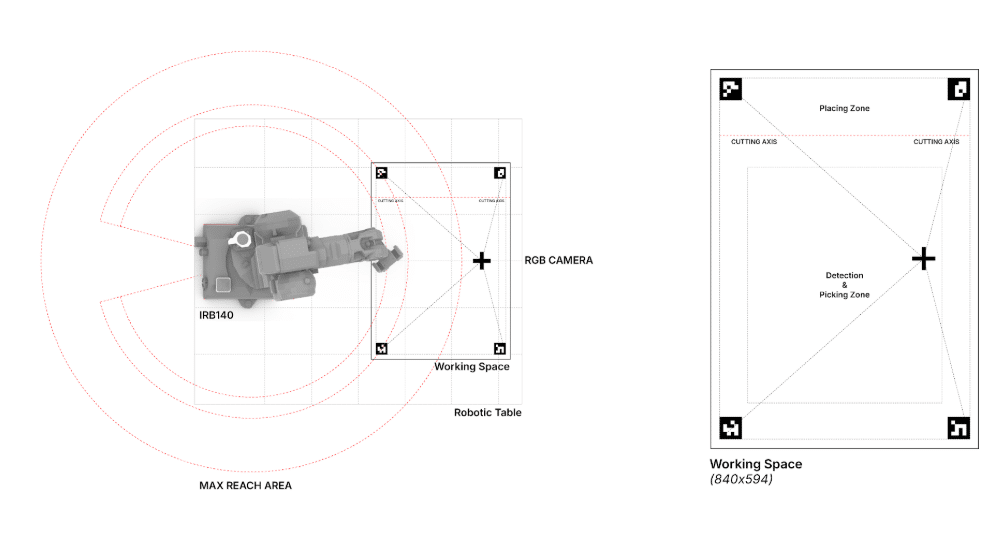

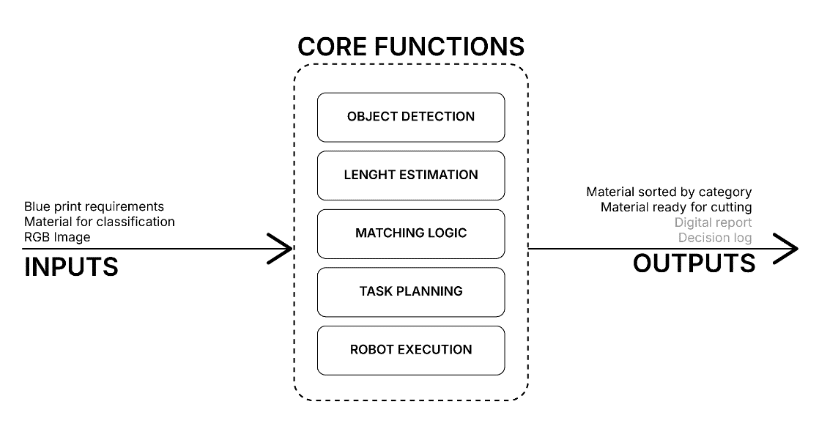

This project proposes a vision-guided robotic system for supporting the reuse of construction materials through automated detection, analysis, and manipulation. A fixed RGB camera in an eye-to-hand configuration observes a workspace containing discarded wooden bars, identifying individual pieces through a custom-trained computer vision model.

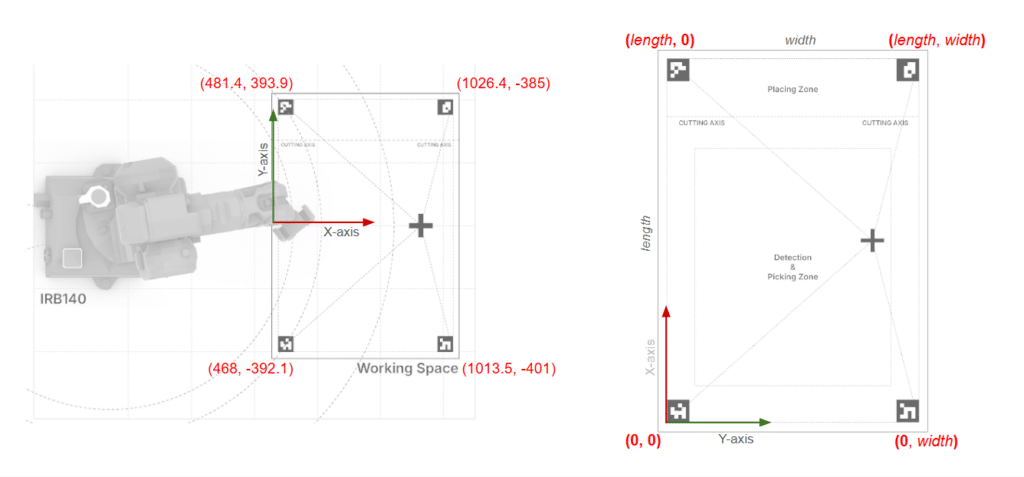

Each detected element is analyzed to estimate its position, orientation, and length. The user enters the required measurements, and the algorithm returns the coordinates of the piece that satisfies the specified criteria. Data acquisition runs entirely offline: the camera captures the workspace, and the results are then transferred to Grasshopper, which handles the pick-and-place logic for the selected elements.
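The selection step can be pictured as a simple filter over the detected pieces. The following is a minimal sketch rather than the project's code: the `Piece` fields, the `select_piece` helper, the sample values, and the rule of preferring the shortest piece that is still long enough (to keep the offcut small) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Piece:
    """One detected wooden bar (hypothetical field names)."""
    piece_id: int
    x_mm: float
    y_mm: float
    angle_deg: float
    length_mm: float


def select_piece(pieces: List[Piece], required_mm: float) -> Optional[Piece]:
    """Return the shortest piece that is at least required_mm long, or None."""
    candidates = [p for p in pieces if p.length_mm >= required_mm]
    if not candidates:
        return None
    # Assumed rule: the shortest qualifying piece wastes the least material.
    return min(candidates, key=lambda p: p.length_mm)


# Made-up detections, for illustration only.
detected = [
    Piece(0, 120.0, 80.0, 15.0, 310.0),
    Piece(1, 340.0, 150.0, -30.0, 455.0),
    Piece(2, 200.0, 260.0, 90.0, 520.0),
]

chosen = select_piece(detected, required_mm=400.0)
if chosen is not None:
    print(chosen.piece_id, chosen.x_mm, chosen.y_mm, chosen.angle_deg)
```

Returning `None` when nothing qualifies lets the caller decide whether to ask for a different length or to stop.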

A robotic arm then retrieves the qualifying pieces and positions them accordingly, placing them over a cutting line when dimensional adjustment is required prior to reuse.

From Computer Vision to Robotic Motion

VISION MODULE

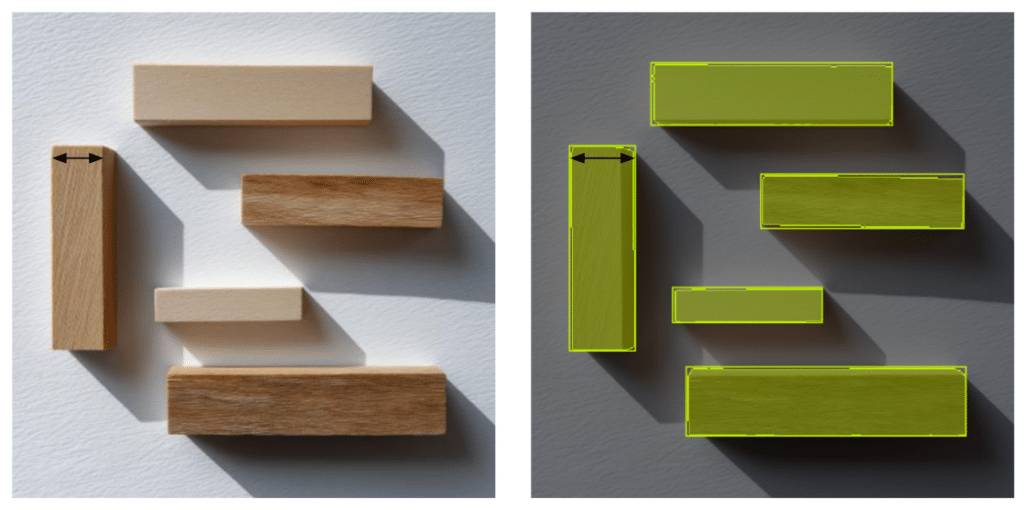

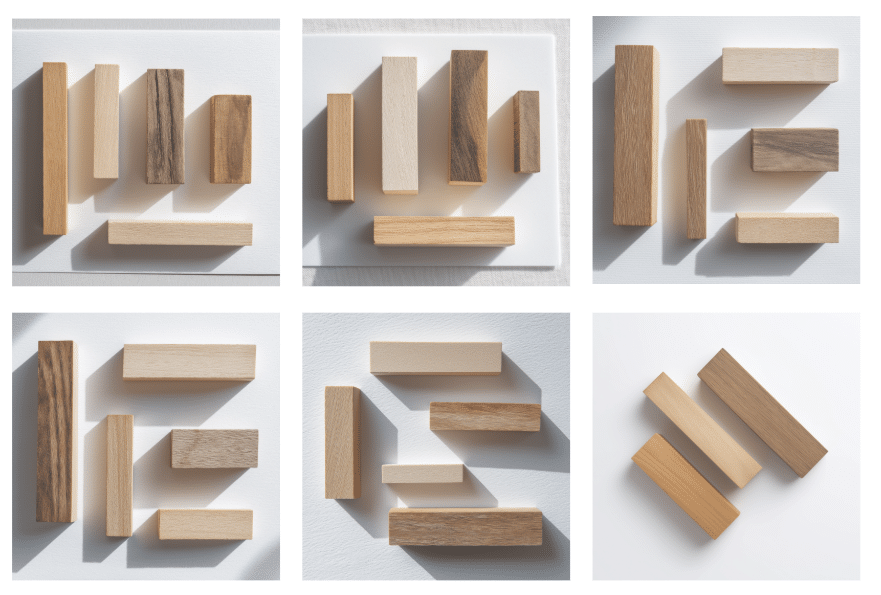

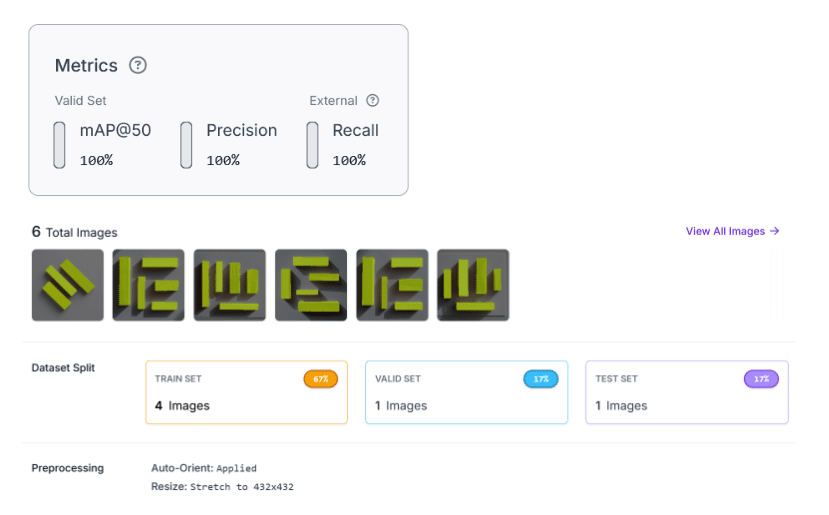

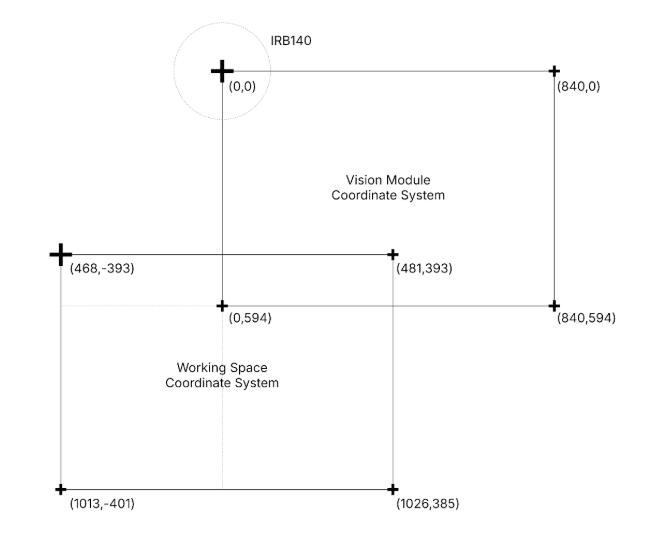

To detect the wooden components, we built a pipeline that combines deep learning with classical computer vision techniques. The instance segmentation model was trained on a small dataset of just six AI-generated images, which suggests that synthetic data can be sufficient for rapid prototyping. Once the objects are identified, ArUco markers serve as fiducial references for computing a homography. This transformation maps coordinates from the camera image directly to the robot's workspace, giving a reliable link between the perception system and the robotic execution.
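The coordinate mapping can be illustrated with a planar homography fitted to four marker correspondences. This is a sketch under stated assumptions, not the project's implementation: the pixel and millimetre values are invented, the function names are hypothetical, and the 8x8 linear system is solved in plain Python so the example has no dependencies. In an OpenCV-based pipeline the same job is normally done with `cv2.findHomography` and `cv2.perspectiveTransform`.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate marker layout")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_homography(src, dst):
    """Fit a 3x3 homography (h33 fixed to 1) from four point pairs."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        b.append(u)
        a.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(h, point):
    """Map an image point (pixels) to workspace coordinates (mm)."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return (u, v)


# Invented marker centres: image pixels and matching robot-frame positions.
marker_px = [(100.0, 100.0), (500.0, 120.0), (520.0, 400.0), (90.0, 380.0)]
marker_mm = [(0.0, 0.0), (400.0, 0.0), (400.0, 300.0), (0.0, 300.0)]

H = fit_homography(marker_px, marker_mm)
piece_mm = apply_homography(H, (300.0, 250.0))  # a detected piece centre
print(piece_mm)
```

A homography is only valid for points lying on the marker plane, so heights above the table are not corrected by this mapping.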

This first stage produces a CSV file containing the coordinates of each piece, which the next stage needs in order to locate them.

PLANNING MODULE

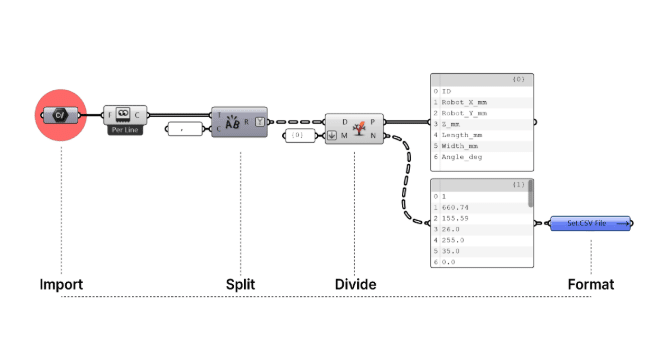

To automate robotic path planning, we wrote a custom Grasshopper script that reads the CSV data and extracts the identifier, spatial coordinates, and dimensions of each wooden component. The workflow runs in a fixed sequence: it imports and parses the tabular data, constructs picking and placing planes according to the required dimensions, and generates safe trajectories with intermediate waypoints.
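The sequence above can be sketched outside Grasshopper in plain Python. Everything specific here is an assumption for illustration: the CSV column names, the clearance and grasp heights, the convention that the stored coordinates are the piece centre, and a cutting line perpendicular to the X axis at `cut_x_mm`. The actual Grasshopper definition is not shown in this post.

```python
import csv
import io

SAFE_Z_MM = 150.0  # assumed clearance height for travel moves
GRIP_Z_MM = 20.0   # assumed height at which the gripper closes

# Hypothetical CSV layout; the real file's columns may differ.
CSV_TEXT = """id,x_mm,y_mm,angle_deg,length_mm
0,120.0,80.0,15.0,310.0
1,340.0,150.0,-30.0,455.0
"""


def read_pieces(text):
    """Parse the vision CSV into a list of dictionaries."""
    pieces = []
    for row in csv.DictReader(io.StringIO(text)):
        piece = {"id": int(row["id"])}
        for key in ("x_mm", "y_mm", "angle_deg", "length_mm"):
            piece[key] = float(row[key])
        pieces.append(piece)
    return pieces


def place_target(piece, required_mm, cut_x_mm, cut_y_mm):
    """Centre position that leaves required_mm on the near side of the cut.

    The piece is laid along +X, so its near end sits at cut_x_mm - required_mm.
    """
    centre_x = cut_x_mm - required_mm + piece["length_mm"] / 2.0
    return (centre_x, cut_y_mm, 0.0)  # (x, y, angle in degrees)


def pick_and_place_waypoints(piece, place):
    """Approach, grasp, retreat, travel, release, retreat."""
    px, py, pa = piece["x_mm"], piece["y_mm"], piece["angle_deg"]
    qx, qy, qa = place
    return [
        (px, py, SAFE_Z_MM, pa),  # above the piece
        (px, py, GRIP_Z_MM, pa),  # grasp
        (px, py, SAFE_Z_MM, pa),  # lift
        (qx, qy, SAFE_Z_MM, qa),  # above the cutting line
        (qx, qy, GRIP_Z_MM, qa),  # release
        (qx, qy, SAFE_Z_MM, qa),  # retreat
    ]


pieces = read_pieces(CSV_TEXT)
target = place_target(pieces[1], required_mm=400.0, cut_x_mm=600.0, cut_y_mm=50.0)
waypoints = pick_and_place_waypoints(pieces[1], target)
for wp in waypoints:
    print(wp)
```

Routing every move through a point at `SAFE_Z_MM` is one simple way to obtain the intermediate waypoints mentioned above; a real cell would also need collision and reachability checks.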

By integrating this data with a parametric definition of cutting axes, the system ensures fluid robotic execution, effectively minimizing human error while optimizing precision in material interaction.

Simulation – Generation of pick-and-place trajectories based on processed coordinates:

EXECUTION MODULE

The final phase of the project begins with the physical calibration of the workspace, which is essential to establish an exact correspondence between the virtual and physical environments. Using ArUco markers as fiducial reference points, we compare the digitally detected coordinates with the actual robot positions.
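One way to quantify this comparison is to compute the distance between each detected marker position and the position the robot actually reaches, then summarize the distances as a root-mean-square error. The sketch below uses invented coordinates and an arbitrary 2 mm acceptance threshold; neither comes from the project.

```python
import math


def calibration_errors(detected, measured):
    """Euclidean distance (mm) between each detected and measured point."""
    return [
        math.hypot(dx - mx, dy - my)
        for (dx, dy), (mx, my) in zip(detected, measured)
    ]


def rmse(errors):
    """Root-mean-square of a list of per-point errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


# Invented values: marker positions from vision vs. touched by the robot.
detected_mm = [(0.0, 0.0), (400.0, 0.0), (400.0, 300.0), (0.0, 300.0)]
measured_mm = [(3.0, 4.0), (400.0, 0.0), (400.0, 300.0), (0.0, 300.0)]

errors = calibration_errors(detected_mm, measured_mm)
overall = rmse(errors)

TOLERANCE_MM = 2.0  # assumed acceptance threshold
calibration_ok = overall <= TOLERANCE_MM
print(errors, overall, calibration_ok)
```

Looking at the per-marker errors as well as the aggregate helps distinguish a single misplaced marker from a systematic offset or scale error.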

Once this spatial alignment is validated, we proceed to execute the program generated in the previous phase via our custom Grasshopper script. This process culminates in the robotic arm selecting and orienting the wooden components with millimetric precision into the correct cutting position, thereby materializing the complete integration of visual perception, parametric data processing, and robotic execution.

CONCLUSIONS AND OPPORTUNITIES FOR IMPROVEMENT

The development of this vision and robotic control system successfully integrated visual perception with parametric execution within the Grasshopper environment, achieving precise automation in the manipulation of wooden components. However, evaluating the results against our initial proposal revealed some limitations. Specifically, the automatic generation of the Digital Report and the Decision Log was not implemented in this iteration, and both remain key features for future development.

Furthermore, we have identified critical opportunities for optimization. A recurring challenge during implementation was the precision in calibration and spatial referencing between the digital and physical environments. To mitigate these deviations, our primary proposed improvement involves refining the training dataset; by limiting the learning process to the top layer of objects, we can significantly increase accuracy in both dimensional estimation and the actual positioning of the components. This technical evolution will not only bolster the system’s robustness but also facilitate a more seamless transition toward complex construction environments.