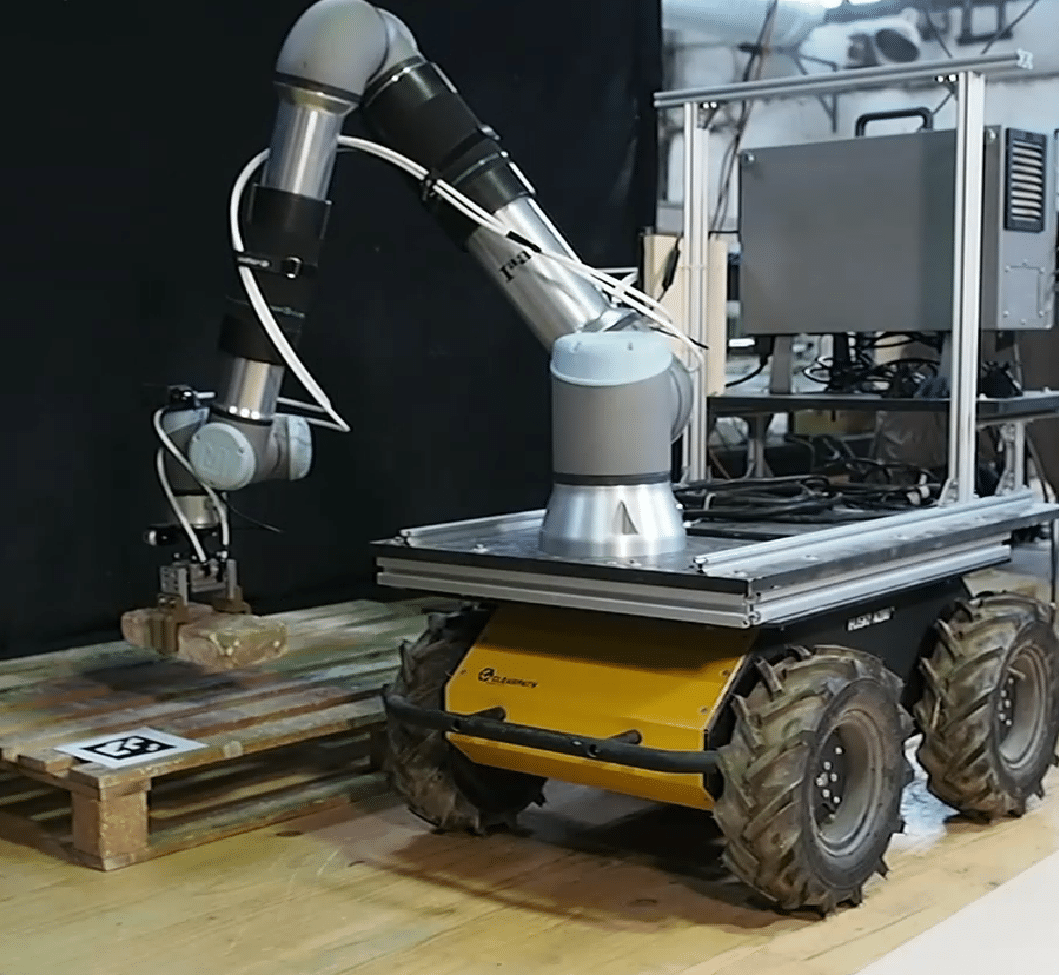

Construction robotics presents a distinct set of challenges that go beyond classical industrial automation. Unlike structured factory environments, construction sites are large-scale, partially unstructured, and continuously evolving. To operate effectively in such settings, mobile robotic systems must perceive and understand existing building elements, localise robustly across large workspaces, and continue executing tasks without interruption despite changes in position and context.

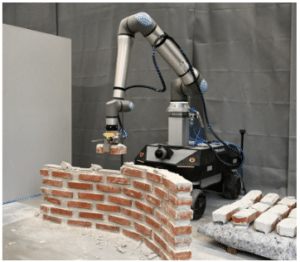

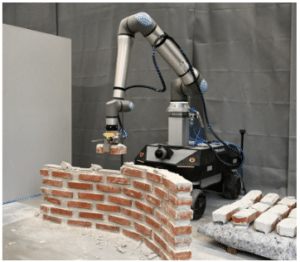

This seminar explores the software and algorithmic foundations that enable such capabilities. Using the robotic disassembly and reassembly of existing brickwork as a guiding case study, we will examine how spatial AI, mobile manipulation, digital twin generation, and AI-supported design can be integrated into a coherent robotic pipeline. The workflow encompasses the autonomous perception of bricks within human-built structures, robotic disassembly from multiple locations, on-the-fly generation of a digital twin, AI-supported design of new assemblies using the reclaimed material, and robotic reassembly. All stages are coordinated through ROS-based systems and interfaced through Rhino/Grasshopper. We further explore how AI is trained and applied within this pipeline, including deep learning–based object detection and pose estimation, training strategies using a custom photorealistic synthetic data generator, and the potential of multimodal AI for structured, data-driven design generation.

Learning Objectives

On completing the course, students will be able to:

- Understand the fundamental software and algorithmic components required for mobile robotic operation in construction environments, including perception, localisation, planning, and manipulation.

- Explain how spatial AI and deep learning–based object detection and pose estimation enable robotic understanding of existing human-built structures.

- Understand how photorealistic synthetic data generation can be used to train and evaluate deep learning models for object detection and pose estimation in robotic systems.

- Understand the principles of graph-based modelling for representing assemblies and supporting robotic disassembly and reassembly workflows.

- Understand how multimodal AI systems can be used to generate structured, graph-based design proposals by combining spatial data, material constraints, and high-level design intent.