The global construction industry currently faces a significant sustainability crisis, characterized by the massive generation of material waste that often bypasses recycling streams. This challenge stems primarily from a technological gap: the lack of real-time identification, sorting, and decision-making systems on active demolition sites. Without an efficient way to distinguish between various debris types, valuable resources are frequently lost to landfills.

To bridge this gap, our initiative focuses on developing an automated, AI-powered material recognition and sorting pipeline. By integrating advanced computer vision and machine learning algorithms, this system would enable faster on-site material separation, ensuring that materials like masonry and structural components are accurately identified for salvage. This shift toward automation is more than a technical upgrade; it is a fundamental catalyst for data-driven circular construction workflows. By transforming disorganized rubble into categorized, reusable assets, we can significantly increase reuse and recycling rates. This approach moves the industry away from traditional linear consumption and toward a circular economy, where the life cycle of construction materials is extended, environmental impacts are minimized, and “waste” is redefined as a valuable resource for future builds.

Project Overview

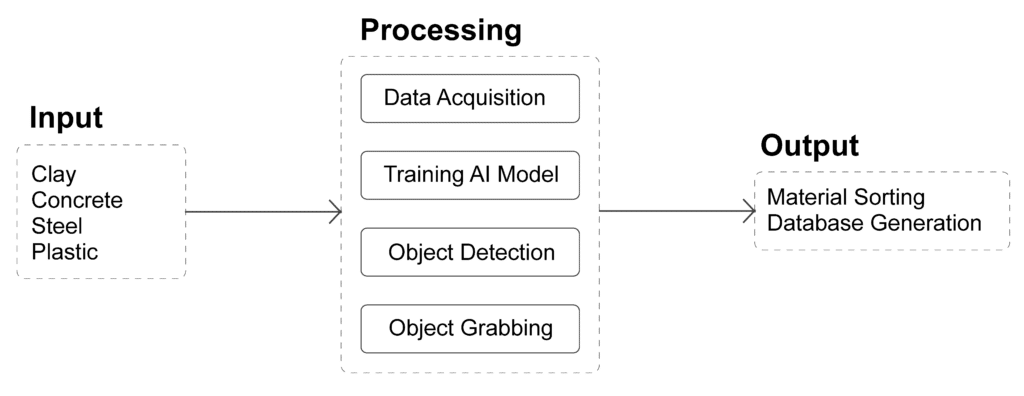

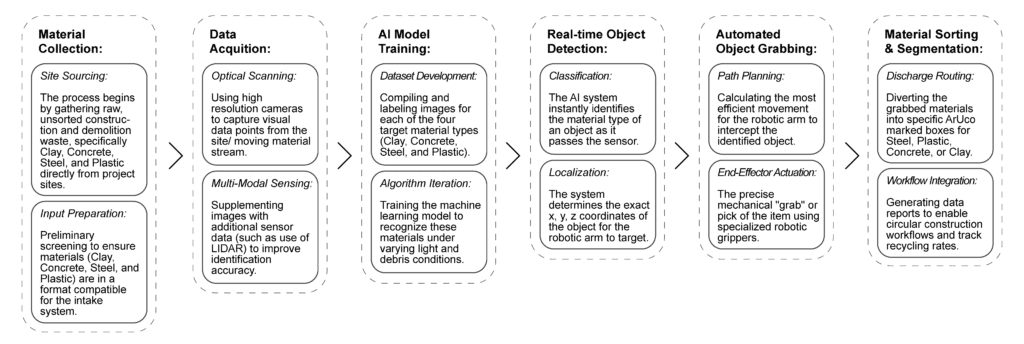

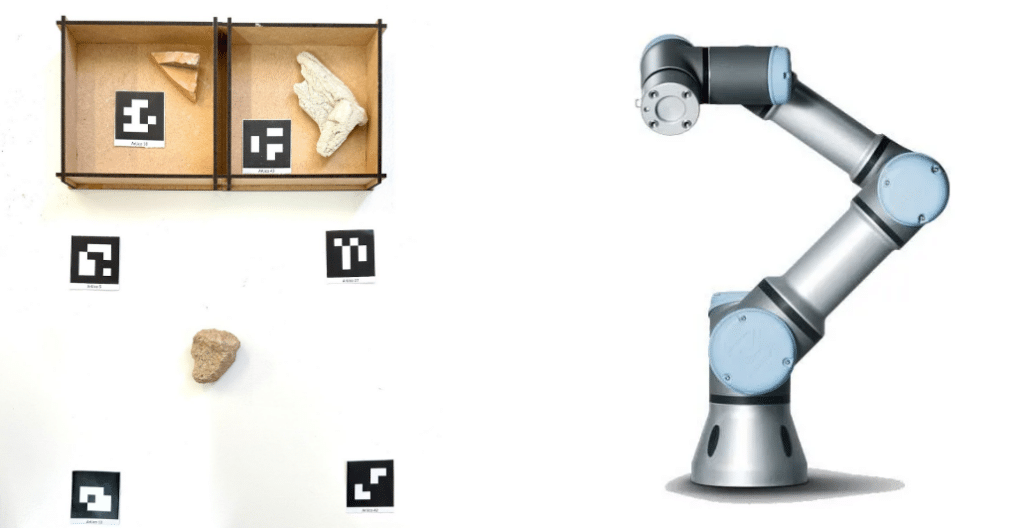

This project proposes an automated, AI-powered material recognition and sorting pipeline to facilitate the circular reuse of construction and demolition debris. An integrated computer vision system monitors a workspace containing mixed waste (specifically clay brick, concrete, steel, and plastic) and identifies individual components through a custom-trained object detection model.

Each detected element is analyzed to determine its material classification and precise spatial orientation. The system processes this visual data through a matching logic that categorizes the debris, ensuring high-purity streams for architectural salvage. This information is then translated into actionable task planning, bridging the gap between digital perception and physical manipulation. A robotic arm subsequently retrieves the categorized pieces and executes precise pick-and-place maneuvers, sorting the materials into designated zones for immediate reintegration into the construction lifecycle.

Workflow

Project Infrastructure

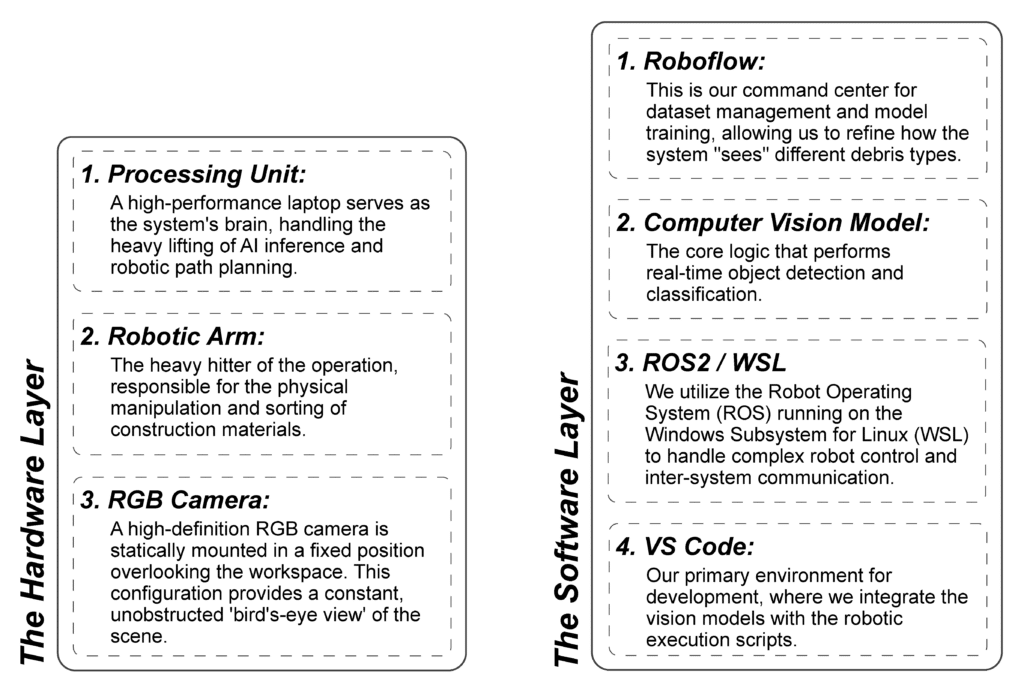

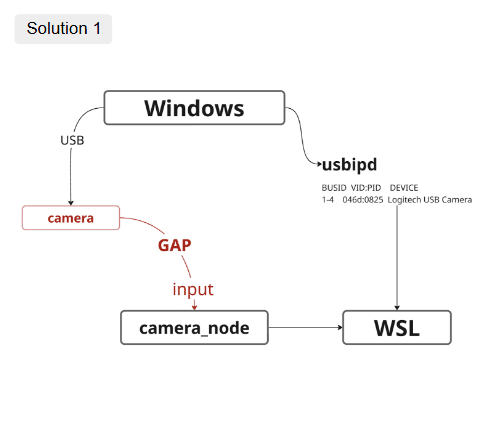

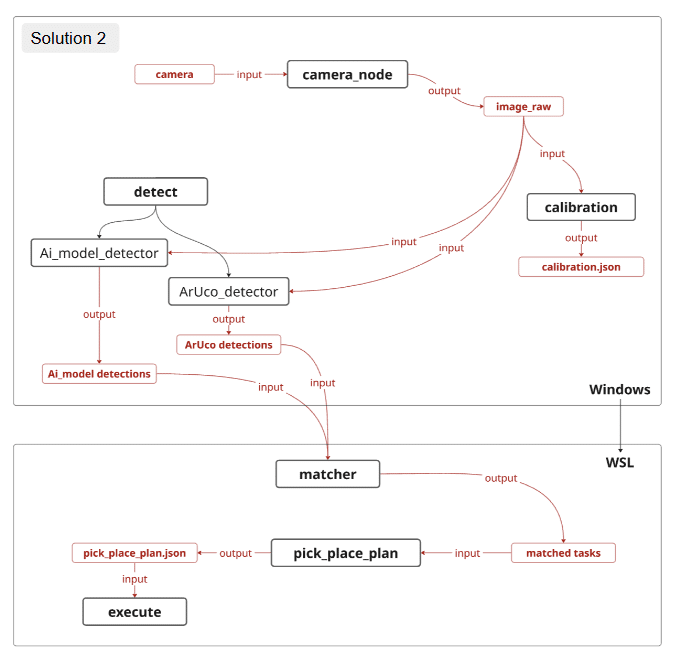

To successfully bridge the gap between AI perception and physical sorting, our project relies on a robust integration of hardware and software. We’ve structured our technical requirements into these two primary layers, hardware and software, to ensure the system is both powerful enough for real-time processing and flexible enough for iterative development.

AI Model Training

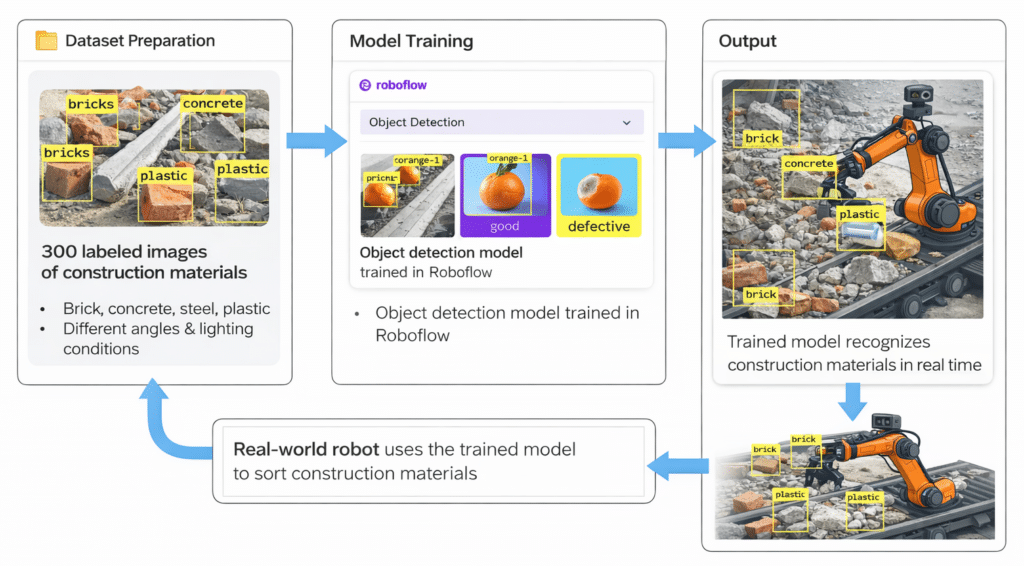

To enable the robotic system to recognize construction materials, we trained a basic AI object detection model. This model helps the system identify different materials from camera images and supports the sorting process.

Dataset Preparation

The first step was preparing a dataset for training the model. We collected 300 images of construction materials and annotated them manually so the model could learn where each object appears in the image. To make the dataset more representative of real conditions, the images included:

- Different camera angles

- Various lighting conditions

- Some partially hidden objects (occlusions)

The dataset contained several material categories, including:

- Brick

- Concrete

- Steel

- Plastic

This variety helps the model recognize materials in different real-world situations.

Model Training

After preparing the dataset, we trained an object detection model using the Roboflow platform. Roboflow allowed us to upload images, label them, and run a basic training process.

The model was trained to balance two things:

- Accuracy – correctly identifying materials

- Speed – running fast enough for real-time detection

Output

The result of this process is a trained model that can recognize construction materials in real time.

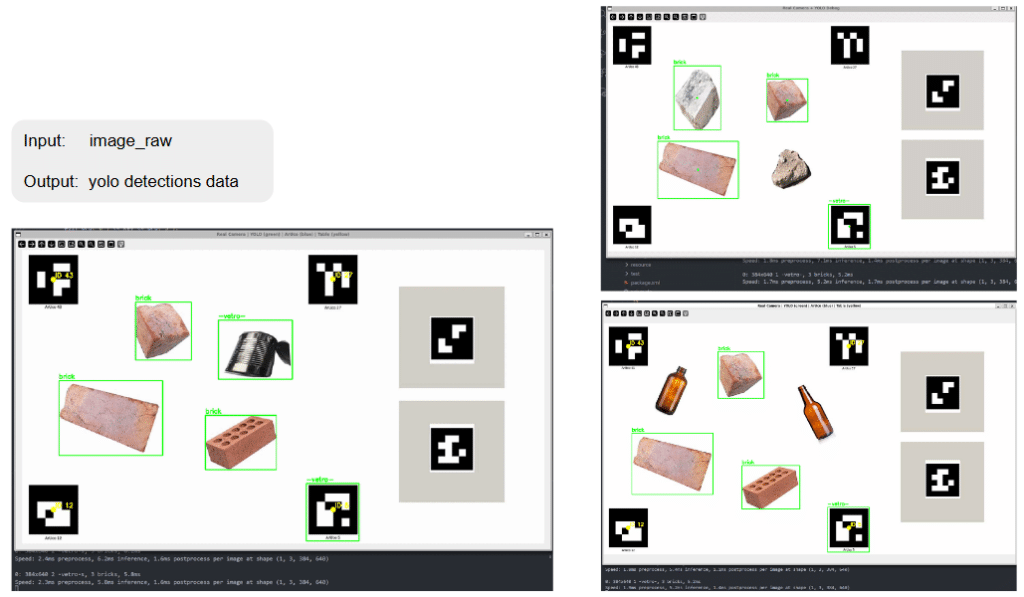

Real-Time Object Detection

Vision System

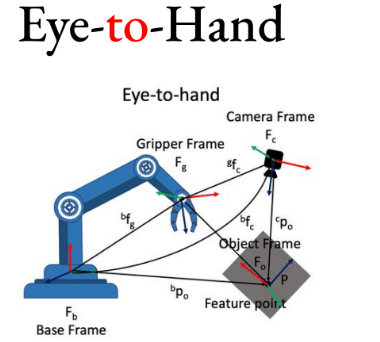

An RGB camera is mounted in a fixed position overlooking the workspace and continuously captures images of the working area. This allows the system to monitor the materials in real time and provides visual data for the detection process.

Detection Pipeline

The captured images are sent to the AI detection model, which analyzes the scene and identifies different materials. During this process, the system performs several tasks:

- Live material identification from the camera feed

- Bounding box generation around detected objects

- Material classification labels (e.g., brick, concrete, plastic)

- Confidence score evaluation to estimate how certain the model is about each detection
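The detection outputs listed above can be pictured as one small record per object. The sketch below is only an illustration: the `Detection` layout, the `filter_detections` helper, and the 0.5 threshold are assumptions made for this example, not the project's actual data format or settings. It shows how confidence scores could be used to discard uncertain detections before they reach the robot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One detected object in a camera frame (hypothetical record layout)."""
    label: str         # material class, e.g. "brick"
    confidence: float  # model certainty in the range [0, 1]
    x: float           # bounding-box centre in pixels
    y: float
    width: float       # bounding-box size in pixels
    height: float


def filter_detections(detections, min_confidence=0.5):
    """Keep detections at or above the threshold, most confident first."""
    kept = [d for d in detections if d.confidence >= min_confidence]
    return sorted(kept, key=lambda d: d.confidence, reverse=True)


# Made-up example frame with three detections.
frame = [
    Detection("brick", 0.91, 320, 240, 80, 40),
    Detection("plastic", 0.32, 100, 90, 30, 30),
    Detection("concrete", 0.67, 500, 310, 120, 90),
]

for d in filter_detections(frame):
    print(f"{d.label}: {d.confidence:.2f}")
```

With this threshold the low-confidence plastic detection is dropped, so only the brick and concrete detections would be passed on.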

The overall workflow of the system follows a simple pipeline:

Vision system → AI model → robotic control logic

Robotic Object Grabbing & Placement

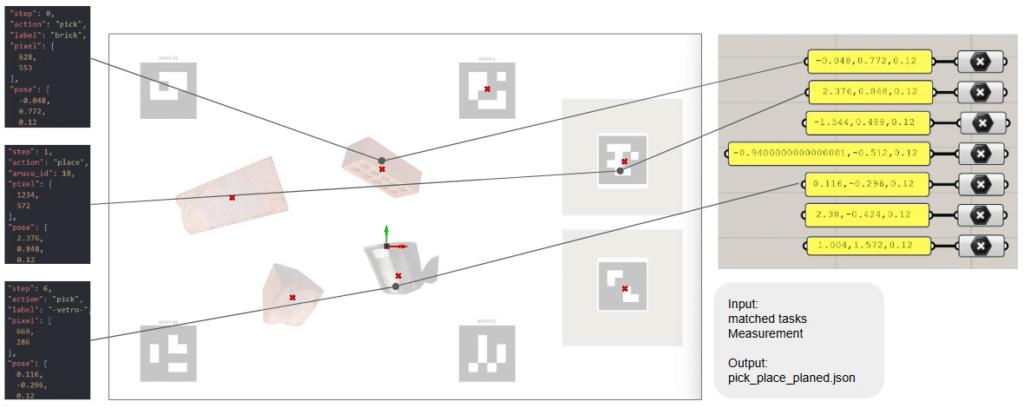

After the materials are detected by the vision system, the robotic arm performs the grabbing and placement tasks. The robot receives information about the detected objects and decides how to pick and place them correctly.

Robotic Logic

The robotic control system processes the information from the vision model and plans the movement of the robotic arm. The main steps include:

- Receiving object location from the vision system

- Receiving the material selection command for sorting

- Calculating an appropriate grasp point on the object

- Picking the correct object from the mixed pile of materials
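As a rough illustration of the steps above, the sketch below selects one object of the requested material and estimates a grasp point. It assumes, purely for the example, that detections arrive as dictionaries with a `box` given as pixel corners, and that the centre of the bounding box is an acceptable grasp point. The real system may use a more refined grasp strategy; `select_target` and `grasp_point` are hypothetical names.

```python
def select_target(detections, material):
    """Return the most confident detection of the requested material, or None."""
    candidates = [d for d in detections if d["label"] == material]
    if not candidates:
        return None
    return max(candidates, key=lambda d: d["confidence"])


def grasp_point(detection):
    """Approximate the grasp point as the bounding-box centre, in pixels."""
    x_min, y_min, x_max, y_max = detection["box"]
    return ((x_min + x_max) / 2, (y_min + y_max) / 2)


# Made-up detections: box = (x_min, y_min, x_max, y_max) in pixels.
detections = [
    {"label": "brick", "confidence": 0.88, "box": (100, 100, 180, 140)},
    {"label": "brick", "confidence": 0.95, "box": (300, 200, 380, 240)},
    {"label": "steel", "confidence": 0.90, "box": (50, 300, 250, 320)},
]

target = select_target(detections, "brick")
if target is not None:
    print("grasp at pixel", grasp_point(target))
```

Picking the most confident candidate first is one simple policy; an alternative would be to pick the object nearest to the gripper or the one least occluded.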

Placement

Once the object is picked up, the robotic arm places it in the correct location based on its material type.

- Depositing the material into the assigned container

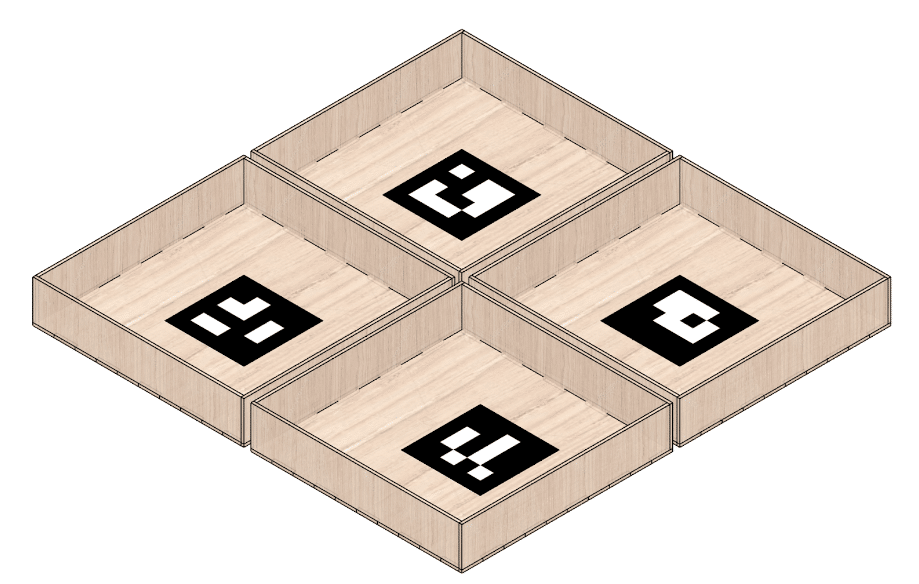

- Using grid-based positioning for organized transport or storage

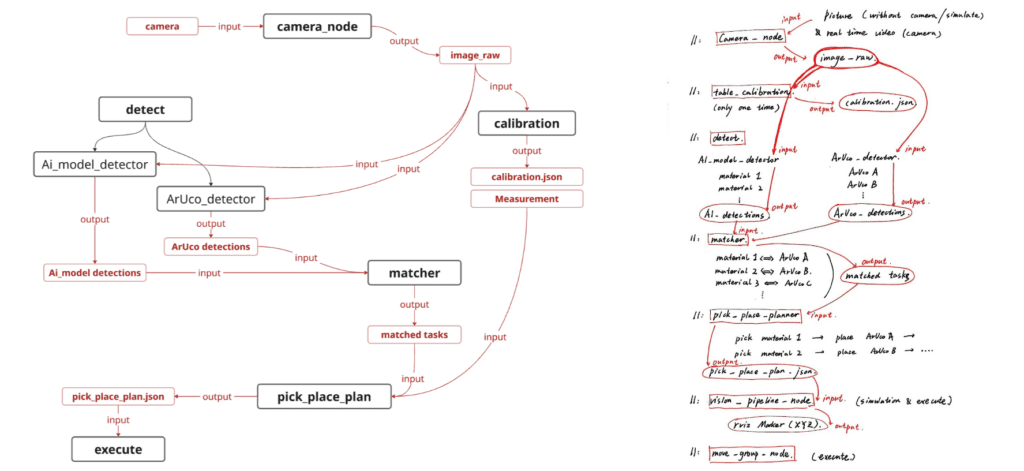
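Grid-based positioning can be sketched as a mapping from "the n-th object placed" to a drop coordinate. Every number below (grid origin, three columns, 0.12 m spacing) and the `grid_slot` helper itself are placeholders chosen for illustration, not the values used in the project.

```python
def grid_slot(index, origin=(0.40, -0.20), columns=3, pitch=0.12):
    """Return the (x, y) drop position in metres for the index-th object.

    Slots are filled row by row: columns advance along y, rows along x
    (an assumed layout). Results are rounded to 0.1 mm.
    """
    if index < 0:
        raise ValueError("index must be non-negative")
    row, col = divmod(index, columns)
    x = origin[0] + row * pitch
    y = origin[1] + col * pitch
    return (round(x, 4), round(y, 4))


# Drop positions for the first five objects of one material.
for i in range(5):
    print(i, grid_slot(i))
```

Keeping one counter per material would let each container or pallet fill up independently.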

Framework: Vision-based Pick-and-Place Pipeline

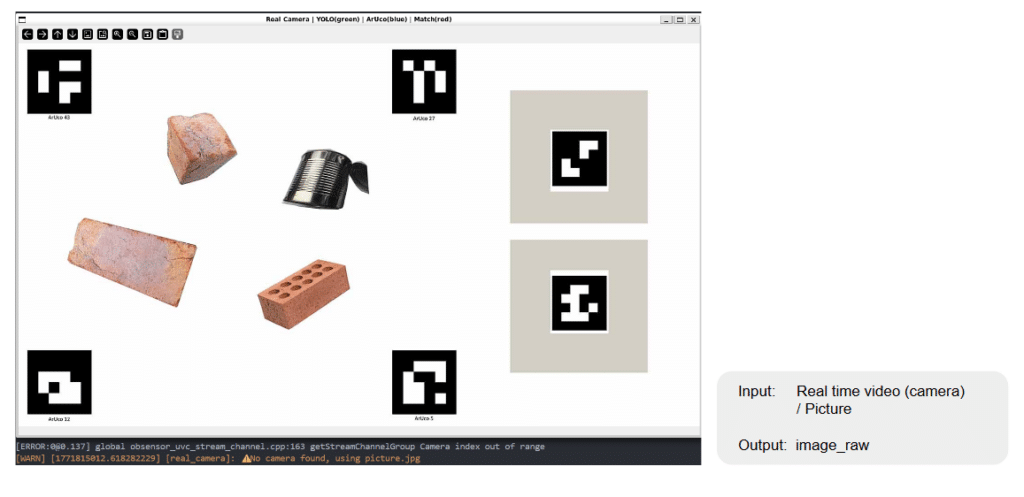

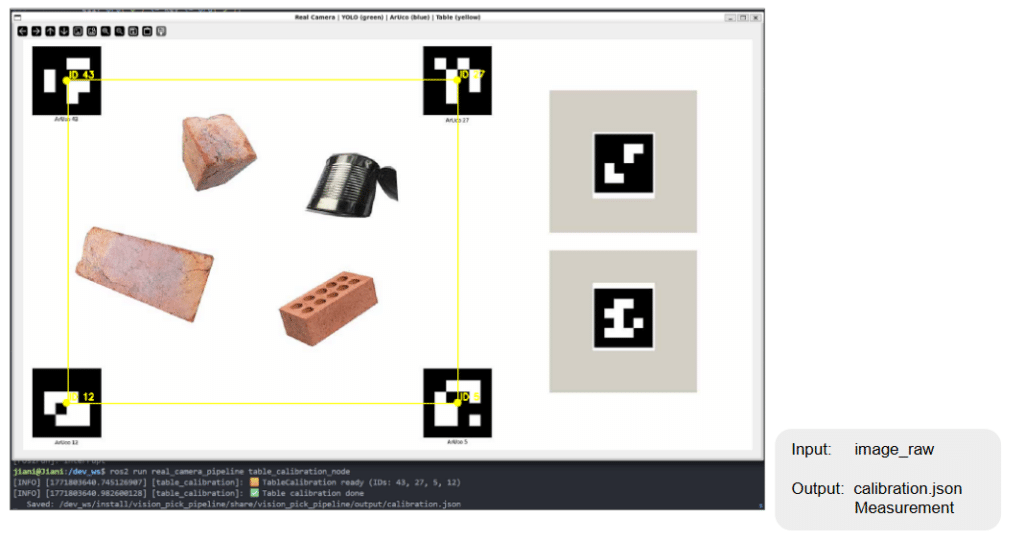

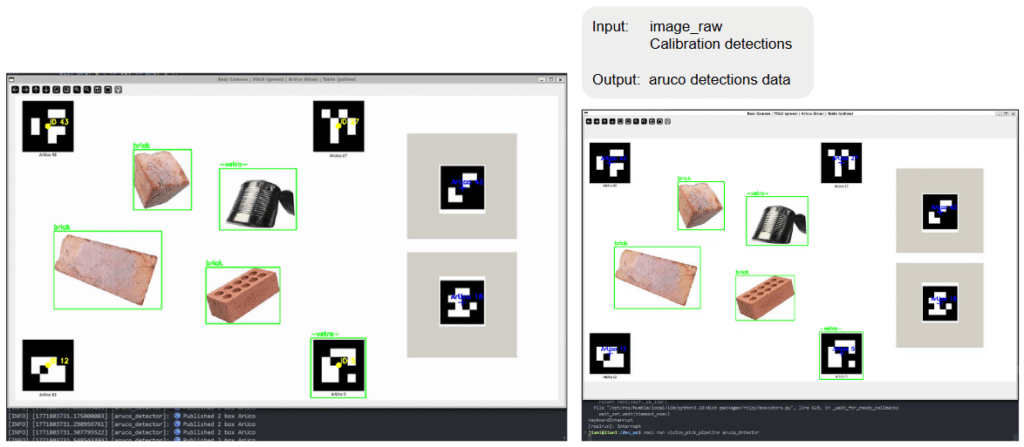

Our pick-and-place pipeline serves as the central nervous system of the project, seamlessly connecting digital perception to robotic action. The process begins at the Camera Node, where raw imagery is captured and aligned with the workspace through a one-time Table Calibration. This ensures that 2D visual data is accurately mapped to 3D physical coordinates.
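One common way to express such a table calibration is a 3x3 homography that maps image pixels onto the table plane, with the height taken as the known table surface. The sketch below assumes that approach and uses a made-up matrix `H`; how the project actually stores its calibration is not stated, and `pixel_to_table` is a hypothetical helper.

```python
def pixel_to_table(H, u, v):
    """Map pixel (u, v) to table-plane coordinates using a 3x3 homography H."""
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel maps to a point at infinity")
    return (x / w, y / w)


# Hypothetical calibration result: 1 pixel = 1 mm, with the image origin
# shifted by (0.10, -0.30) m relative to the table frame.
H = [
    [0.001, 0.0, 0.10],
    [0.0, 0.001, -0.30],
    [0.0, 0.0, 1.0],
]

x, y = pixel_to_table(H, 320, 240)
print(f"table position: x={x:.3f} m, y={y:.3f} m")
```

Because the camera is fixed, the matrix only needs to be estimated once and can then be reused for every frame.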

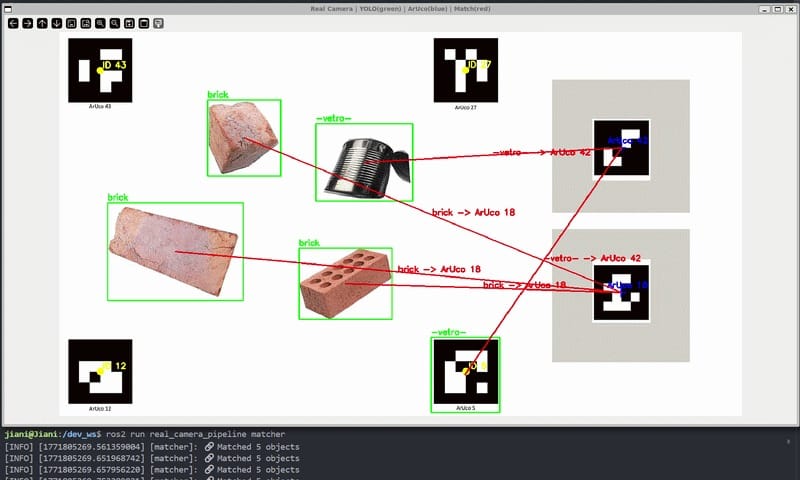

The “Detect” phase then runs a dual-track analysis: the AI Model Detector classifies material types, while the ArUco Detector establishes precise spatial markers. These data streams converge in the Matcher, which applies sorting rules to specific objects. Once tasks are matched, the Pick-and-Place Planner generates a final execution script. Before physical movement, the system validates the path in a digital environment, ultimately sending the command to the Move Group Node for precise manipulation. This structured framework ensures that every robotic “grab” is backed by intelligent, data-driven logic.
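The Matcher step could look roughly like the sketch below. It assumes, for illustration only, that each object carries an ArUco marker whose centre falls inside the object's bounding box, and that sorting rules are a simple material-to-zone lookup. The rule table, field names, and zone names are all hypothetical.

```python
# Hypothetical sorting rules: material class -> placement zone.
SORTING_RULES = {
    "brick": "zone_brick",
    "concrete": "zone_concrete",
    "steel": "zone_steel",
    "plastic": "zone_plastic",
}


def marker_inside(box, centre):
    """True if a marker centre lies within a (x_min, y_min, x_max, y_max) box."""
    x_min, y_min, x_max, y_max = box
    cx, cy = centre
    return x_min <= cx <= x_max and y_min <= cy <= y_max


def match(detections, markers, rules):
    """Pair each detection with a marker inside its box and a target zone."""
    tasks = []
    used = set()  # marker ids already assigned to a detection
    for det in detections:
        zone = rules.get(det["label"])
        if zone is None:
            continue  # no sorting rule for this material, so skip it
        for marker in markers:
            if marker["id"] in used:
                continue
            if marker_inside(det["box"], marker["centre"]):
                used.add(marker["id"])
                tasks.append({
                    "marker_id": marker["id"],
                    "material": det["label"],
                    "place_zone": zone,
                })
                break
    return tasks


# Made-up inputs from the two detectors, in pixel coordinates.
detections = [
    {"label": "brick", "box": (100, 100, 200, 160)},
    {"label": "steel", "box": (300, 50, 420, 90)},
    {"label": "unknown", "box": (500, 300, 560, 360)},
]
markers = [
    {"id": 7, "centre": (150, 130)},
    {"id": 3, "centre": (360, 70)},
    {"id": 9, "centre": (530, 330)},
]

for task in match(detections, markers, SORTING_RULES):
    print(task)
```

Each resulting task names which marked object to pick and where to place it, which is the kind of input a pick-and-place planner would need.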

Robotic Object Grabbing & Placement

Camera Node

Calibration

Detect (AI_model_detector)

Calibration (ArUco_detector)

Match

Pick and Place Plan

Gap to Real

Results, Limitations & Improvements

Achievements

- Successful real-time material detection

- Automated sorting workflow

- Functional human-AI collaboration

Limitations

- Dataset size

- Material surface similarity

- Edge cases (damaged or mixed materials)

Future Improvements

- Larger and more diverse datasets

- Additional material classes

- Full autonomy mode

- Integration with material reuse databases

- CO₂ and cost impact calculations