RE:PAIR – Perception-Driven Closed-Loop Robotic Repair

Faculty: Hamid Peiro and Aleksandra Kraeva (Sasha)

Mission Statement:

RE:PAIR is a perception-driven, decision-visible closed-loop robotic system that detects cracks, repairs them using robotic 3D printing, and autonomously verifies the result.

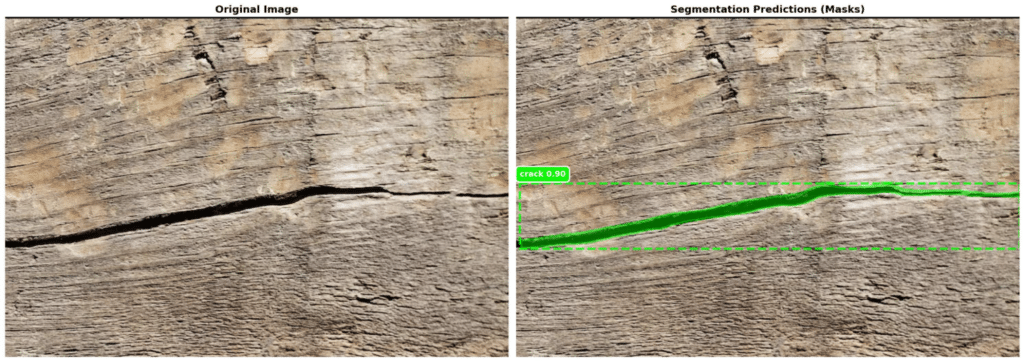

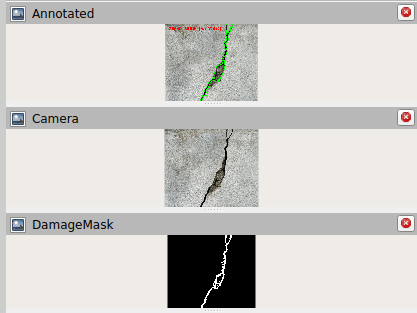

1) Crack detection 2) Segmentation and masking 3) Centreline and points

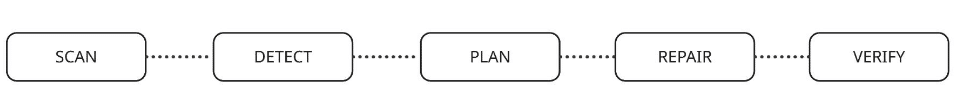

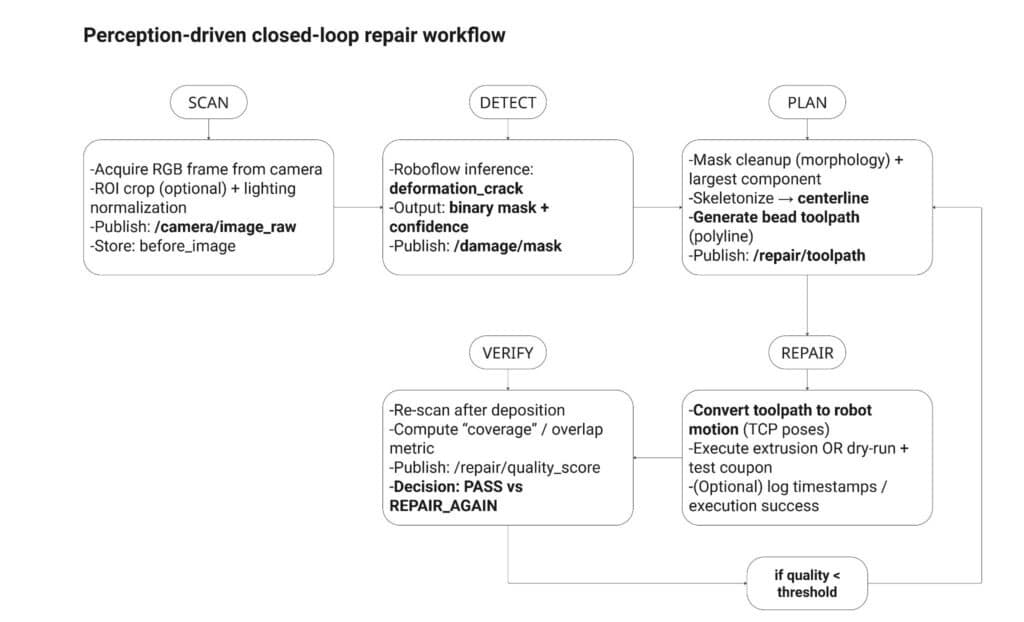

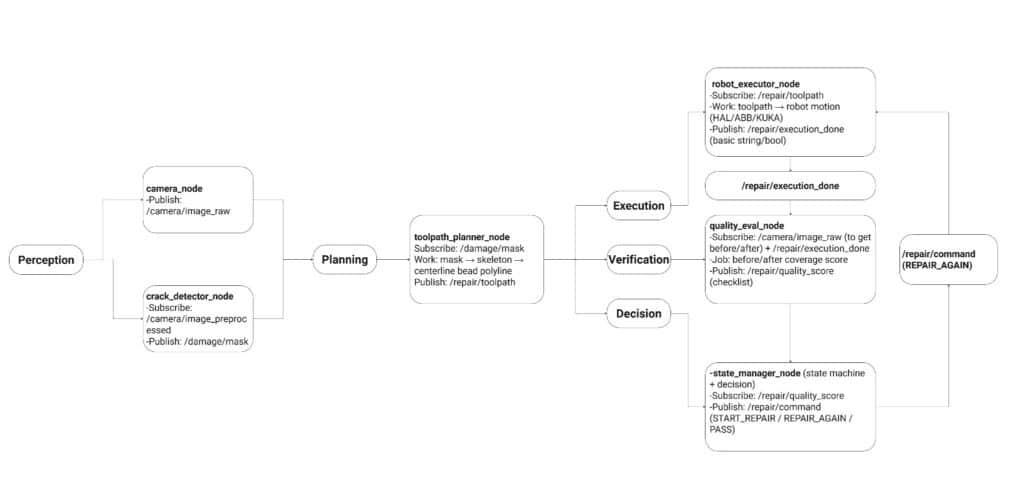

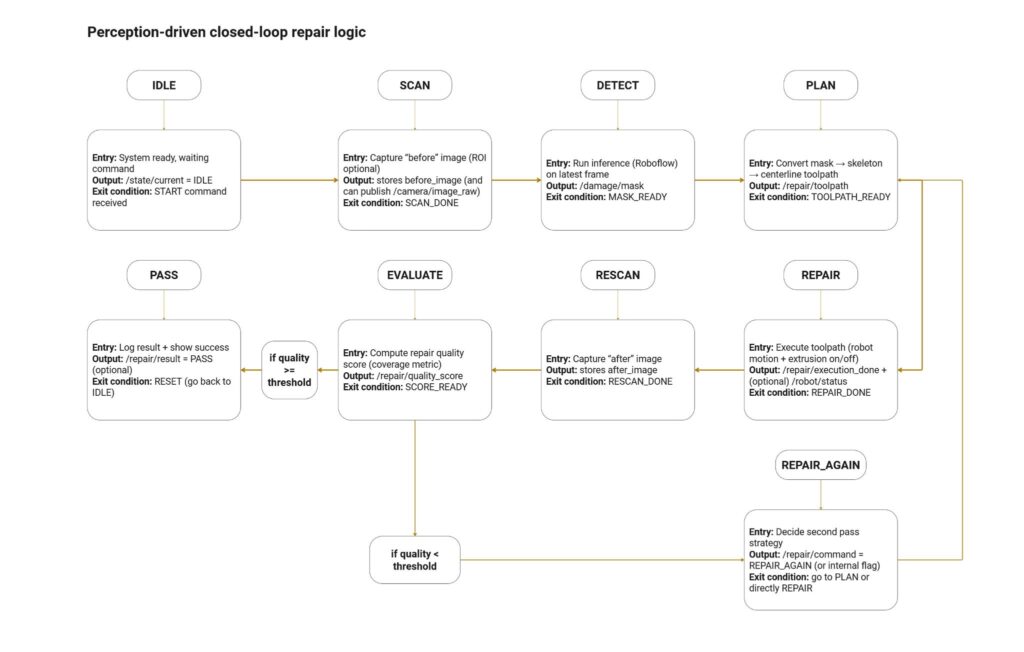

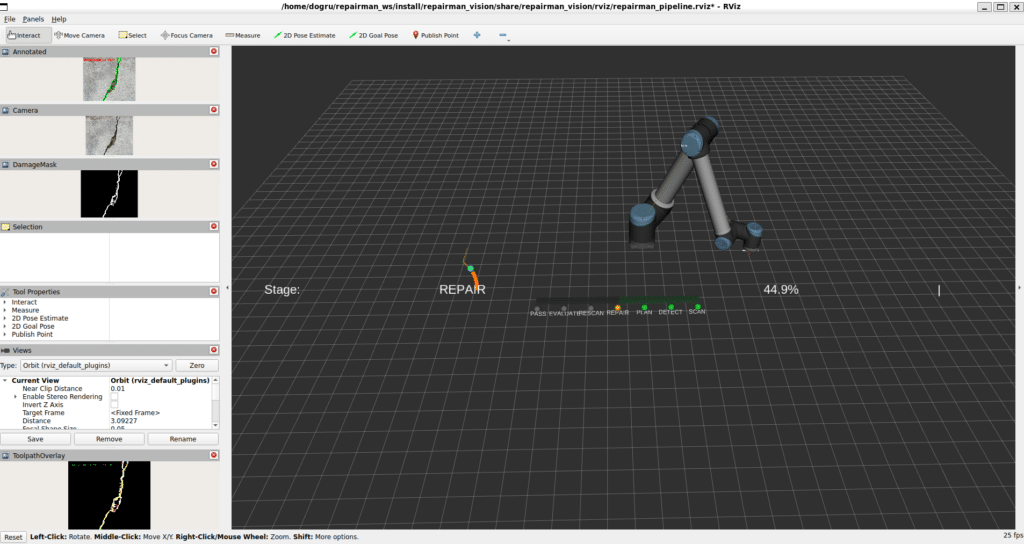

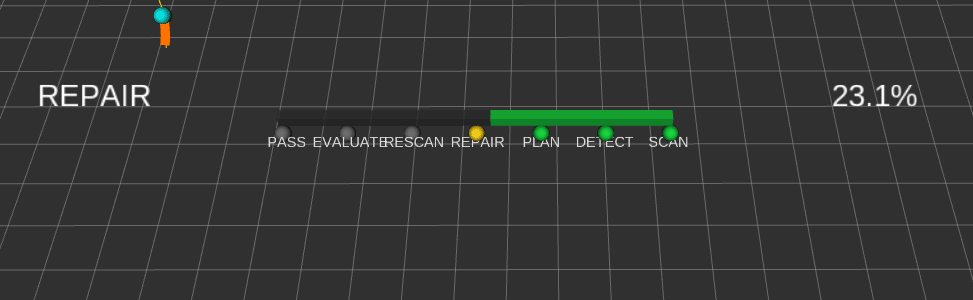

First, we laid out the overall workflow of the project, the node architecture within ROS, and the loop flow of the perception logic itself.

Workflow

ROS2 System Architecture

Perception loop

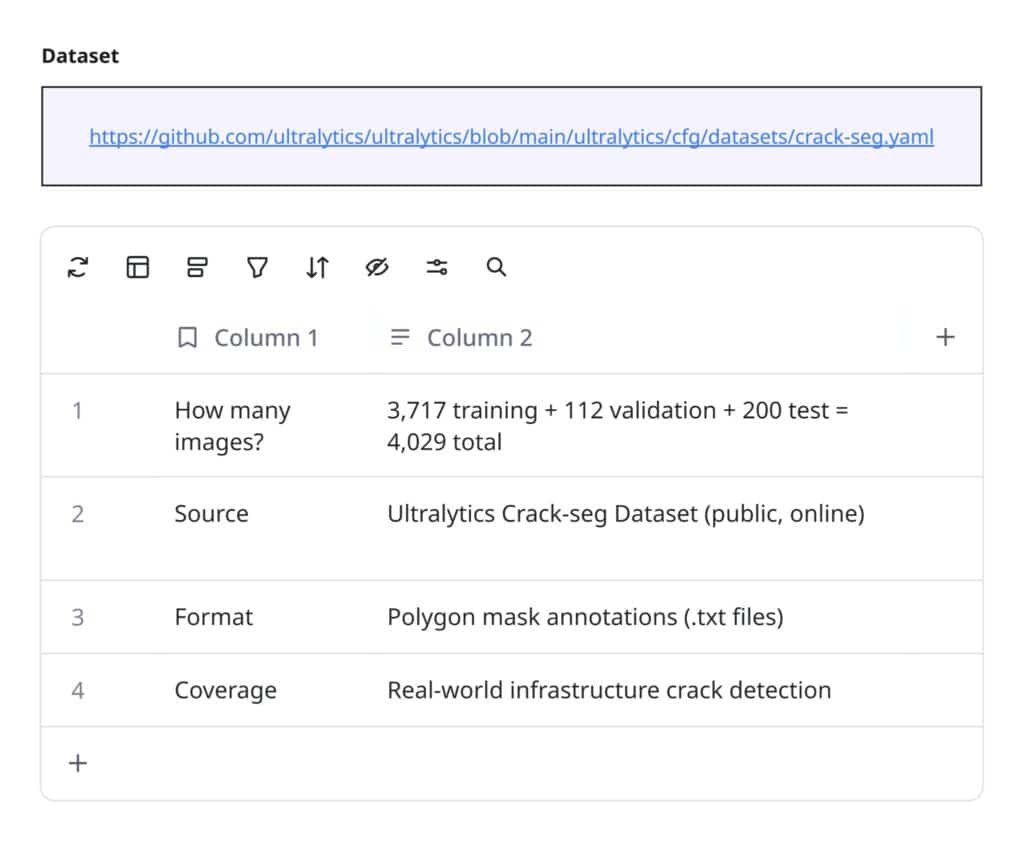

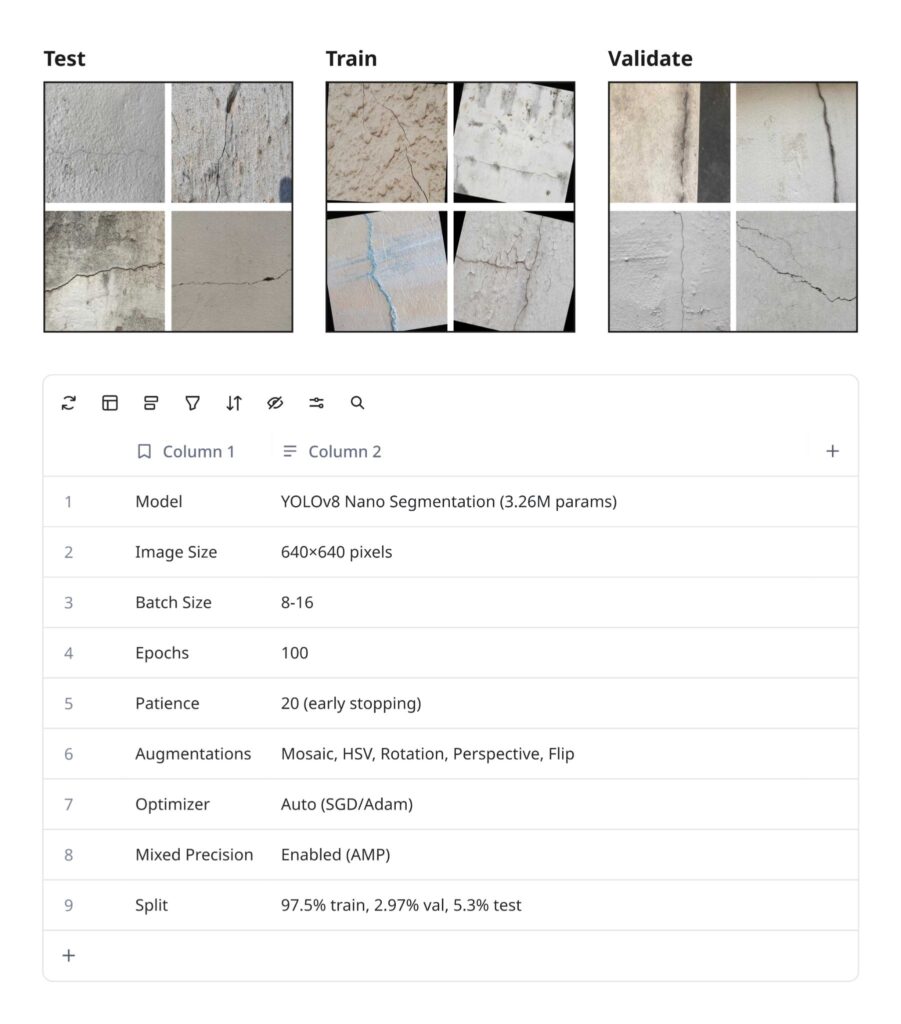
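As a rough illustration of the closed loop in the mission statement (detect, repair, verify), the control flow could be sketched as below. This is a minimal sketch under stated assumptions: `detect`, `repair`, and `max_attempts` are hypothetical placeholders for the perception and printing stages, not the project's actual ROS2 nodes.

```python
from typing import Callable, List, Tuple

Point = Tuple[float, float]


def closed_loop_repair(
    detect: Callable[[], List[Point]],
    repair: Callable[[List[Point]], None],
    max_attempts: int = 3,
) -> bool:
    """Repeat detect -> repair until a detection pass finds no crack.

    `detect` returns the crack path (an empty list when no crack is seen).
    `repair` runs one repair pass along that path.
    Both are hypothetical stand-ins, injected so the loop can be tested.
    Returns True once a detection pass finds no crack (the verification step),
    or False if a crack is still detected after `max_attempts` repair passes.
    """
    for _ in range(max_attempts):
        path = detect()
        if not path:
            return True  # verification passed: nothing left to repair
        repair(path)
    # Final verification after the last allowed repair pass.
    return not detect()
```

Passing the stages in as functions keeps the decision logic visible and lets the loop be exercised without a camera or robot attached.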

Creating a YOLOv8 Model

We then began to build our crack detection model, starting with an initial set of 4000 images uploaded to Roboflow. The first output was the simplest possible one: a binary decision on whether or not a crack was present. In the future this could be refined into more nuanced categories, such as depth level and crack type, and extended to other defects such as dents or voids.
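The binary "crack or no crack" output can be thought of as a threshold on the model's detection confidences. The snippet below is only a sketch of that idea: the input format (a plain list of confidence scores) and the 0.5 default threshold are assumptions, not the Roboflow or YOLOv8 API.

```python
from typing import Iterable


def crack_present(confidences: Iterable[float], threshold: float = 0.5) -> bool:
    """Return True if at least one detection meets the confidence threshold.

    `confidences` is an assumed, simplified stand-in for the per-detection
    scores produced by the model on one image or frame.
    """
    return any(c >= threshold for c in confidences)
```

For example, `crack_present([0.2, 0.7])` is `True`, while an image with no detections, `crack_present([])`, is `False`.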

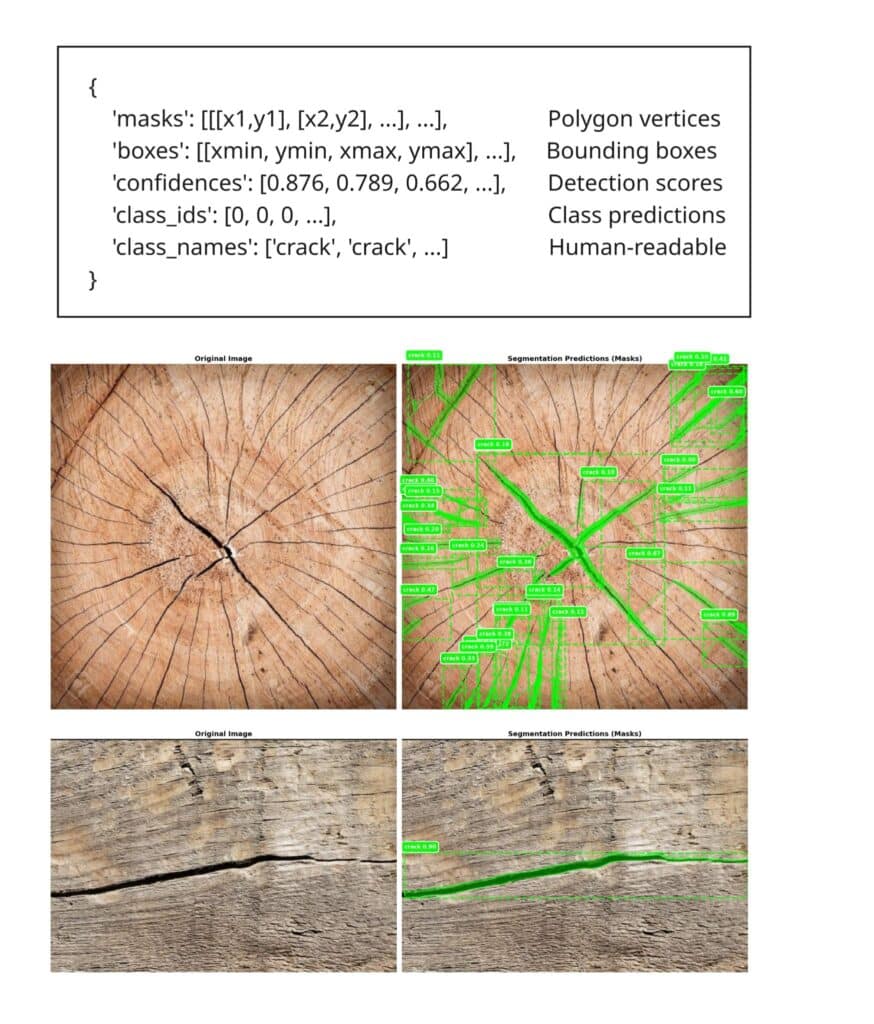

ROS2 Approach

Once we had a working model that could detect cracks in real time, we were able to start developing our ROS pipeline. This involved taking the camera feed and integrating our ML model into it. The model then produced the mask, from which we could extract centre points and an accurate path for the robot to follow.
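To make the mask-to-path step concrete, here is a minimal sketch of one simple way to get centre points from a binary mask: take the mean row of the mask pixels in each column. This is an assumption for illustration (it presumes the crack runs roughly left to right in the image); the actual pipeline may use a different method, such as skeletonisation.

```python
from typing import List, Sequence, Tuple


def centreline_points(mask: Sequence[Sequence[int]]) -> List[Tuple[int, float]]:
    """Return one (column, mean_row) centre point per column that has mask pixels.

    `mask` is a 2D grid of 0/1 values (rows of pixels). Points come back
    ordered by column, so they can be read as a left-to-right path.
    """
    if not mask:
        return []
    points: List[Tuple[int, float]] = []
    for x in range(len(mask[0])):
        # Row indices of all crack pixels in this column.
        rows = [y for y, row in enumerate(mask) if row[x]]
        if rows:
            points.append((x, sum(rows) / len(rows)))
    return points


# Tiny example mask: a crack two pixels wide, drifting downwards.
example_mask = [
    [0, 1, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 0],
]
example_path = centreline_points(example_mask)  # [(1, 1.0), (2, 2.0)]
```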

Using RViz (the ROS visualisation tool), we were able to confirm in a digital twin that the robot was following the correct mask path and that it was not assuming any strange joint configurations or kinematic solutions which could cause it to collide.

We were then able to link to the URE-1O and, with a raised Z-axis, confirm in a dry run that the real robot followed the same path.
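The raised Z-axis dry run amounts to adding a safety offset to every waypoint so the tool traces the path above the surface. A minimal sketch, assuming a flat surface and a single pixel-to-millimetre scale factor; the names `mm_per_px`, `surface_z_mm`, and `dry_run_offset_mm` are hypothetical, and a real setup would also need a camera-to-robot calibration.

```python
from typing import Iterable, List, Tuple


def to_waypoints(
    points_px: Iterable[Tuple[float, float]],
    mm_per_px: float,
    surface_z_mm: float = 0.0,
    dry_run_offset_mm: float = 0.0,
) -> List[Tuple[float, float, float]]:
    """Convert image-space path points to (x, y, z) waypoints in millimetres.

    Setting `dry_run_offset_mm` above zero lifts the whole path off the
    surface, so the motion can be checked without touching the workpiece.
    """
    z = surface_z_mm + dry_run_offset_mm
    return [(x * mm_per_px, y * mm_per_px, z) for x, y in points_px]
```

Calling `to_waypoints(path, 0.5, dry_run_offset_mm=50.0)` would trace the same XY path 50 mm above the surface; setting the offset back to zero gives the repair pass.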

Grasshopper Approach

As we were initially unsure whether we would be able to use the ROS pipeline, we also developed a Grasshopper simulation using the same logic: taking the centre points from the mask to produce a path.

However, as Grasshopper cannot directly import ML models, we used Flask, a very lightweight Python web framework, as a middleman. This allowed us to use HTTP as a common language between the ML model and Grasshopper, and to import the mask curve into Grasshopper for each case.
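The exchange over HTTP relies on both sides agreeing on a message format. The sketch below shows one possible JSON contract for the path points, using only the standard library; the `"points"` key and the function names are assumptions for illustration. On the server side, a Flask route could return the encoded string as its response body, and a Python component in Grasshopper could decode it before building the curve.

```python
import json
from typing import Iterable, List, Tuple


def encode_path(points: Iterable[Tuple[float, float]]) -> str:
    """Serialise centre points to a JSON string (server side)."""
    return json.dumps({"points": [[float(x), float(y)] for x, y in points]})


def decode_path(body: str) -> List[Tuple[float, float]]:
    """Parse the JSON string back into (x, y) tuples (Grasshopper side)."""
    data = json.loads(body)
    return [(float(x), float(y)) for x, y in data["points"]]
```

Keeping the payload to plain lists of numbers means the receiving side needs nothing beyond a JSON parser.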

(Because of how the video was clipped, the first few robots are shown moving to the start point, so they appear to miss the path; they did then follow the correct path in these cases.)