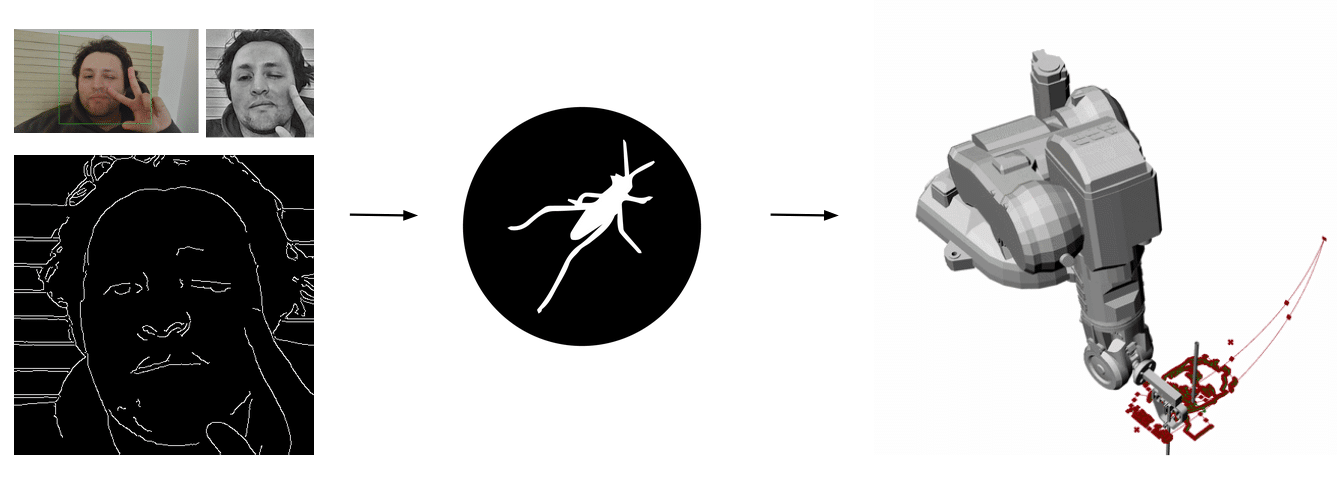

This project explores a robotic system that translates digital vision into physical matter through the constraint of continuity. Rather than behaving like a conventional plotter that freely lifts and repositions its pen, the system confronts a more complex challenge: it must interpret image topology and compute a path that allows the entire drawing to be executed in a single, uninterrupted line. By solving this continuous-line constraint, the robot transforms pixels into motion, data into choreography, and vision into a fluid physical trace, revealing how computational logic can shape material expression.

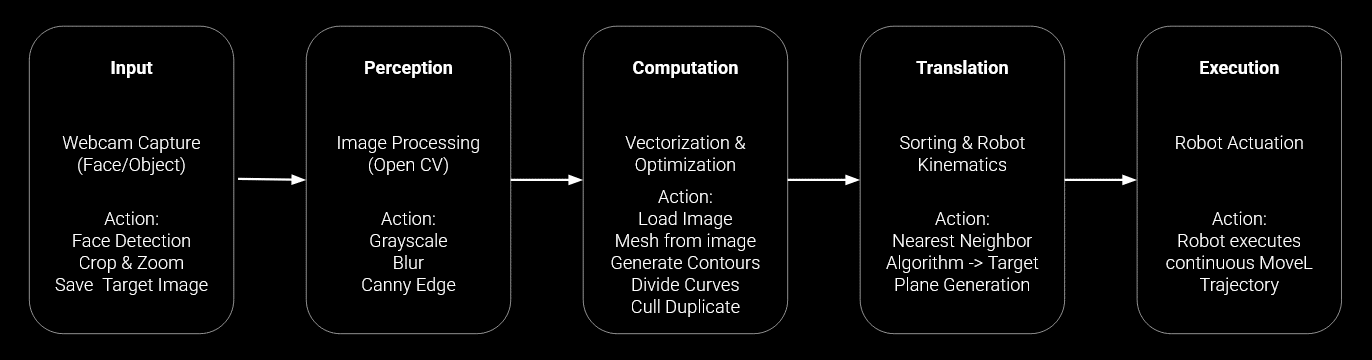

Workflow

This workflow outlines a complete pipeline that transforms visual input into a continuous robotic drawing. It begins with Input, where a webcam captures a face or object and the system detects it, crops it, and saves the target image. In the Perception stage, the system applies OpenCV image processing techniques (grayscale conversion, blur filtering, and Canny edge detection) to extract meaningful structural information. During Computation, the image is vectorized and optimized by generating contours, dividing curves, removing duplicates, and preparing a drawable mesh. The Translation phase then reorganizes this geometry through nearest-neighbor sorting and robot kinematic mapping, generating feasible target planes and a motion path. Finally, in Execution, the robot follows a single continuous trajectory, physically materializing the processed vision without lifting the drawing tool and closing the loop between perception, computation, and embodied action.
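The duplicate removal and nearest-neighbor sorting steps can be illustrated with a minimal sketch. It assumes each contour is already a list of `(x, y)` points and uses a greedy strategy: from the current pen position, it jumps to the closest endpoint of any unused contour and reverses that contour if its end is nearer than its start. The function names `dedupe` and `chain_contours` are hypothetical, and the project's actual solver may differ.

```python
import math


def dedupe(points, tol=1e-9):
    """Drop consecutive points that coincide within a tolerance."""
    out = []
    for p in points:
        if not out or math.dist(p, out[-1]) > tol:
            out.append(p)
    return out


def chain_contours(contours, start=(0.0, 0.0)):
    """Greedily join polylines into one continuous path.

    At each step the unused contour with the endpoint closest to the
    current pen position is appended, reversed if its end is the closer one.
    """
    remaining = [dedupe(c) for c in contours if c]
    path = []
    pos = start
    while remaining:
        best_i, best_rev, best_d = 0, False, math.inf
        for i, c in enumerate(remaining):
            d_start = math.dist(pos, c[0])
            d_end = math.dist(pos, c[-1])
            if d_start < best_d:
                best_i, best_rev, best_d = i, False, d_start
            if d_end < best_d:
                best_i, best_rev, best_d = i, True, d_end
        c = remaining.pop(best_i)
        if best_rev:
            c = c[::-1]
        path.extend(c)
        pos = path[-1]
    # Joining contours can create repeated points at the seams.
    return dedupe(path)


contours = [[(10, 0), (10, 5)], [(0, 0), (5, 0)], [(9, 0), (6, 0)]]
print(chain_contours(contours))
```

Because the pen never lifts, the jumps between contours are drawn too. A greedy ordering keeps those connecting strokes short but does not guarantee the shortest overall path.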

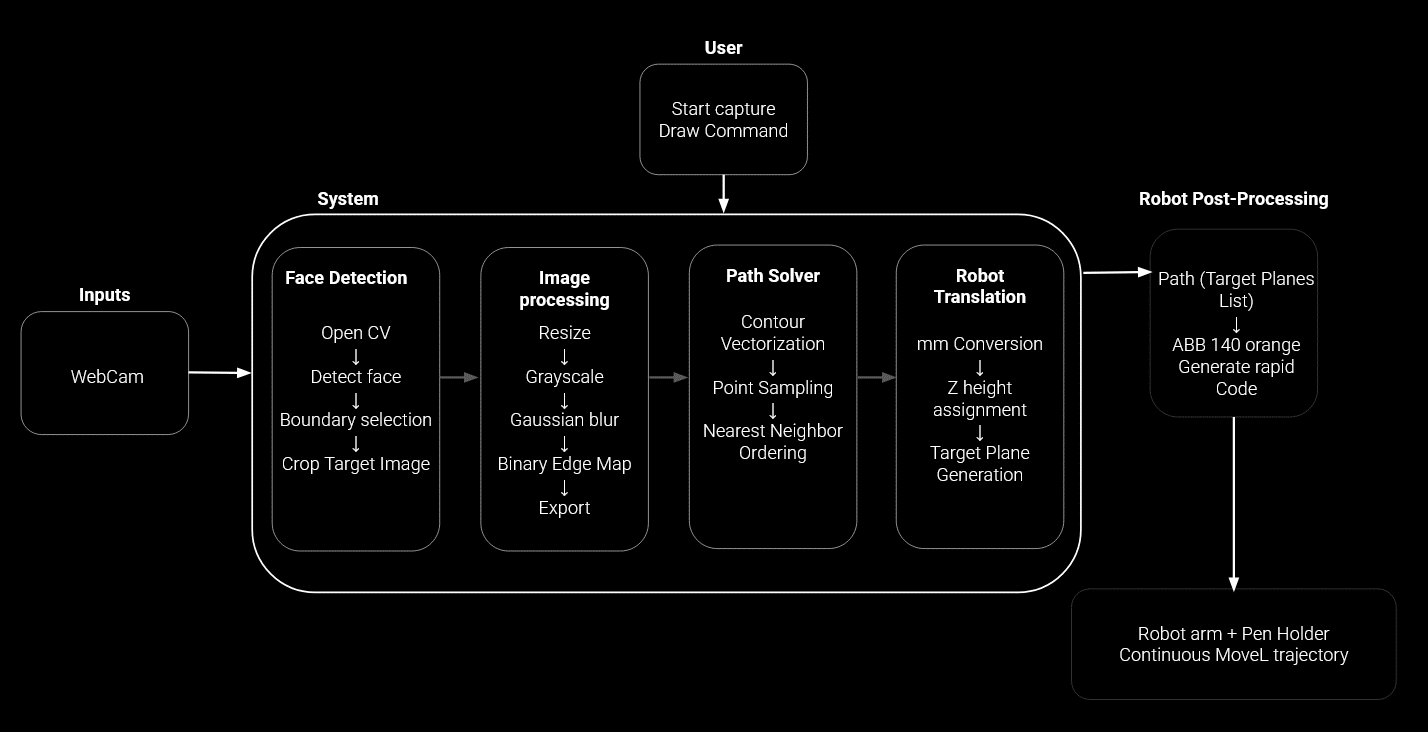

Algorithmic Process

This system defines a seamless pipeline that transforms a simple user action into a continuous robotic drawing. Once the user initiates capture and sends the draw command, the webcam feeds live input into the system, where OpenCV detects the face, selects its boundaries, and crops the target image. The image is then refined through resizing, grayscale conversion, Gaussian blur, and binary edge extraction to reveal its essential structure. From there, the path solver translates visual information into geometry by vectorizing contours, sampling points, and ordering them with a nearest-neighbor strategy to preserve continuity. The resulting path is mapped into robotic space via millimeter scaling, Z-height assignment, and target plane generation. In the final stage, the trajectory is converted into ABB RAPID code and executed by the robotic arm as a smooth, uninterrupted sequence of MoveL motions, turning digital perception into a fluid physical trace without ever breaking the line.
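The last two stages (millimeter scaling with Z-height assignment, then RAPID generation) can be sketched as follows. This is an illustration under stated assumptions, not the project's actual exporter: the function names, paper size, margin, speed `v100`, zone `z1`, `tool0`, `wobj0`, and the zeroed robot configuration are all placeholder values, and the y-axis flip assumes image rows grow downward while the work object's y-axis grows upward.

```python
def pixels_to_mm(path_px, image_size_px, paper_size_mm, margin_mm=10.0):
    """Uniformly scale pixel coordinates into a paper area given in mm."""
    w_px, h_px = image_size_px
    w_mm, h_mm = paper_size_mm
    scale = min((w_mm - 2 * margin_mm) / w_px, (h_mm - 2 * margin_mm) / h_px)
    # Assumption: flip y so the drawing is not mirrored on the paper.
    return [(margin_mm + x * scale, margin_mm + (h_px - y) * scale)
            for x, y in path_px]


def to_rapid(path_mm, z_draw=0.0, quat=(0.0, 1.0, 0.0, 0.0),
             speed="v100", zone="z1", tool="tool0", wobj="wobj0"):
    """Emit a RAPID module with one MoveL per point, all at the same Z."""
    rot = ",".join(f"{q:.6f}" for q in quat)
    lines = ["MODULE ContinuousLine", "  PROC main()"]
    for x, y in path_mm:
        # robtarget = [position, orientation, configuration, external axes]
        target = (f"[[{x:.2f},{y:.2f},{z_draw:.2f}],[{rot}],"
                  "[0,0,0,0],[9E9,9E9,9E9,9E9,9E9,9E9]]")
        lines.append(f"    MoveL {target}, {speed}, {zone}, {tool}\\WObj:={wobj};")
    lines += ["  ENDPROC", "ENDMODULE"]
    return "\n".join(lines)


path_mm = pixels_to_mm([(0, 0), (100, 100)], (100, 100), (120, 120))
print(to_rapid(path_mm))
```

Keeping every target at the same `z_draw` is what makes the drawing a single stroke: no instruction in the program ever raises the tool.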

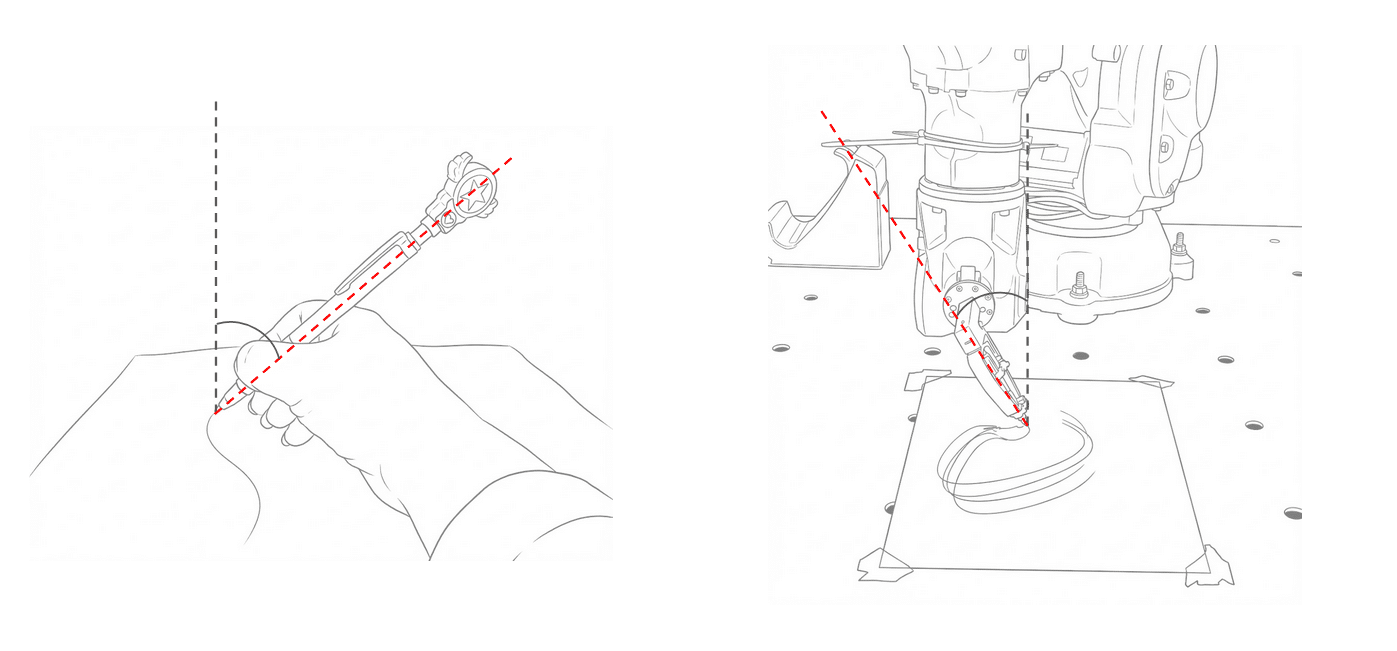

Considerations for the Robot

To achieve fluid, simple strokes, the robot’s end-effector is programmed to mimic the natural human drawing angle of 45° to 60°.
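One way to express such a tilt as a robot orientation is to compose two rotations into a quaternion. The sketch below assumes the angle is measured between the pen axis and the paper plane, that the tool's z-axis runs along the pen, and that the tilt happens about the work object's y-axis; the helper names are hypothetical. Quaternions are written in `(w, x, y, z)` order, which matches the `[q1, q2, q3, q4]` order of a RAPID orientation.

```python
import math


def quat_mul(a, b):
    """Hamilton product of two quaternions in (w, x, y, z) order."""
    w1, x1, y1, z1 = a
    w2, x2, y2, z2 = b
    return (
        w1 * w2 - x1 * x2 - y1 * y2 - z1 * z2,
        w1 * x2 + x1 * w2 + y1 * z2 - z1 * y2,
        w1 * y2 - x1 * z2 + y1 * w2 + z1 * x2,
        w1 * z2 + x1 * y2 - y1 * x2 + z1 * w2,
    )


def pen_quaternion(angle_to_paper_deg):
    """Orientation for a pen held at the given angle to the paper plane."""
    tilt = math.radians(90.0 - angle_to_paper_deg)  # tilt away from vertical
    q_down = (0.0, 1.0, 0.0, 0.0)  # 180 deg about x: tool z points at paper
    q_tilt = (math.cos(tilt / 2), 0.0, math.sin(tilt / 2), 0.0)  # about y
    return quat_mul(q_tilt, q_down)  # point down first, then tilt


def tool_axis(q):
    """Direction of the tool z-axis after applying quaternion q."""
    w, x, y, z = q
    return (2 * (x * z + w * y), 2 * (y * z - w * x), 1 - 2 * (x * x + y * y))


q = pen_quaternion(60.0)
ax = tool_axis(q)
print(q, math.degrees(math.asin(-ax[2])))
```

The printed elevation recovers the requested angle, which is a quick check that the composed rotation tilts the pen as intended before it is sent to the robot.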

Results

The results reveal not just robotic precision, but a shared moment where algorithm, machine, and human expression converge into a single continuous line.

Next Steps

Moving forward, the project focuses on enhancing both technical precision and artistic expression. Path optimization algorithms will be refined to reduce unnecessary crossings and produce smoother, more efficient continuous strokes. Vision robustness will be strengthened through standardized lighting conditions and advanced preprocessing techniques such as CLAHE to ensure consistent facial feature detection. To expand expressive potential, image darkness can be mapped to the Z-axis, enabling dynamic line weight through controlled pen pressure. An interactive user interface will allow real-time preview and detail adjustment, making the system more intuitive and participatory. Additionally, experimenting with different end-effectors such as brushes or engraving tools will open new material possibilities. Finally, integrating a lightweight generative AI model for stylistic preprocessing will enable the transformation of portraits into distinct artistic interpretations before path computation, deepening the dialogue between computation and creativity.
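As a rough illustration of the proposed darkness-to-Z mapping, the sketch below linearly converts an 8-bit grayscale value into a Z offset, so darker pixels push the pen further down. The depth range (0 mm for white, -1.5 mm for black) and the function names are hypothetical placeholders; real values would depend on the pen, the paper, and any compliance in the tool holder.

```python
def darkness_to_z(gray, z_light=0.0, z_heavy=-1.5):
    """Map a grayscale value (0 = black, 255 = white) to a Z offset in mm."""
    gray = min(255, max(0, gray))        # clamp to the valid 8-bit range
    darkness = 1.0 - gray / 255.0        # 0.0 for white, 1.0 for black
    return z_light + darkness * (z_heavy - z_light)


def with_pressure(path_mm, gray_values):
    """Attach a Z offset to each (x, y) point from its sampled gray value."""
    return [(x, y, darkness_to_z(g)) for (x, y), g in zip(path_mm, gray_values)]


print(with_pressure([(10.0, 10.0), (20.0, 10.0)], [255, 0]))
```

The pen stays in contact across the whole range, so the line remains continuous while its weight varies.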