Team member(s): Elias, Rafik, Seid, Leo and Dhruvil

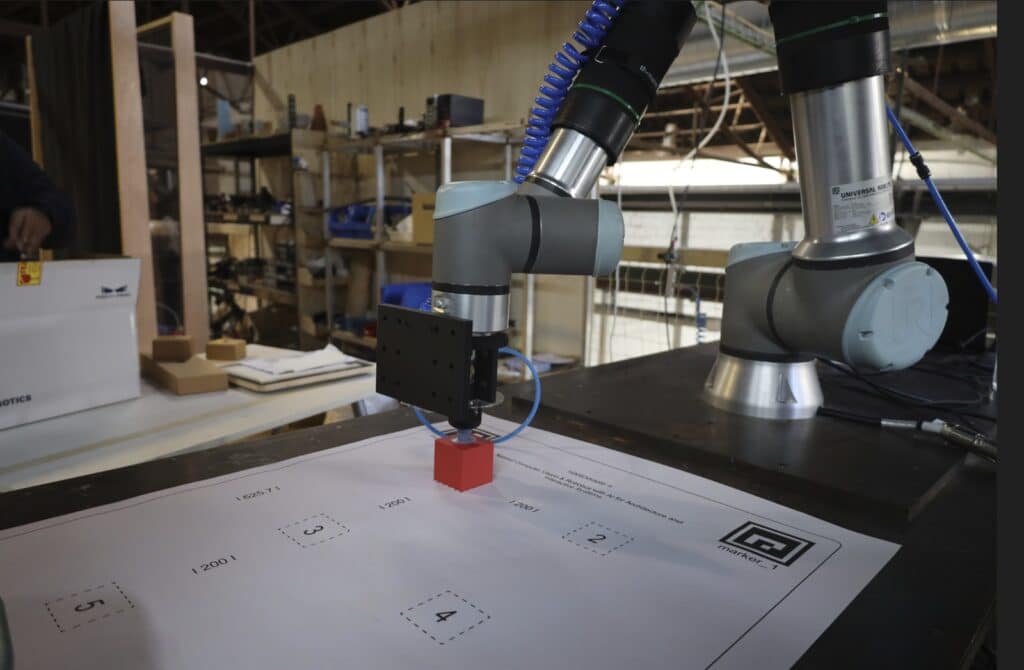

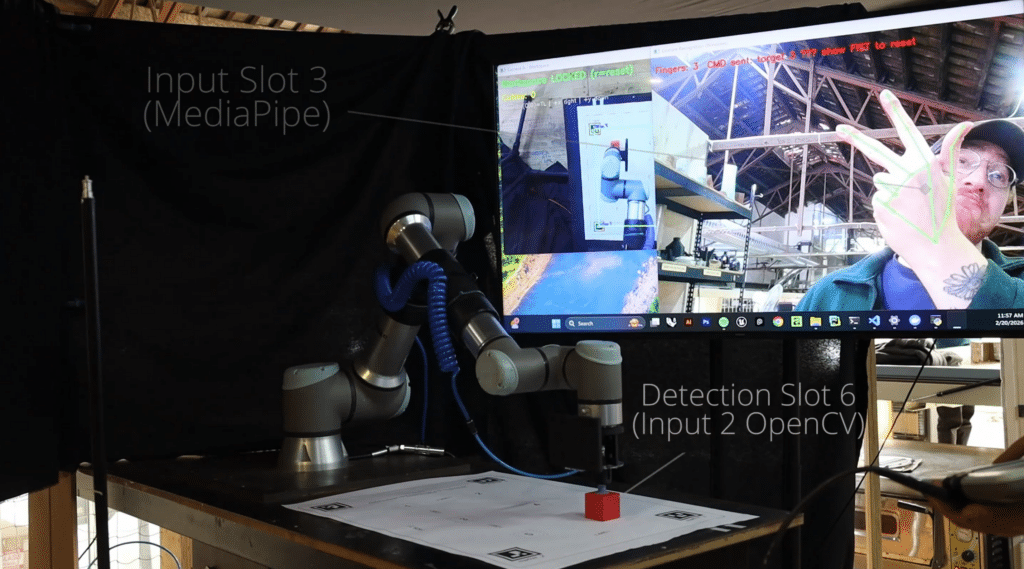

This blog post presents a vision-based gesture-controlled robotic manipulation system developed for intuitive human-robot interaction. The project replaces traditional robot programming interfaces — such as teach pendants and GUIs — with a natural hand gesture pipeline, allowing a human operator to direct a UR10e industrial robotic arm through pick-and-place tasks using vision alone.

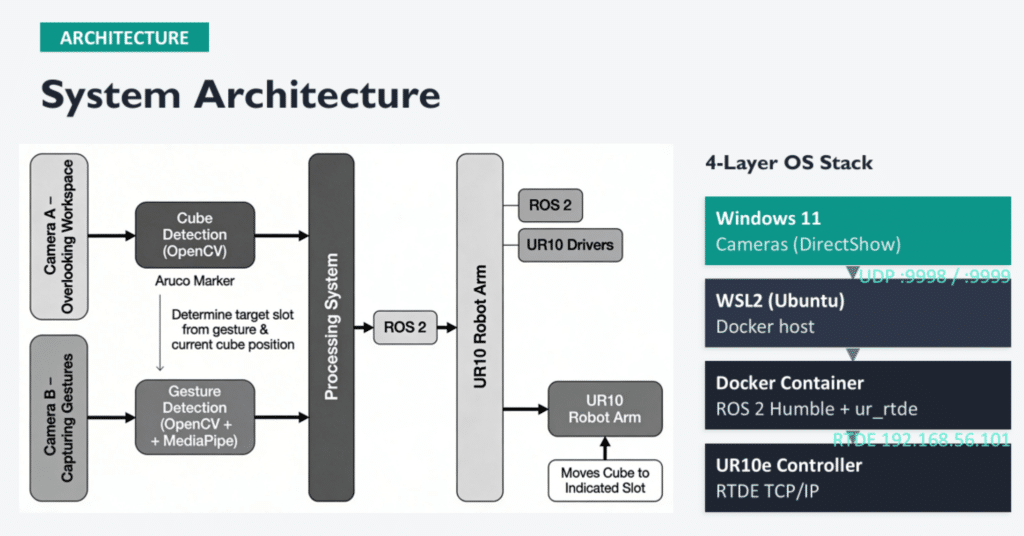

The system is built around three tightly integrated layers:

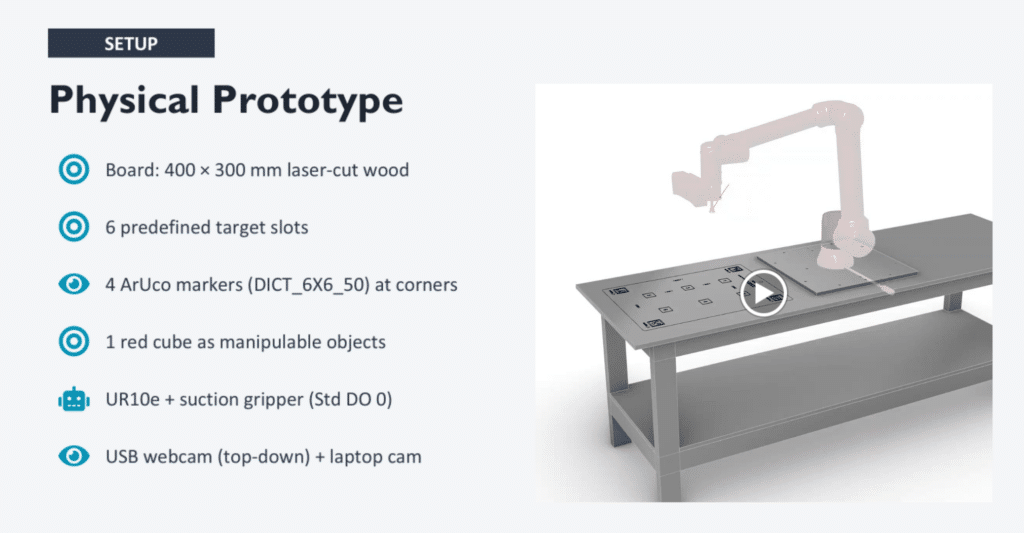

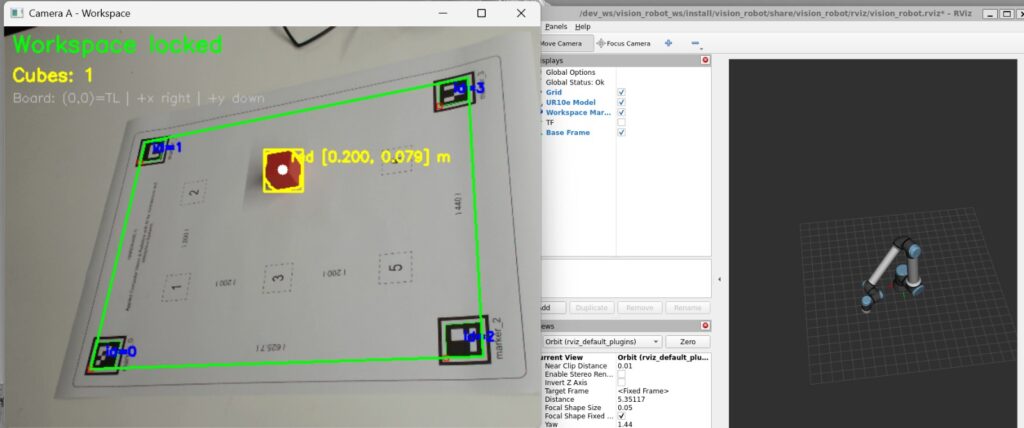

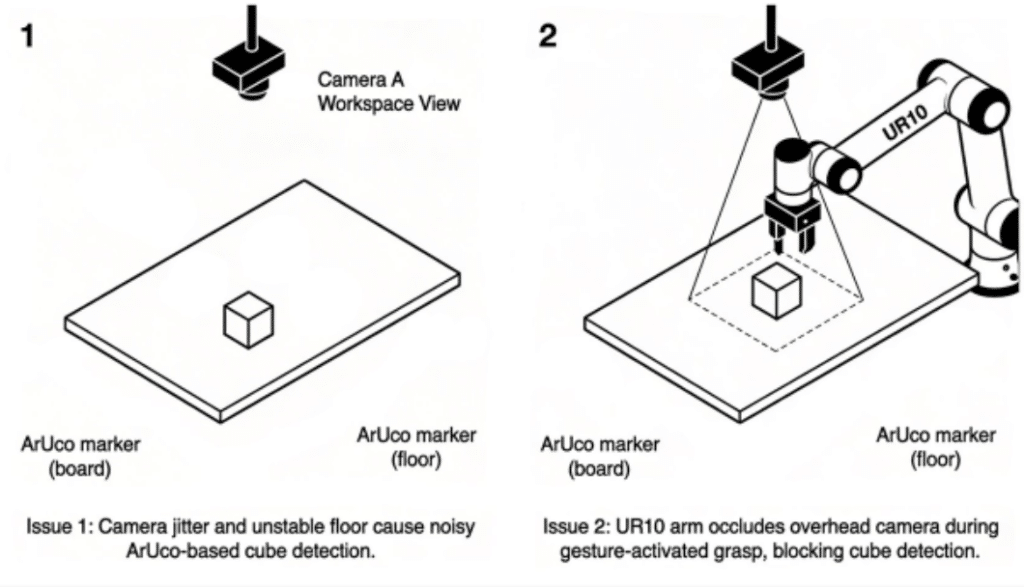

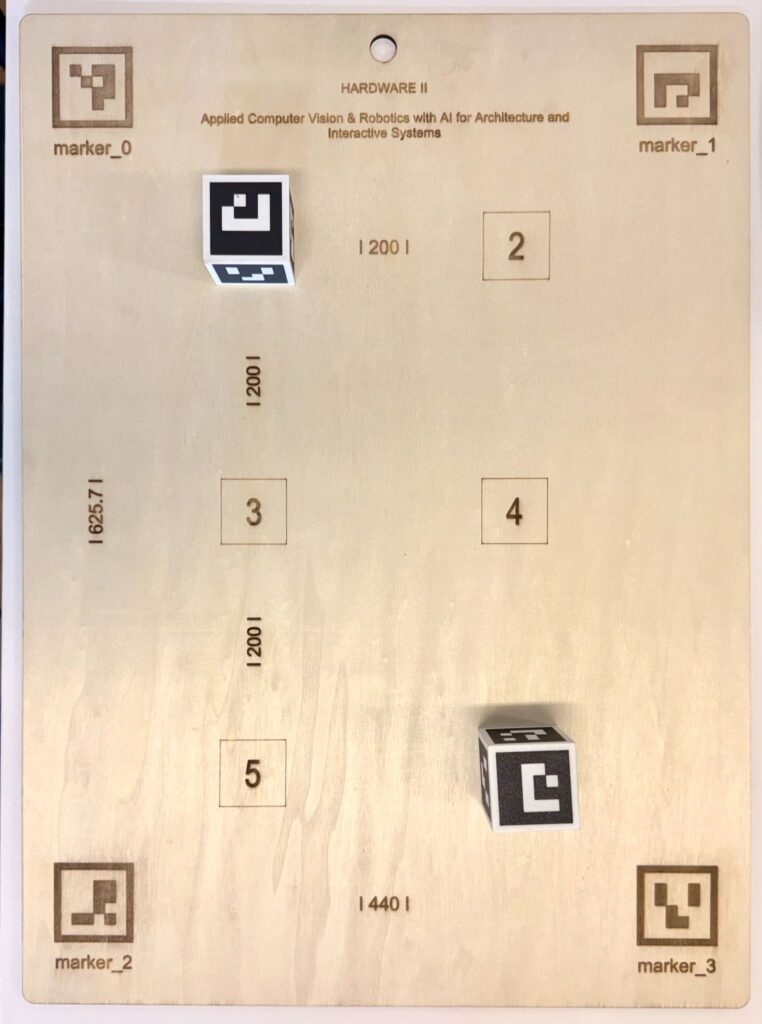

- Workspace Perception: An overhead USB webcam (Camera A) detects four ArUco markers on a 400 × 300 mm laser-cut board and uses them to establish a homography-based board coordinate frame. Red cubes are segmented by HSV color thresholding. Their pixel centroids are mapped through the homography to board coordinates in meters and then transformed into robot-frame positions. The homography is locked after the first successful detection to prevent jitter, and cube positions are smoothed with an exponential moving average (EMA) filter and snapped to 5 mm increments.

- Gesture Recognition: A second camera (Camera B) tracks the operator's hand with Google's MediaPipe Hands framework. A finger-counting algorithm classifies gestures from 0 to 6, and the recognized gesture selects the target placement slot. A 20-frame stabilization buffer suppresses false detections, and a fist reset gate enforces intentional command sequencing: the operator must show a fist between consecutive commands.
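The HSV segmentation step of the perception layer can be sketched in pure Python. This is a minimal illustration under stated assumptions, not the project's OpenCV code. It converts each pixel to an OpenCV-style HSV scale (H from 0 to 179, S and V from 0 to 255) and tests it against two red hue bands, because red wraps around hue 0. It then averages the coordinates of the matching pixels to get the centroid. The threshold values in `RED_BANDS` and all function names are assumptions. With OpenCV, the same idea is usually written with `cv2.cvtColor`, `cv2.inRange` and `cv2.moments`.

```python
import colorsys

# Assumed thresholds on an OpenCV-style HSV scale (H: 0-179, S and V: 0-255).
# Red wraps around hue 0, so two hue bands are needed.
RED_BANDS = [((0, 120, 70), (10, 255, 255)),
             ((170, 120, 70), (179, 255, 255))]


def to_cv_hsv(rgb):
    """Convert an 8-bit (R, G, B) pixel to OpenCV-style integer HSV."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return round(h * 180) % 180, round(s * 255), round(v * 255)


def is_red(rgb):
    """True if the pixel falls inside either red band."""
    hsv = to_cv_hsv(rgb)
    return any(all(lo[i] <= hsv[i] <= hi[i] for i in range(3))
               for lo, hi in RED_BANDS)


def red_centroid(image):
    """Centroid (u, v) of red pixels in a list-of-rows RGB image, or None."""
    sum_u = sum_v = count = 0
    for v, row in enumerate(image):
        for u, pixel in enumerate(row):
            if is_red(pixel):
                sum_u += u
                sum_v += v
                count += 1
    return None if count == 0 else (sum_u / count, sum_v / count)


# Usage: a 4 x 4 grey image containing a 2 x 2 red patch.
grey, red = (40, 40, 40), (200, 20, 20)
image = [[red if 1 <= u <= 2 and 1 <= v <= 2 else grey for u in range(4)]
         for v in range(4)]
print(red_centroid(image))  # (1.5, 1.5)
```

A real detector would also reject blobs that are too small or too large for a cube before taking the centroid.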
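The pixel-to-board mapping can be illustrated with a direct linear transform (DLT). Four marker-to-board correspondences give eight linear equations for the eight unknown homography entries once the bottom-right entry is fixed to 1. The sketch below solves that system with Gaussian elimination. It then wraps the result in a hypothetical `LockedHomography` class that computes the matrix on the first detection and reuses it afterwards. Finally, it applies an assumed planar rigid transform, `board_to_robot`, whose origin and yaw are made-up values, to reach the robot frame. The marker pixel coordinates are illustrative. In practice, OpenCV's `cv2.findHomography` or `cv2.getPerspectiveTransform` would do the solving.

```python
import math


def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("degenerate marker configuration")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def homography_from_points(pixels, board):
    """3x3 homography H (H[2][2] = 1) mapping four pixel points to four board points."""
    A, b = [], []
    for (u, v), (X, Y) in zip(pixels, board):
        A.append([u, v, 1, 0, 0, 0, -u * X, -v * X])
        b.append(X)
        A.append([0, 0, 0, u, v, 1, -u * Y, -v * Y])
        b.append(Y)
    h = solve_linear(A, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(H, u, v):
    """Map pixel (u, v) to board (X, Y) in meters, including the perspective divide."""
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    return ((H[0][0] * u + H[0][1] * v + H[0][2]) / w,
            (H[1][0] * u + H[1][1] * v + H[1][2]) / w)


class LockedHomography:
    """Compute H on the first successful marker detection, then keep it fixed."""

    def __init__(self):
        self.H = None

    def update(self, marker_pixels, board_points):
        if self.H is None and marker_pixels is not None:
            self.H = homography_from_points(marker_pixels, board_points)
        return self.H


def board_to_robot(x, y, origin=(0.6, -0.2), yaw=math.radians(90)):
    """Planar rigid transform board -> robot base; origin and yaw are hypothetical."""
    c, s = math.cos(yaw), math.sin(yaw)
    return origin[0] + c * x - s * y, origin[1] + s * x + c * y


# Usage: hypothetical marker centers assumed to sit on the 0.4 m x 0.3 m board corners.
BOARD = [(0.0, 0.0), (0.4, 0.0), (0.4, 0.3), (0.0, 0.3)]
locked = LockedHomography()
H = locked.update([(100, 80), (540, 90), (530, 410), (110, 400)], BOARD)
bx, by = apply_homography(H, 320, 245)
print(board_to_robot(bx, by))
```

Locking the matrix trades adaptability for stability. If the camera or board is bumped after startup, the homography has to be re-detected.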
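EMA smoothing with 5 mm snapping, as described for the perception layer, could look like the sketch below. The post gives only the 5 mm step. The smoothing factor `ALPHA`, the class name `CubeSmoother` and the single-cube scope are assumptions. Tracking several cubes would need one filter per cube plus some association between frames.

```python
SNAP_STEP_M = 0.005  # 5 mm snapping step, as described in the post
ALPHA = 0.3          # assumed EMA smoothing factor (not given in the post)


def snap(value, step=SNAP_STEP_M):
    """Round a coordinate to the nearest multiple of `step`."""
    return round(value / step) * step


class CubeSmoother:
    """EMA filter for one cube's board position, with snapped output."""

    def __init__(self, alpha=ALPHA, step=SNAP_STEP_M):
        self.alpha = alpha
        self.step = step
        self.state = None  # unsnapped filtered (x, y) in meters

    def update(self, x, y):
        if self.state is None:
            self.state = (x, y)  # initialize on the first measurement
        else:
            sx, sy = self.state
            a = self.alpha
            self.state = (a * x + (1 - a) * sx, a * y + (1 - a) * sy)
        # Snap only the reported value so rounding does not feed back into the filter.
        return snap(self.state[0], self.step), snap(self.state[1], self.step)


smoother = CubeSmoother()
for measurement in [(0.1012, 0.2049), (0.0991, 0.2043), (0.1008, 0.2052)]:
    print(smoother.update(*measurement))
```

The filter state itself is kept unsnapped. Sub-millimeter noise therefore keeps moving the internal estimate, but the reported position only changes once the estimate crosses a 5 mm boundary.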
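The post does not describe its finger-counting rule. The sketch below shows one common heuristic on MediaPipe Hands' 21-landmark layout, assuming an upright hand facing the camera. A finger counts as extended when its tip lies above its PIP joint. The thumb counts when its tip is farther from the pinky MCP than its IP joint is. This heuristic yields 0 to 5 for a single hand. How the project forms its sixth gesture is not stated, so that case is not covered. With the legacy `mediapipe.solutions.hands` API, the input list would come from `[(p.x, p.y) for p in hand.landmark]` for each entry of `results.multi_hand_landmarks`.

```python
import math

# MediaPipe Hands landmark indices (21-point hand model).
FINGER_TIPS = (8, 12, 16, 20)   # index, middle, ring, pinky tips
FINGER_PIPS = (6, 10, 14, 18)   # matching PIP joints
THUMB_TIP, THUMB_IP, PINKY_MCP = 4, 3, 17


def count_fingers(landmarks):
    """Count extended fingers from 21 normalized (x, y) landmarks (y grows downward).

    Heuristic, assuming an upright hand facing the camera:
    - a finger is extended if its tip is above (smaller y than) its PIP joint;
    - the thumb is extended if its tip is farther from the pinky MCP than its IP joint is.
    """
    count = sum(1 for tip, pip in zip(FINGER_TIPS, FINGER_PIPS)
                if landmarks[tip][1] < landmarks[pip][1])
    if (math.dist(landmarks[THUMB_TIP], landmarks[PINKY_MCP])
            > math.dist(landmarks[THUMB_IP], landmarks[PINKY_MCP])):
        count += 1
    return count
```

Rules like these break down when the hand is tilted or rotated, which is one reason a multi-frame stabilization step is useful on top of them.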
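The 20-frame stabilization buffer and the fist reset gate can be combined in a small state machine. This is a sketch under two assumptions. First, "stable" here means the same count on every frame of the window, whereas the post does not say whether the project uses unanimity or a majority vote. Second, a fist is read as a count of 0 and serves only to re-arm the gate. The class name `GestureGate` is hypothetical.

```python
from collections import deque


class GestureGate:
    """Emit a command only after `window` identical consecutive counts,
    and re-arm only after a stable fist (count 0)."""

    def __init__(self, window=20):
        self.buffer = deque(maxlen=window)
        self.armed = True  # the first command does not need a preceding fist

    def update(self, count):
        self.buffer.append(count)
        stable = len(self.buffer) == self.buffer.maxlen and len(set(self.buffer)) == 1
        if not stable:
            return None
        if count == 0:       # stable fist: allow the next command
            self.armed = True
            return None
        if self.armed:       # stable non-zero count: emit once, then disarm
            self.armed = False
            return count
        return None          # same gesture still held; ignore until a fist
```

Holding a gesture therefore produces exactly one command. Repeating the same slot requires the operator to close the hand into a fist first.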

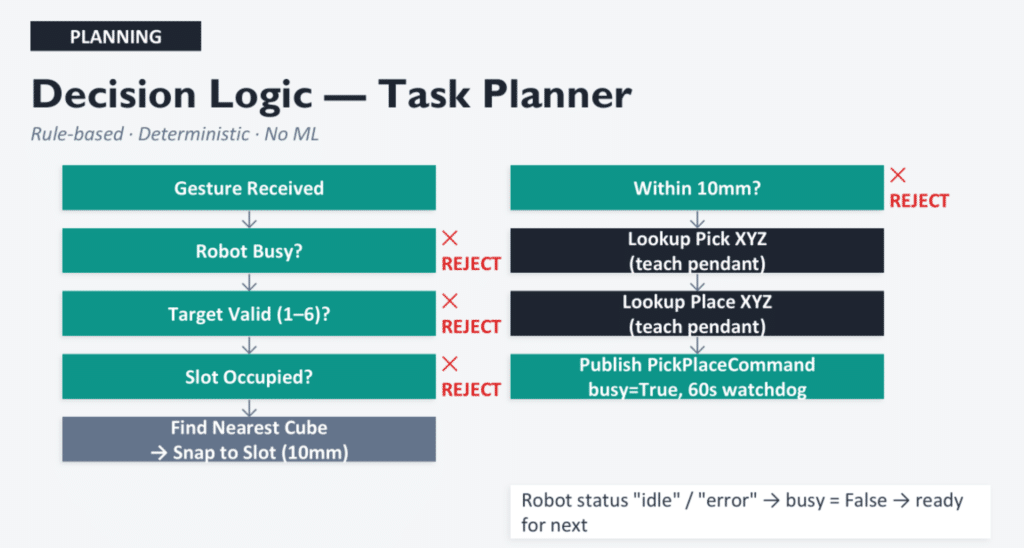

- ROS 2 Bridging and Robot Execution: Camera and gesture data are streamed over UDP from Windows to a ROS 2 Humble environment running in Docker on WSL2. Bridge nodes republish the UDP streams as ROS 2 topics. A rule-based decision node validates each command by checking the target range, slot occupancy, and cube proximity. A robot control node then executes an 8-step pick-and-place sequence over RTDE (Real-Time Data Exchange), including safe travel heights, suction gripper activation, and failure recovery.
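The Windows-to-ROS link can be pictured as JSON datagrams sent over UDP. The post does not give the message format, so the schema (for example `{"type": "gesture", "count": 3}`) and all function names below are assumptions. In the real bridge node, each decoded message would then be published on a ROS 2 topic, for example with an `rclpy` publisher. That part is omitted so the sketch runs without ROS installed. Inside Docker, the receiver would typically bind to `0.0.0.0` on a UDP port published from the container.

```python
import json
import socket

# Hypothetical message schema, e.g. {"type": "gesture", "count": 3}
# or {"type": "cubes", "positions": [[x, y], ...]}.


def encode(msg):
    """Serialize one message as a UTF-8 JSON datagram."""
    return json.dumps(msg).encode("utf-8")


def decode(datagram):
    """Parse one UTF-8 JSON datagram back into a dict."""
    return json.loads(datagram.decode("utf-8"))


def make_receiver(host="127.0.0.1", port=0, timeout=1.0):
    """UDP socket for the bridge side; port 0 lets the OS pick a free port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, port))
    sock.settimeout(timeout)
    return sock


def receive(sock, bufsize=65507):
    """Block (up to the socket timeout) for one datagram and decode it."""
    data, _sender = sock.recvfrom(bufsize)
    return decode(data)


if __name__ == "__main__":
    rx = make_receiver()
    tx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    tx.sendto(encode({"type": "gesture", "count": 3}), rx.getsockname())
    print(receive(rx))  # {'type': 'gesture', 'count': 3}
    tx.close()
    rx.close()
```

UDP keeps latency low and tolerates a dropped frame, which suits continuously refreshed camera and gesture data. It gives no delivery guarantee, so anything safety-relevant still has to be validated downstream.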
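The decision node's three checks can be sketched as a pure function that returns a verdict and a reason. The post names the checks but not their parameters. The slot range `VALID_TARGETS`, the clearance `MIN_SEPARATION_M` and the reading of "cube proximity" as "the cube to pick must not sit too close to another cube" are all hypothetical choices made for illustration.

```python
import math
from typing import Optional, Sequence, Set, Tuple

Point = Tuple[float, float]  # board or robot-frame (x, y) in meters

VALID_TARGETS = range(1, 7)  # hypothetical slot ids (count 0 is treated as the fist)
MIN_SEPARATION_M = 0.06      # hypothetical clearance between cube centers


def validate(target: int, occupied: Set[int], cube: Optional[Point],
             others: Sequence[Point] = ()) -> Tuple[bool, str]:
    """Rule-based command check; returns (accepted, reason)."""
    if target not in VALID_TARGETS:
        return False, "target out of range"
    if target in occupied:
        return False, "slot occupied"
    if cube is None:
        return False, "no cube detected"
    if any(math.dist(cube, other) < MIN_SEPARATION_M for other in others):
        return False, "cube too close to a neighbor"
    return True, "ok"
```

Returning an explicit reason string keeps the node explainable. A rejected command can be logged or published as-is, without trying to interpret a learned score.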
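The post does not list the eight steps of the pick-and-place sequence. The plan below is therefore one plausible decomposition, not the project's actual sequence. It approaches at a safe height, descends, switches suction on, lifts, travels, descends, releases, and retracts. If a step fails, it releases suction and retreats vertically. `SAFE_Z`, `PICK_Z` and the `do_step` callback are hypothetical. `do_step` stands in for the real RTDE commands, such as the motion and IO interfaces of a library like `ur_rtde`. Poses are reduced to (x, y, z); a real UR motion command also needs the tool orientation.

```python
from typing import Callable, List, Tuple

SAFE_Z = 0.25  # hypothetical safe travel height (m)
PICK_Z = 0.02  # hypothetical suction contact height (m)

Step = Tuple[str, object]  # ("move", (x, y, z)) or ("suction", on/off)


def plan_pick_place(cube_xy, slot_xy) -> List[Step]:
    """Eight-step plan that only travels horizontally at SAFE_Z."""
    cx, cy = cube_xy
    sx, sy = slot_xy
    return [
        ("move", (cx, cy, SAFE_Z)),   # 1. approach above the cube
        ("move", (cx, cy, PICK_Z)),   # 2. descend to contact
        ("suction", True),            # 3. grip
        ("move", (cx, cy, SAFE_Z)),   # 4. lift
        ("move", (sx, sy, SAFE_Z)),   # 5. travel above the slot
        ("move", (sx, sy, PICK_Z)),   # 6. descend to place
        ("suction", False),           # 7. release
        ("move", (sx, sy, SAFE_Z)),   # 8. retract
    ]


def run(plan: List[Step], do_step: Callable[[str, object], bool]) -> bool:
    """Execute steps in order; on failure, release and retreat upward."""
    last_xy = None
    for kind, arg in plan:
        if not do_step(kind, arg):
            # Recovery sketch: drop suction, then rise above the last commanded
            # x/y position (approximate if the failed step was itself a move).
            do_step("suction", False)
            if last_xy is not None:
                do_step("move", (last_xy[0], last_xy[1], SAFE_Z))
            return False
        if kind == "move":
            last_xy = arg[:2]
    return True
```

Keeping horizontal travel at a single safe height is a simple way to avoid sweeping the suction cup through cubes already on the board.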

Key engineering challenges included poor ArUco detection on laser-engraved markers (solved by switching to printed markers), gesture noise (addressed with frame-based stabilization and the fist reset protocol), and camera occlusion during robot motion. Occlusion was accepted as a limitation: the UR10e arm blocks the overhead camera mid-pick.

The system was successfully demonstrated end-to-end as a deterministic, explainable pipeline. Apart from MediaPipe's pretrained hand-tracking model, every decision is made by explicit rules rather than learned models, and each component can be replaced independently within the modular ROS 2 architecture.

Tags: Robotics, Computer Vision, Human-Robot Interaction, ROS 2, Gesture Recognition, UR10e, MediaPipe, OpenCV, Pick-and-Place

Vision-Based Gesture-Controlled Robotic Manipulation System is a project of IAAC, Institute for Advanced Architecture of Catalonia, developed in the MAAi & Master in Robotics & Advanced Construction 02 – 2025/2026 by the student(s) Elias, Rafik, Seid, Leo and Dhruvil during the course Hardware II with Hamid Peiro, Marita Georganta and Sasha Kraeva.