PROBLEM

Maximizing energy efficiency and occupant comfort in buildings through strategically designed and placed sun shades, which reduce solar heat gain, is becoming crucial in cities, especially in arid zones.

This raises an important question: how can new technologies help achieve these goals?

ML MODEL SELECTION

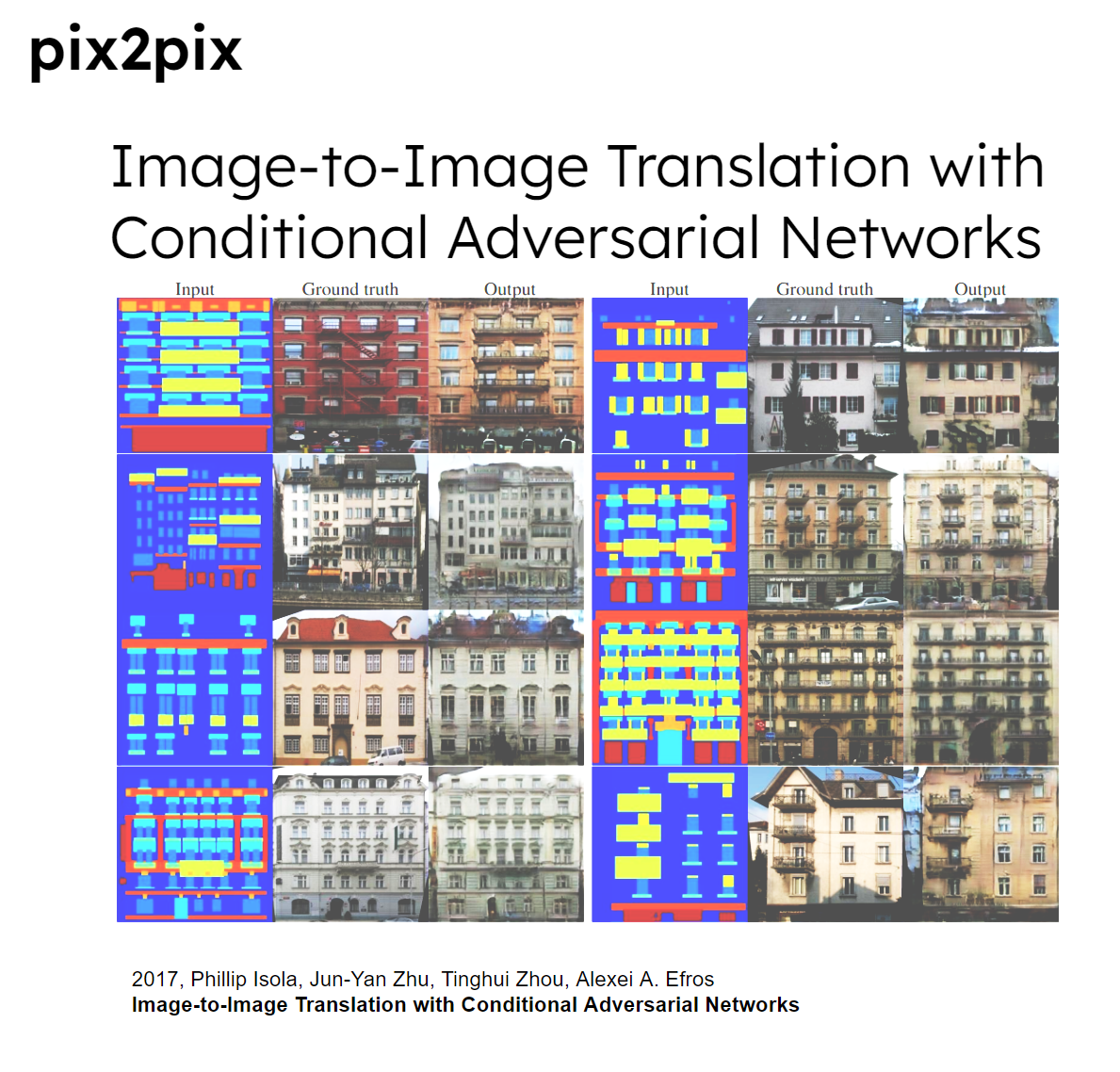

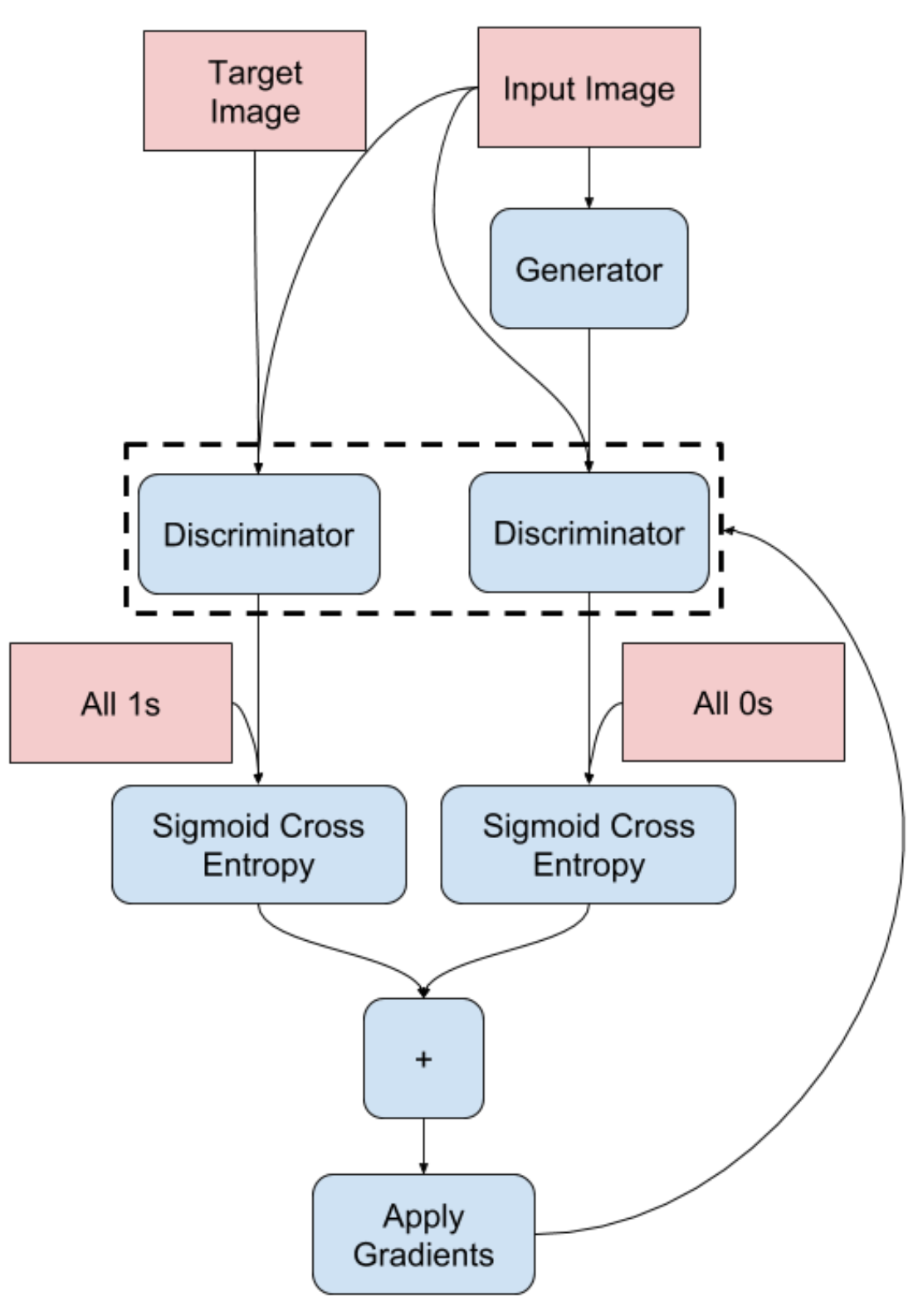

In 2017, a notable model for image-to-image translation with conditional adversarial networks was released, commonly known as pix2pix. These networks not only learn the mapping from input image to output image, but also learn a loss function to train this mapping.
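For reference, the pix2pix paper (Isola et al., "Image-to-Image Translation with Conditional Adversarial Networks") trains a generator G against a discriminator D using a conditional GAN loss plus an L1 term. This is the published objective, not a project-specific modification. Here x is the input image, y the target image, z the noise (supplied through dropout), and λ weights the L1 term (the paper uses λ = 100):

```latex
\mathcal{L}_{cGAN}(G, D) = \mathbb{E}_{x,y}\left[\log D(x, y)\right] + \mathbb{E}_{x,z}\left[\log\left(1 - D(x, G(x, z))\right)\right]

\mathcal{L}_{L1}(G) = \mathbb{E}_{x,y,z}\left[\lVert y - G(x, z) \rVert_1\right]

G^{*} = \arg\min_{G}\max_{D}\; \mathcal{L}_{cGAN}(G, D) + \lambda\, \mathcal{L}_{L1}(G)
```

The discriminator term is the learned loss mentioned above, while the L1 term keeps the generated image close to the target pixel by pixel.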

OBJECTIVE

Given pix2pix's strong performance in image-to-image translation, we aim to explore its ability to capture relationships in architecture using sparse rather than dense geometry, through a geometry-to-image-to-geometry translation algorithm developed inside Grasshopper. The goal is to build a model that takes building geometry as input and automatically generates sun shades on building façades.

LOCATION SELECTION

Cairo, Egypt, was chosen as the case study.

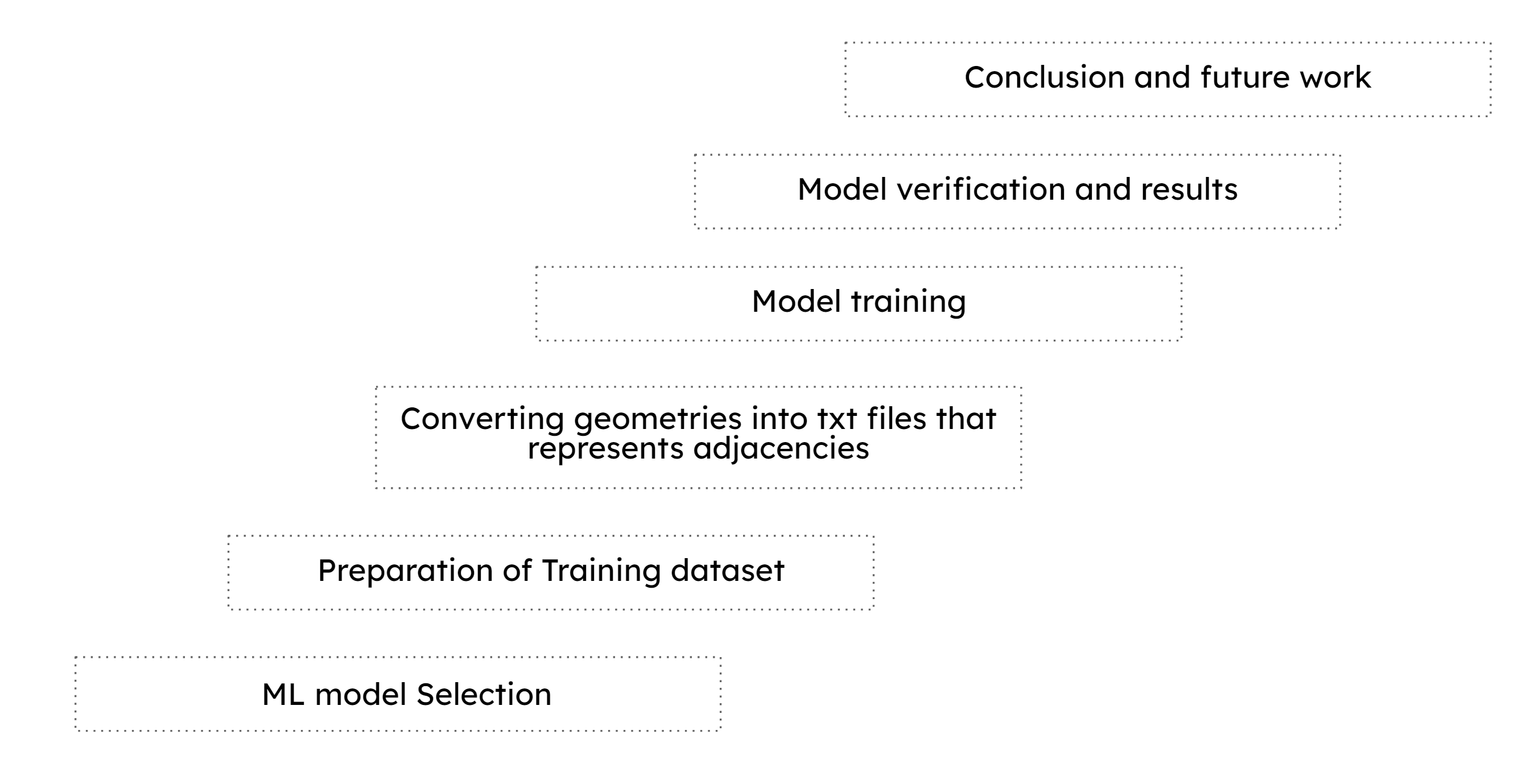

WORKFLOW

Dataset preparation

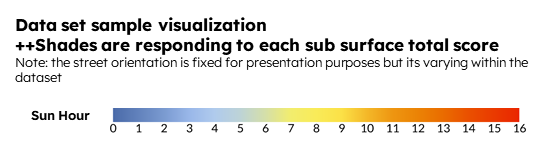

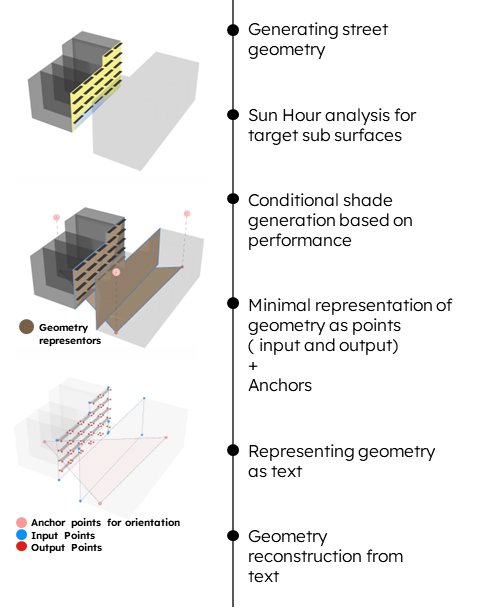

We generated the dataset from a 30-meter street segment lined with buildings on both sides. Building heights, street orientation, and street width vary randomly to ensure good coverage of the sample space.

We then use one side of the street as the target side and place the shades according to the performance of its sub-surfaces: the more sun hours a sub-surface receives, the larger its shade extrusion, up to a maximum of 2.5 meters.
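The placement rule above (more sun hours, deeper shade, capped at 2.5 m) can be sketched as a simple mapping. The linear scaling relative to the sunniest sub-surface and the function name `shade_depths` are assumptions for illustration; the text only states that the extrusion grows with sun hours up to 2.5 m.

```python
MAX_EXTRUSION_M = 2.5  # maximum shade extrusion stated in the text


def shade_depths(sun_hours: list[float], max_extrusion: float = MAX_EXTRUSION_M) -> list[float]:
    """Map each sub-surface's sun hours to a shade extrusion depth in meters.

    Assumption: a linear mapping in which the sub-surface with the most sun
    hours gets the full max_extrusion and one with zero sun hours gets none.
    """
    peak = max(sun_hours, default=0.0)
    if peak <= 0:
        # No sun on any sub-surface, so no shading is generated.
        return [0.0 for _ in sun_hours]
    return [max_extrusion * h / peak for h in sun_hours]


if __name__ == "__main__":
    print(shade_depths([0.0, 2.0, 4.0, 8.0]))  # [0.0, 0.625, 1.25, 2.5]
```

A non-linear curve (for example, a threshold below which no shade is added) would fit the same rule; the linear version is simply the easiest to read.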

Model Parameters

- Random building heights

- Street orientation

- Street width

- Street length fixed at 30 m

Model Limitations

- Maximum building height: 5 floors × 3.5 m = 17.5 m

- Maximum street length: 30 m

- Street orientation: 15-degree intervals from 0° to 345°

- Location: Cairo, Egypt
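A dataset generator that respects these parameters and limits could look like the sketch below. The street-width range, the number of buildings per side, integer floor counts, and the `StreetSample`/`sample_street` names are hypothetical; only the 30 m length, the 3.5 m floor height with at most 5 floors, and the 15-degree orientation steps come from the lists above.

```python
import random
from dataclasses import dataclass

FLOOR_HEIGHT_M = 3.5                         # from the limitations list
MAX_FLOORS = 5                               # max height = 5 floors * 3.5 m
STREET_LENGTH_M = 30.0                       # fixed street length
ORIENTATIONS_DEG = list(range(0, 360, 15))   # 0, 15, ..., 345

# Hypothetical values: the text does not state these.
WIDTH_RANGE_M = (8.0, 20.0)
BUILDINGS_PER_SIDE = 3


@dataclass
class StreetSample:
    orientation_deg: int
    width_m: float
    length_m: float
    left_heights_m: list[float]
    right_heights_m: list[float]


def sample_street(rng: random.Random) -> StreetSample:
    """Draw one random street configuration within the stated limits."""
    def heights() -> list[float]:
        # Whole floors between 1 and MAX_FLOORS (assumption: integer floor counts).
        return [rng.randint(1, MAX_FLOORS) * FLOOR_HEIGHT_M for _ in range(BUILDINGS_PER_SIDE)]

    return StreetSample(
        orientation_deg=rng.choice(ORIENTATIONS_DEG),
        width_m=round(rng.uniform(*WIDTH_RANGE_M), 2),
        length_m=STREET_LENGTH_M,
        left_heights_m=heights(),
        right_heights_m=heights(),
    )


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so the dataset can be regenerated
    dataset = [sample_street(rng) for _ in range(3000)]  # 3,000 samples, as used for training
    print(len(dataset), dataset[0])
```

Seeding the generator makes the dataset reproducible, which helps when comparing training runs.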

Input and output geometries

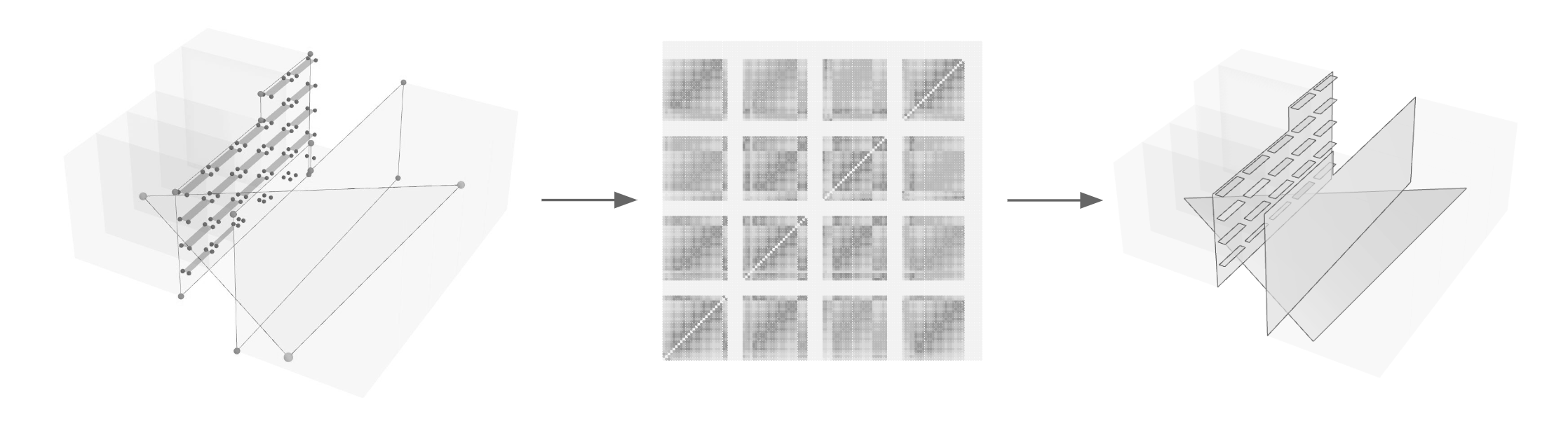

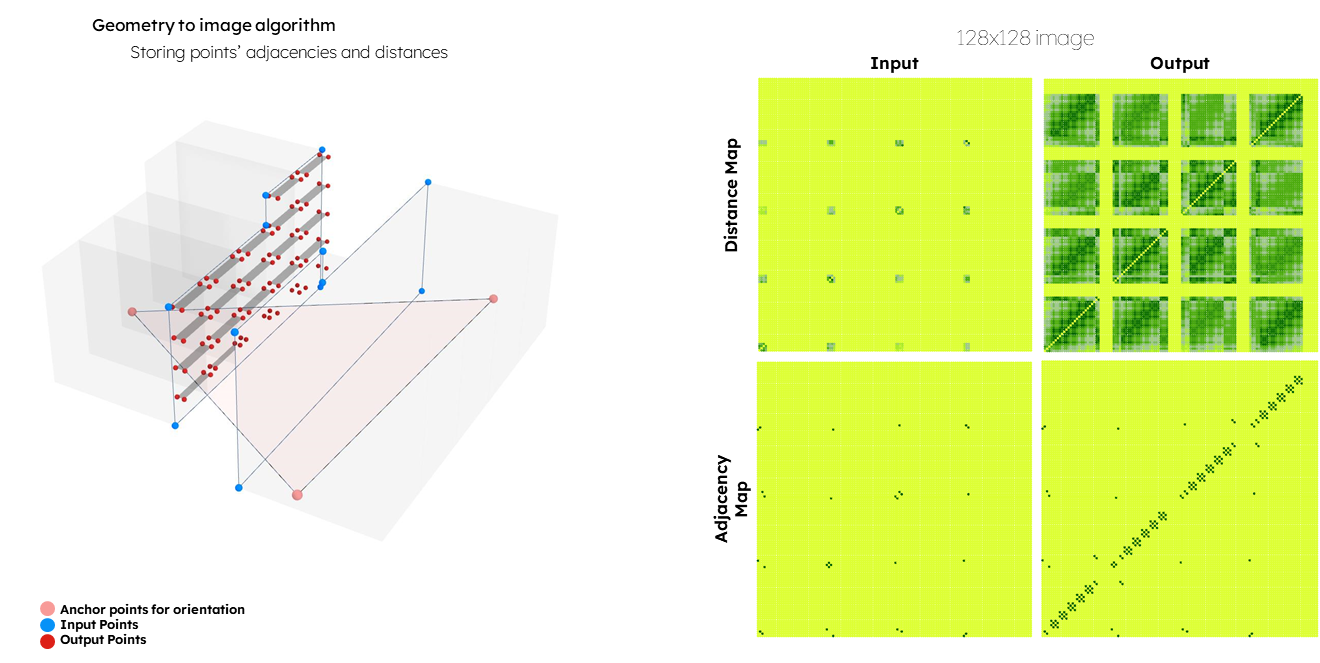

Next, we define the input and output geometries. We represent the geometry using only the street-facing surfaces, classified into input (“buildings”) and output (“shades”). A fixed triangle is also encoded in the input as an anchor for reorienting the geometry.
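Because a geometry rebuilt purely from stored distances has no fixed position or orientation, a known reference triangle is enough to place it back. A minimal sketch, assuming three non-collinear anchor points whose target coordinates are known: build an orthonormal frame on each copy of the triangle and map every point from one frame to the other. The function names `frame` and `reorient` are hypothetical, and this is not the Grasshopper definition used in the project.

```python
import math

Vec = tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def unit(a: Vec) -> Vec:
    n = math.sqrt(dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)


def frame(tri: tuple[Vec, Vec, Vec]) -> tuple[Vec, Vec, Vec, Vec]:
    """Orthonormal frame (origin, x, y, z) attached to a non-degenerate triangle."""
    a, b, c = tri
    x = unit(sub(b, a))
    z = unit(cross(sub(b, a), sub(c, a)))
    y = cross(z, x)
    return a, x, y, z


def reorient(points: list[Vec], tri_now: tuple[Vec, Vec, Vec],
             tri_ref: tuple[Vec, Vec, Vec]) -> list[Vec]:
    """Rigidly move points so that tri_now lands on tri_ref.

    Assumes the reconstruction is not mirrored: a rigid motion fixed by three
    points cannot undo a reflection of the whole shape.
    """
    o1, x1, y1, z1 = frame(tri_now)
    o2, x2, y2, z2 = frame(tri_ref)
    out = []
    for p in points:
        d = sub(p, o1)
        # Local coordinates of p in the reconstructed triangle's frame.
        u, v, w = dot(d, x1), dot(d, y1), dot(d, z1)
        # Same local coordinates, expressed in the reference triangle's frame.
        out.append(tuple(o2[i] + u * x2[i] + v * y2[i] + w * z2[i] for i in range(3)))
    return out
```

For example, if the reconstructed anchor is the reference triangle rotated 90° about the z-axis and shifted by (5, 5, 0), `reorient` maps the point (4, 6, 1) back to (1, 1, 1).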

The input and output points are then stored as .txt files that capture the adjacency and distances between points at the input and output levels. These .txt files are later converted into images for training the ML model.
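To illustrate how point relations can become an image, the sketch below stores the distance between each pair of adjacent points as one pixel of a square grayscale matrix and writes it as a plain PGM file using only the standard library. The one-pixel-per-pair layout, the 8-bit scaling by a fixed `max_dist`, and the function names are assumptions; the project's actual .txt format and image layout are not described here.

```python
import math


def distance_image(points, edges, max_dist):
    """Encode distances between adjacent points as an 8-bit grayscale matrix.

    Pixel (i, j) holds the distance between points i and j scaled to 0..255;
    non-adjacent pairs stay 0. Distances above max_dist are clipped.
    """
    n = len(points)
    img = [[0] * n for _ in range(n)]
    for i, j in edges:
        d = math.dist(points[i], points[j])
        v = round(min(d, max_dist) / max_dist * 255)
        img[i][j] = img[j][i] = v  # keep the matrix symmetric
    return img


def decode_distance(value, max_dist):
    """Inverse mapping from a pixel value back to a distance."""
    return value / 255 * max_dist


def write_pgm(img, path):
    """Write the matrix as a plain-text (P2) PGM image."""
    h, w = len(img), len(img[0])
    with open(path, "w") as f:
        f.write(f"P2\n{w} {h}\n255\n")
        for row in img:
            f.write(" ".join(str(v) for v in row) + "\n")


if __name__ == "__main__":
    pts = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
    img = distance_image(pts, [(0, 1), (1, 2), (0, 2)], max_dist=25.5)
    print(img)  # [[0, 30, 50], [30, 0, 40], [50, 40, 0]]
```

With 8-bit pixels, one gray level corresponds to `max_dist / 255` (0.1 m in the example), so the choice of `max_dist` sets how finely distances can be stored.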

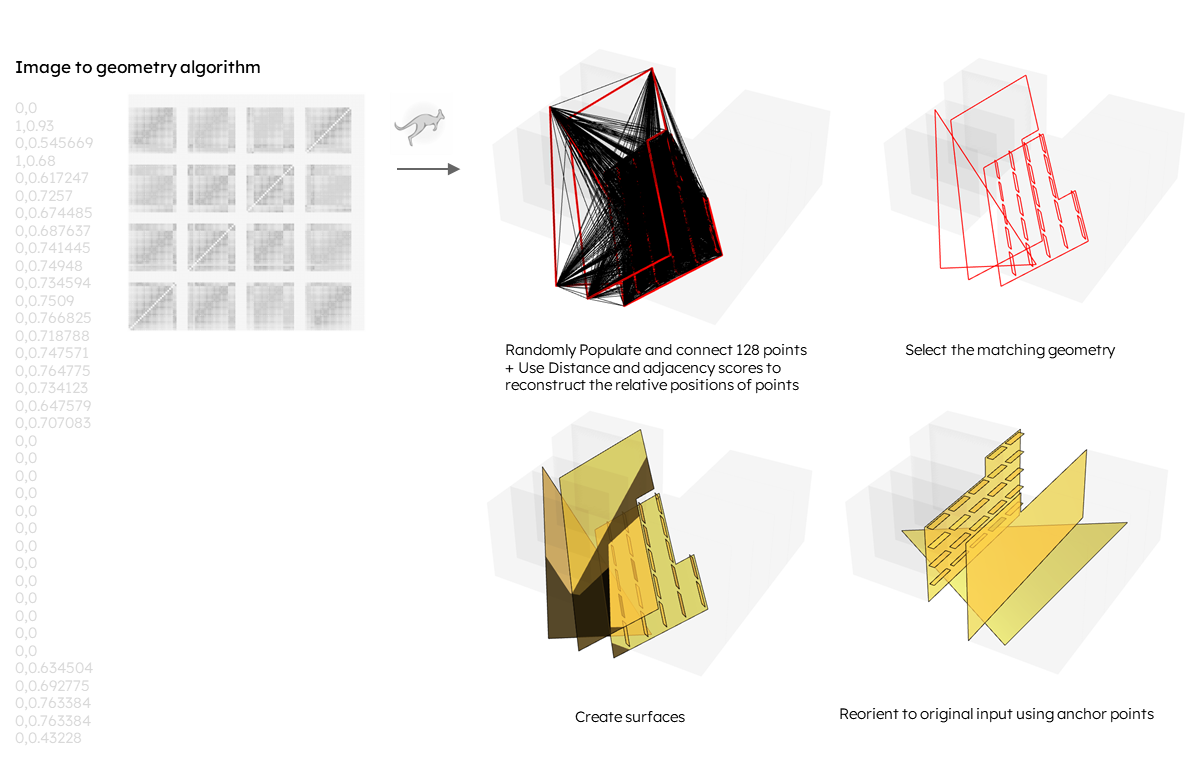

The last step is to define the reconstruction algorithm: we feed the .txt files to the Kangaroo plugin to reconstruct the points and their relations from the stored values, then reorient the geometry using the anchor triangle.
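Reconstruction recovers point positions from the stored distances. The sketch below is a simple projection-based relaxation in the spirit of Kangaroo's length goals, written in plain Python: each edge nudges its two endpoints toward the stored length, and every point moves by the average of the nudges it receives. It is not Kangaroo's solver, and the `(i, j, target_length)` edge format and the name `relax` are assumptions.

```python
import math


def relax(points, edges, iterations=5000, tol=1e-9):
    """Move points until each edge (i, j, target) has length close to target.

    Each iteration, every edge proposes moving both endpoints by half of its
    length error along the edge; each point then applies the average of the
    proposals it received.
    """
    pts = [list(p) for p in points]
    for _ in range(iterations):
        moves = [[0.0, 0.0, 0.0] for _ in pts]
        counts = [0] * len(pts)
        worst = 0.0
        for i, j, target in edges:
            d = [pts[j][k] - pts[i][k] for k in range(3)]
            length = math.sqrt(sum(c * c for c in d))
            err = length - target
            worst = max(worst, abs(err))
            if length == 0.0:
                continue  # direction undefined; skip this edge this round
            s = 0.5 * err / length
            for k in range(3):
                moves[i][k] += s * d[k]  # edge too long: i moves toward j
                moves[j][k] -= s * d[k]  # ...and j moves toward i
            counts[i] += 1
            counts[j] += 1
        if worst < tol:
            break  # every stored distance is satisfied
        for p, m, c in zip(pts, moves, counts):
            if c:
                for k in range(3):
                    p[k] += m[k] / c
    return [tuple(p) for p in pts]


if __name__ == "__main__":
    truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
    edges = [(i, j, math.dist(truth[i], truth[j])) for i in range(4) for j in range(i + 1, 4)]
    start = [(-0.1, 0.0, 0.0), (1.0, 0.05, -0.08), (0.1, 1.1, 0.0), (-0.1, 0.15, 0.92)]
    out = relax(start, edges)
    print(max(abs(math.dist(out[i], out[j]) - t) for i, j, t in edges))
```

Because the solver tries to satisfy every stored distance, noisy or inconsistent distances turn directly into position errors. This is one way to see why small prediction errors matter so much for sparse geometry.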

Model training

Using 3,000 dataset samples, the model was trained for about 1.5 million steps, which took around 48 hours of continuous training. Early in training, the discriminator loss fell very low and then rose again before converging, while the generator loss sloped downward for most of the training process.
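The reported figures imply a rough training budget. A back-of-the-envelope sketch, assuming a batch size of 1 (common for pix2pix; the batch size actually used is not stated). The helper name `training_stats` is hypothetical.

```python
def training_stats(samples: int, steps: int, hours: float, batch_size: int = 1) -> tuple[float, float]:
    """Return (epochs, steps per second) for a training run.

    Assumption: each step processes batch_size samples.
    """
    epochs = steps * batch_size / samples
    steps_per_second = steps / (hours * 3600)
    return epochs, steps_per_second


epochs, rate = training_stats(samples=3_000, steps=1_500_000, hours=48)
print(f"~{epochs:.0f} epochs, ~{rate:.1f} steps/s")  # ~500 epochs, ~8.7 steps/s
```

With a larger batch, each step covers more samples, so the same number of steps corresponds to proportionally more epochs.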

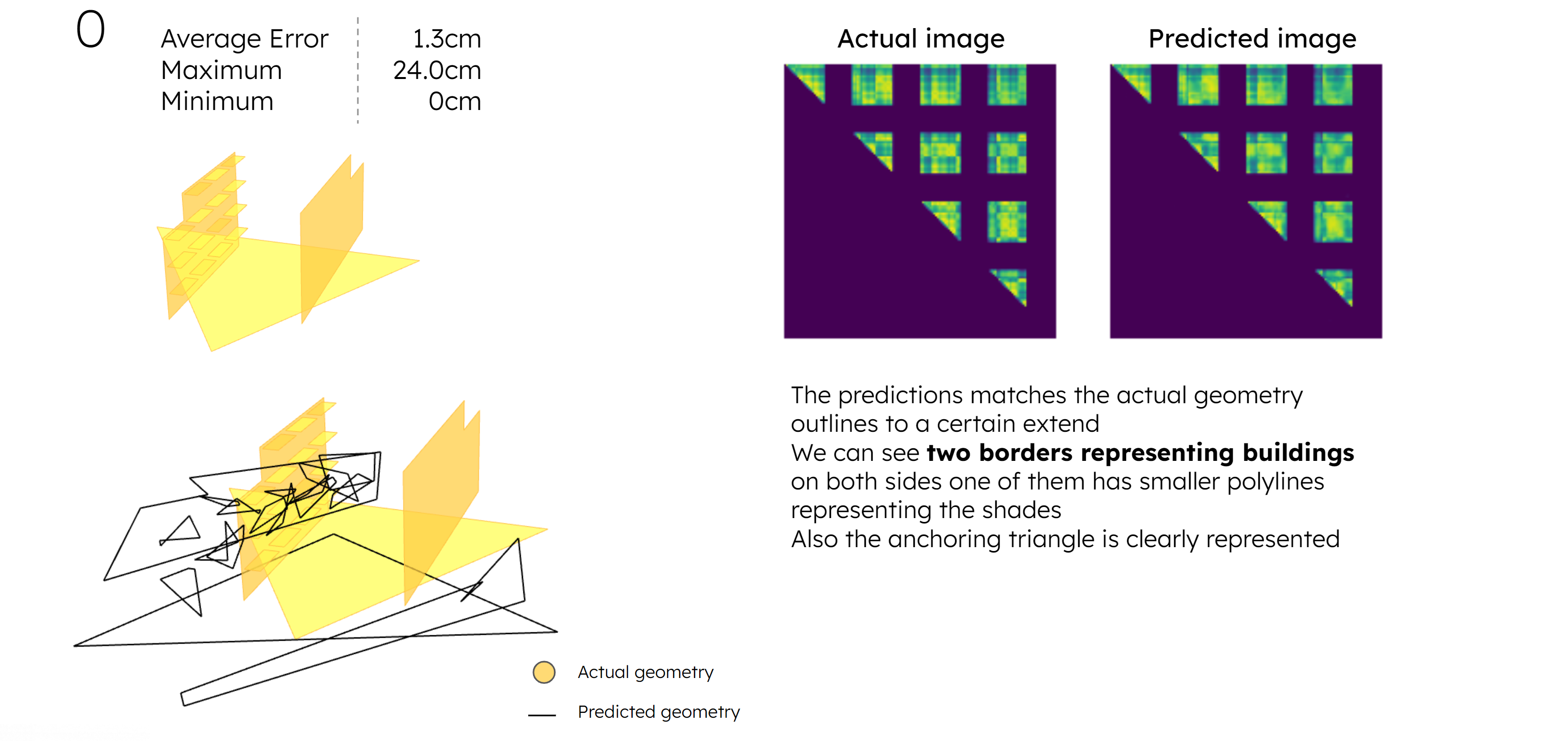

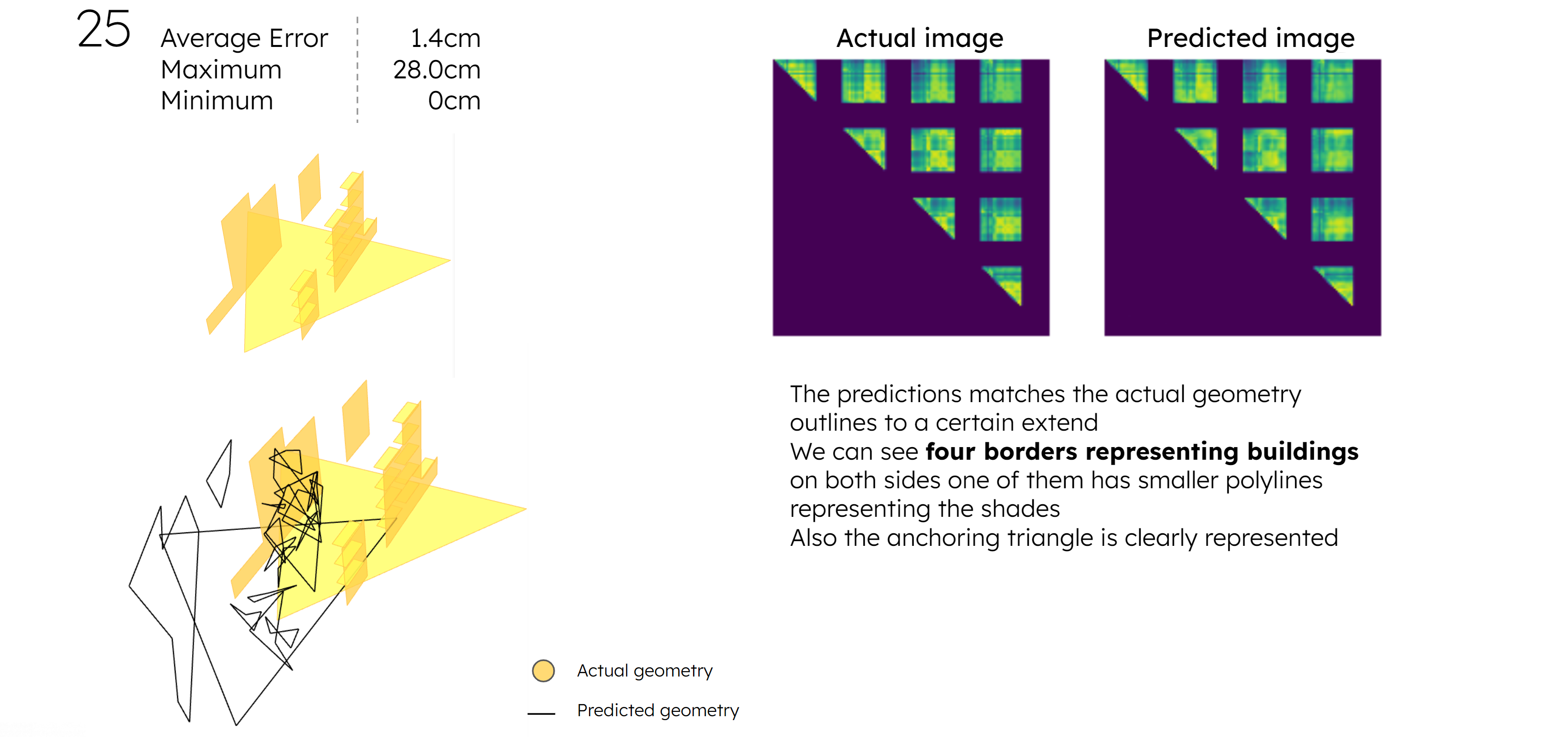

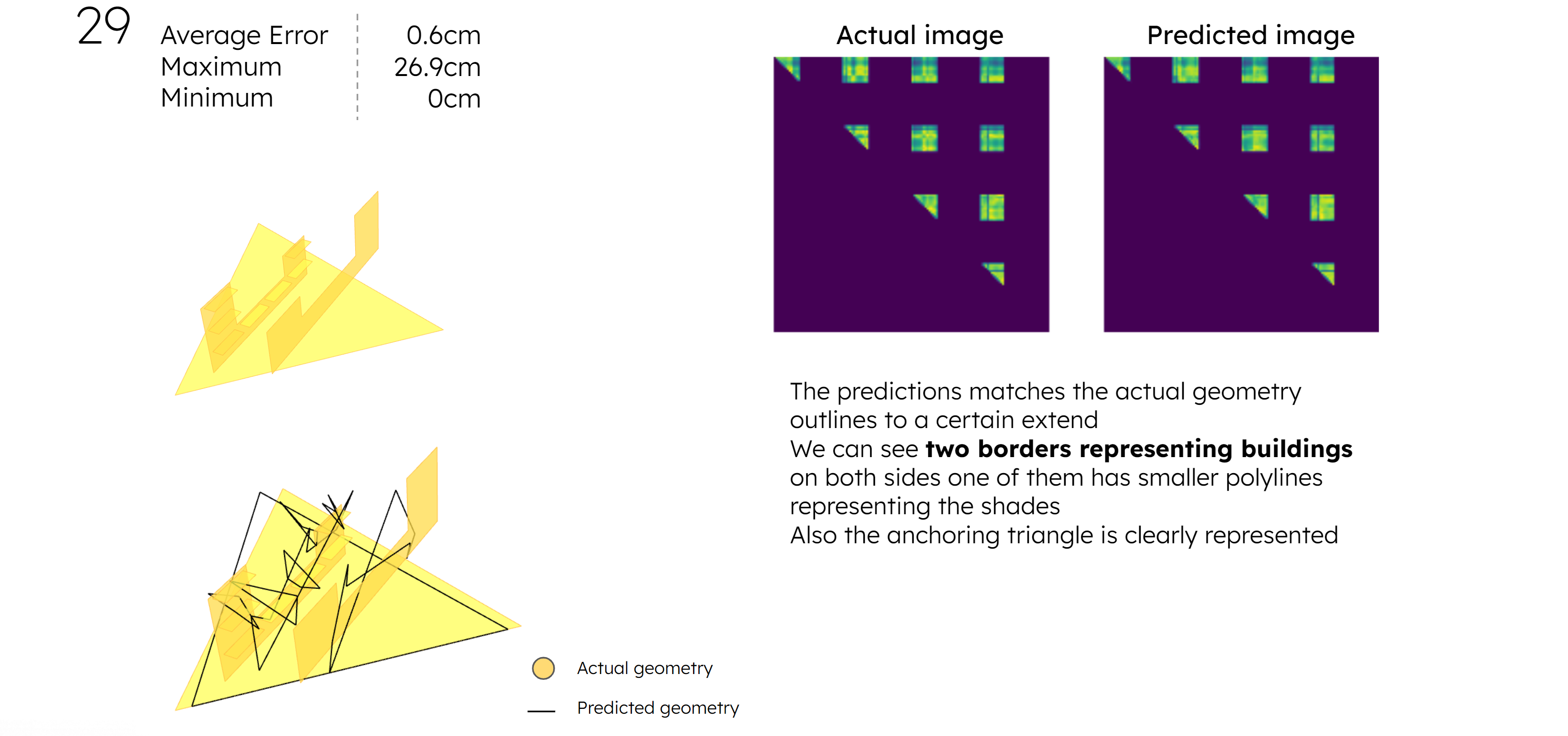

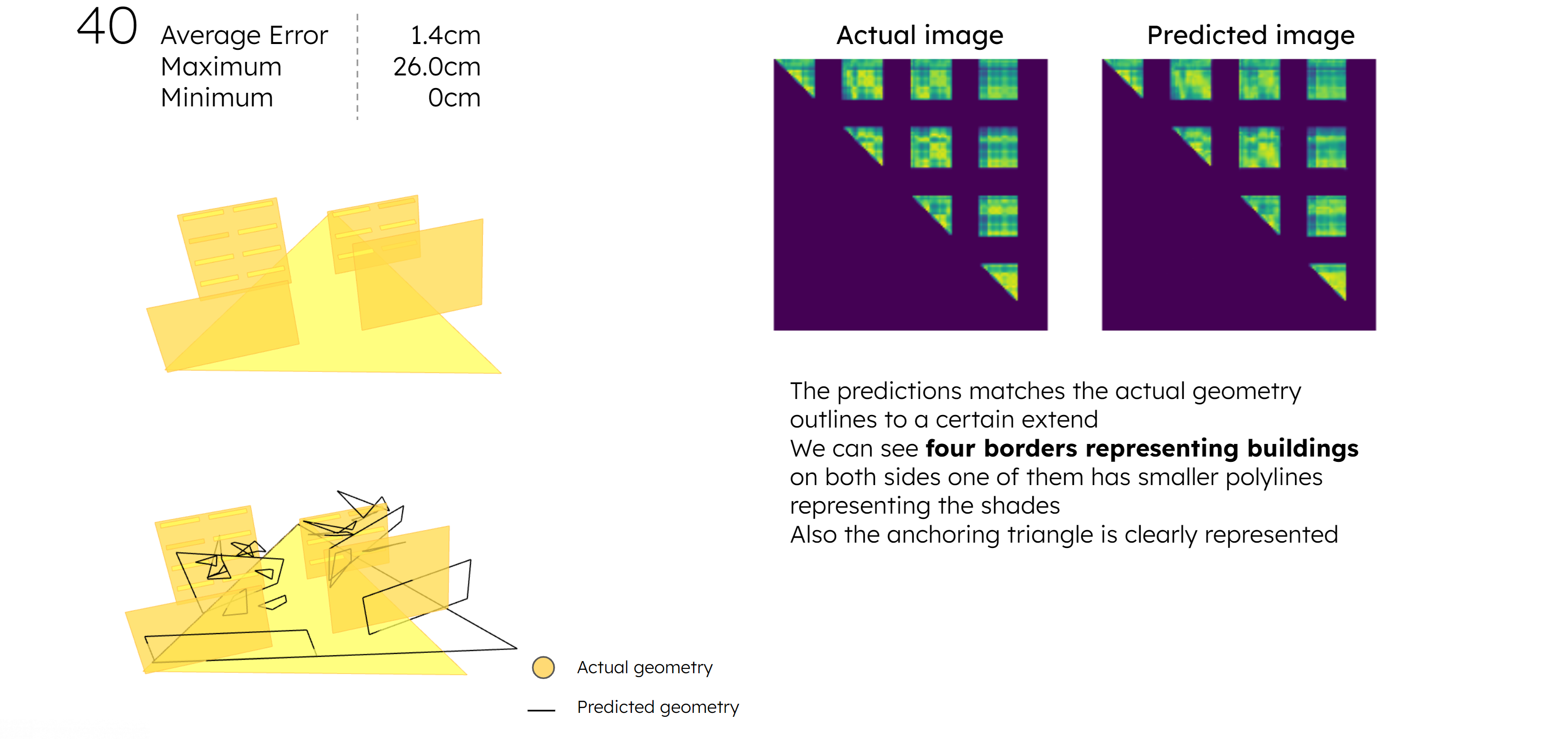

MODEL VERIFICATION AND RESULTS

CONCLUSION

The pix2pix model performed well at predicting output images from input images. However, the predicted distance maps have a mean error of about 2 cm. This error is tolerable in images and dense geometries, but it has a large impact on sparse geometry, especially when reconstructing geometry from images.

FUTURE WORK

The error margin encountered in this workflow might be reduced through more training; however, this model was already trained for about 1.5 million steps and is still not exact. Another approach would be to modify how the geometry is reconstructed from images so that it tolerates this error, since the Kangaroo plugin used in this method is very sensitive to minor variations.