WHAT_IS_G.R.A.S.S.?

G.R.A.S.S. is a hands-free design installation that lets anyone compose an urban park layout using only mid-air hand gestures and voice commands.

The goal is to make spatial design accessible to non-specialists: if you can point and grab, you can design a park. No CAD skills required.

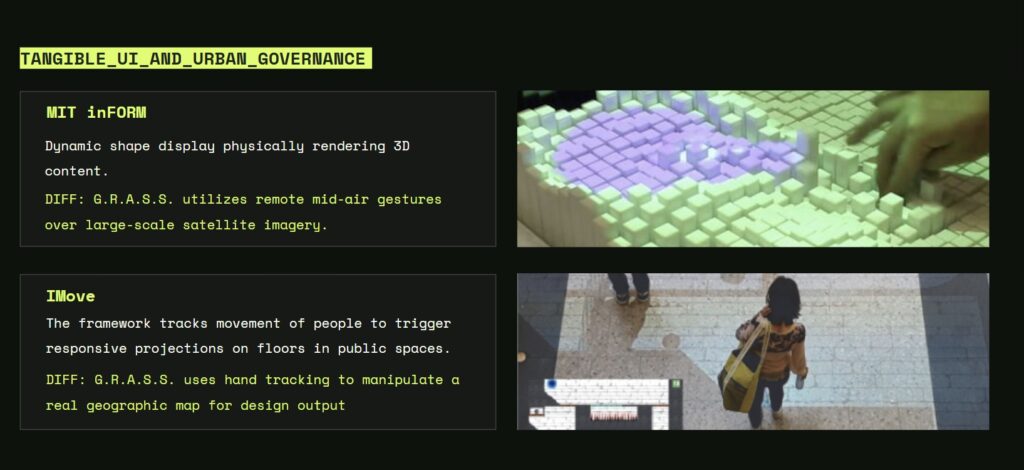

Related projects include MIT inFORM, which physically renders 3D shapes on a dynamic shape display, and IMove, which tracks people’s movement to create responsive projections in public spaces.

By contrast, our system uses mid-air hand gestures and hand tracking to interact with large-scale satellite maps, so users manipulate real geographic data directly to produce urban design layouts.

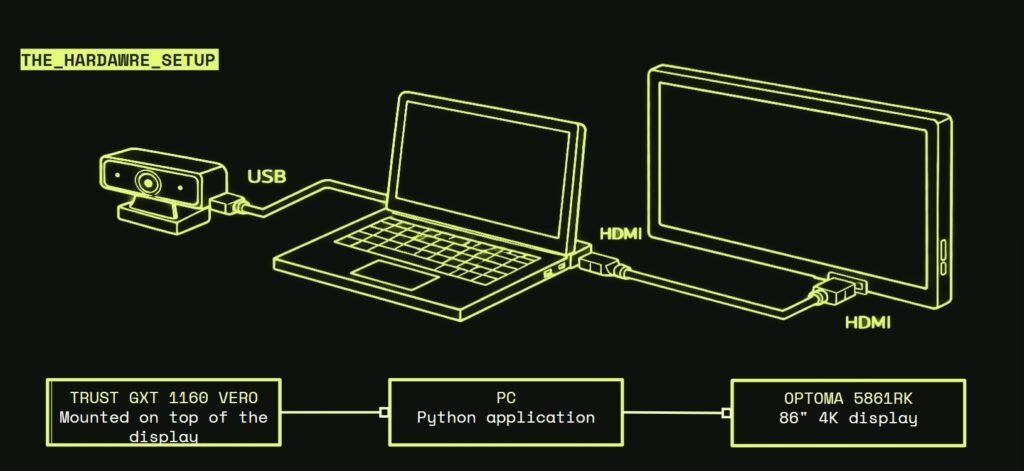

A webcam is mounted on top of the display and connected to the PC through USB to capture the user’s hand gestures. The Python application runs on the PC and processes the input in real time. The output is sent through HDMI to an 86-inch 4K display, so the user interacts with the large screen while standing directly in front of the installation.

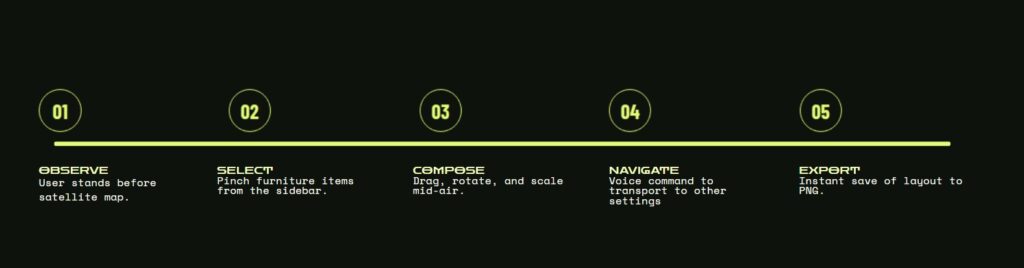

This diagram shows the workflow of the interaction process.

First, the user observes the satellite map. Then the user selects furniture items from the sidebar using a pinch gesture. After that, the user composes the layout by dragging, rotating, and scaling the elements in mid-air. Next, the user can navigate to other locations using voice commands. Finally, the layout can be exported instantly as a PNG file.

Narrative

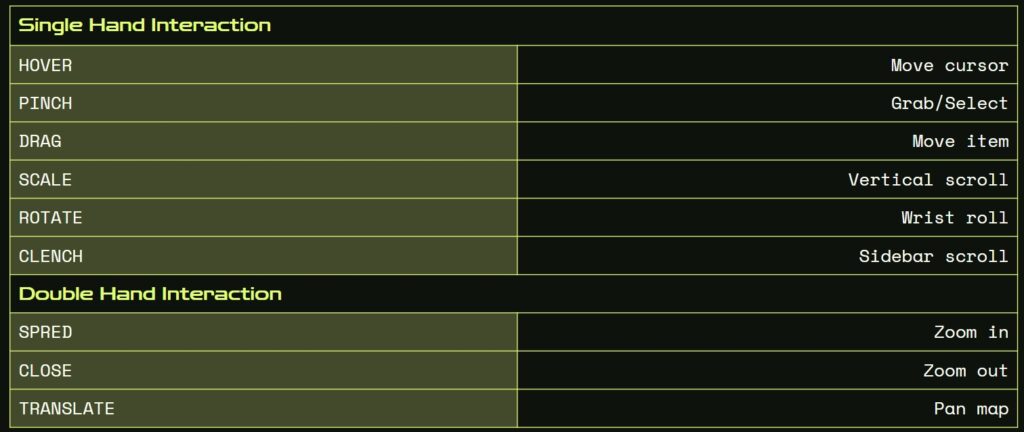

This diagram shows the gesture interaction system used in the project.

With single-hand interaction, the user can move the cursor by hovering, select objects with a pinch gesture, drag items, scroll vertically by scaling, rotate using wrist motion, and scroll the sidebar by clenching the hand.

With double-hand interaction, spreading the hands zooms in, closing the hands zooms out, and translating both hands allows the user to pan the map.
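As a rough illustration of how such gestures can be derived from hand landmarks, the sketch below detects a pinch and computes a two-hand zoom factor and pan offset. It assumes landmarks arrive as 21 normalized `(x, y)` pairs per hand in the MediaPipe Hands ordering (index 4 is the thumb tip, index 8 is the index fingertip). The threshold value and all function names are hypothetical and are not taken from the project.

```python
import math

THUMB_TIP = 4    # MediaPipe Hands landmark index for the thumb tip
INDEX_TIP = 8    # MediaPipe Hands landmark index for the index fingertip
PINCH_THRESHOLD = 0.05  # hypothetical value, in normalized image units


def distance(a, b):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def is_pinching(landmarks, threshold=PINCH_THRESHOLD):
    """Return True when thumb tip and index fingertip are close together."""
    return distance(landmarks[THUMB_TIP], landmarks[INDEX_TIP]) < threshold


def zoom_factor(prev_left, prev_right, cur_left, cur_right):
    """Ratio of current to previous hand separation.

    A value above 1 means the hands spread apart (zoom in),
    a value below 1 means they moved together (zoom out).
    """
    prev = distance(prev_left, prev_right)
    if prev == 0:
        return 1.0  # avoid division by zero; treat as no change
    return distance(cur_left, cur_right) / prev


def pan_offset(prev_left, prev_right, cur_left, cur_right):
    """Translation of the midpoint between both hands since the last frame."""
    prev_mid = ((prev_left[0] + prev_right[0]) / 2,
                (prev_left[1] + prev_right[1]) / 2)
    cur_mid = ((cur_left[0] + cur_right[0]) / 2,
               (cur_left[1] + cur_right[1]) / 2)
    return (cur_mid[0] - prev_mid[0], cur_mid[1] - prev_mid[1])
```

In a real installation the raw values would likely need smoothing over several frames, since per-frame landmark jitter can otherwise trigger spurious pinches.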

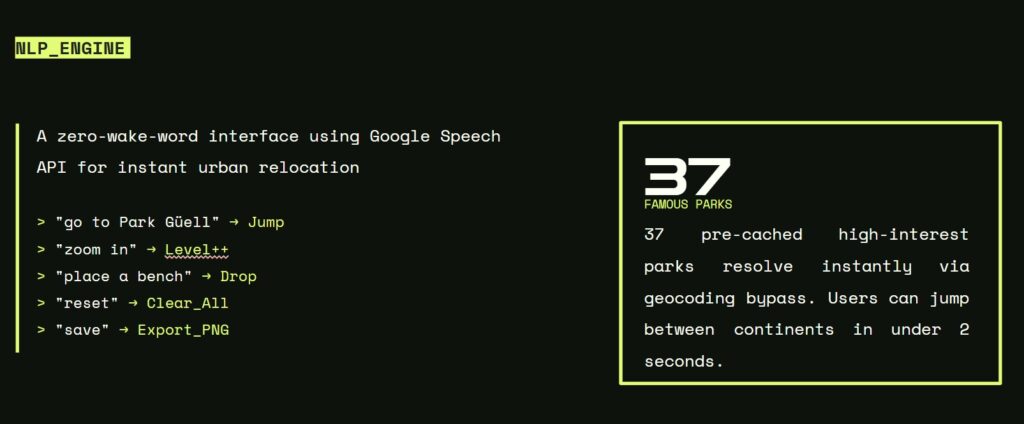

This diagram shows the NLP engine used in the system.

The interface uses the Google Speech API to allow voice commands without a wake word, so users can control the environment instantly. Commands such as moving to a location, zooming, placing objects, resetting, and exporting can be recognized and executed in real time.
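To make the command-recognition step concrete, here is a minimal sketch of how a transcript string (as returned by a speech recognizer) could be mapped to an action. The project's actual command grammar is not documented here, so the phrases, action names, and the `parse_command` function are all assumptions for illustration.

```python
import re

# Hypothetical command grammar: (pattern, action name).
COMMAND_PATTERNS = [
    (re.compile(r"^(?:go to|move to|fly to)\s+(?P<arg>.+)$"), "goto"),
    (re.compile(r"^zoom\s+(?P<arg>in|out)$"), "zoom"),
    (re.compile(r"^(?:place|add)\s+(?:an?\s+)?(?P<arg>.+)$"), "place"),
    (re.compile(r"^reset$"), "reset"),
    (re.compile(r"^(?:export|save)$"), "export"),
]


def parse_command(transcript):
    """Map a recognized phrase to (action, argument), or None if unknown."""
    # Normalize: lowercase, strip punctuation, collapse whitespace.
    text = re.sub(r"[^\w\s]", "", transcript.lower())
    text = re.sub(r"\s+", " ", text).strip()
    for pattern, action in COMMAND_PATTERNS:
        match = pattern.match(text)
        if match:
            # Commands without an argument (reset, export) yield None here.
            return action, match.groupdict().get("arg")
    return None
```

Because there is no wake word, every recognized phrase goes through the parser; returning `None` for anything outside the grammar is one simple way to ignore background speech.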

In addition, the system includes a pre-cached database of 37 famous parks, allowing instant relocation without full geocoding, so users can jump between locations in less than two seconds.
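The cache-first lookup described above might look roughly like the following sketch. The two entries and their approximate coordinates are placeholders standing in for the 37-park database, and `resolve_location` and its `geocode` callback are hypothetical names.

```python
# Placeholder entries with approximate coordinates; the real database
# of 37 parks is not reproduced here.
PARK_CACHE = {
    "central park": (40.7829, -73.9654),
    "hyde park": (51.5073, -0.1657),
}


def resolve_location(name, geocode=None):
    """Return ((lat, lon), source) for a spoken place name.

    Cached parks are answered immediately. Anything else falls back to
    the optional `geocode` callable, which stands in for a slower
    network geocoding request.
    """
    key = " ".join(name.lower().split())  # normalize case and spacing
    if key in PARK_CACHE:
        return PARK_CACHE[key], "cache"
    if geocode is None:
        return None, "unresolved"
    coords = geocode(name)
    if coords is not None:
        PARK_CACHE[key] = coords  # remember the result for next time
    return coords, "geocoder"
```

Skipping the network round trip for known parks is what makes the sub-two-second relocation plausible; only unfamiliar names pay the geocoding cost.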

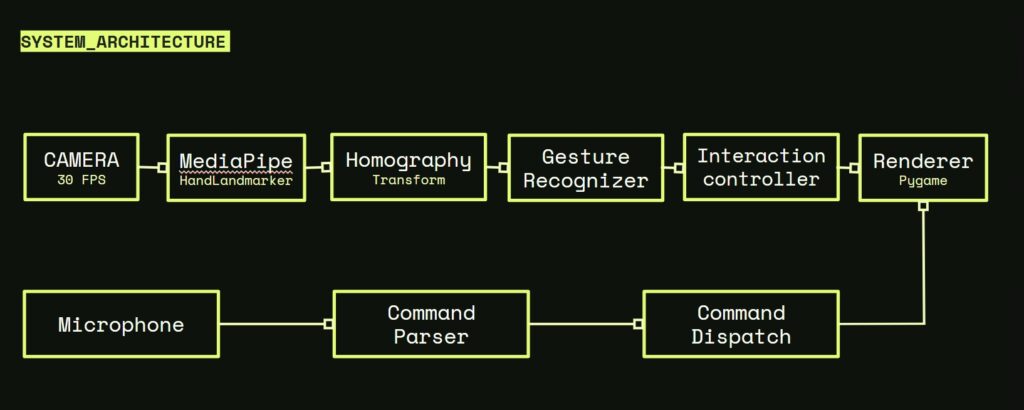

This diagram shows the system architecture of the project.

The camera captures video at 30 FPS and sends the frames to MediaPipe for hand landmark detection. A homography transform then maps camera image coordinates into the working space, and the gesture recognizer identifies the user’s hand movements. After that, the interaction controller processes the input and sends it to the renderer, which visualizes the result using Pygame.
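The homography step can be sketched in plain Python as follows. This is an illustrative version, assuming four calibration points in normalized camera coordinates are matched to the corners of a 3840×2160 screen; the function names are hypothetical, and the project may well use a library routine instead.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def find_homography(src, dst):
    """Compute the 3x3 homography mapping four src points onto four dst points.

    The bottom-right entry is fixed to 1, leaving eight unknowns that are
    determined by two linear equations per point pair.
    """
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(h, point):
    """Map a single (x, y) point through the homography h."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return u, v


# Hypothetical calibration: region of the camera frame -> 4K screen corners.
camera_corners = [(0.1, 0.2), (0.9, 0.15), (0.95, 0.85), (0.05, 0.9)]
screen_corners = [(0, 0), (3840, 0), (3840, 2160), (0, 2160)]
H = find_homography(camera_corners, screen_corners)
```

With `H` computed once at calibration time, each detected fingertip position only needs one `apply_homography` call per frame. In an OpenCV-based pipeline, `cv2.getPerspectiveTransform` computes the same kind of matrix from four point pairs.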

At the same time, the microphone receives voice input, which is processed by the command parser and sent through the command dispatch, allowing voice commands to control the system together with gesture interaction.
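One common way to let a voice pipeline run alongside a per-frame gesture loop is a thread-safe queue: the speech thread pushes recognized phrases, and the main loop drains them without blocking. The sketch below illustrates that pattern only. The worker is a stand-in that replays fixed phrases, and `voice_worker`, `drain_commands`, and the handler table are hypothetical names rather than the project's actual components.

```python
import queue
import threading


def voice_worker(transcripts, out_queue):
    """Stand-in for the speech thread: push each recognized phrase."""
    for text in transcripts:
        out_queue.put(text)


def drain_commands(in_queue, handlers):
    """Run all pending voice commands; intended to be called once per frame.

    Uses get_nowait() so the render loop never stalls waiting for speech.
    Phrases without a registered handler are silently dropped.
    """
    executed = []
    while True:
        try:
            text = in_queue.get_nowait()
        except queue.Empty:
            break
        handler = handlers.get(text)
        if handler is not None:
            handler()
            executed.append(text)
    return executed


# Example wiring with a toy application state.
state = {"zoom": 1}
handlers = {
    "zoom in": lambda: state.update(zoom=state["zoom"] * 2),
    "reset": lambda: state.update(zoom=1),
}
commands = queue.Queue()
worker = threading.Thread(
    target=voice_worker, args=(["zoom in", "zoom in", "unknown"], commands)
)
worker.start()
worker.join()
done = drain_commands(commands, handlers)
```

Keeping all state changes on the main thread, with the queue as the only shared object, avoids having to lock the map or layout data while rendering.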

Reflection & Growth

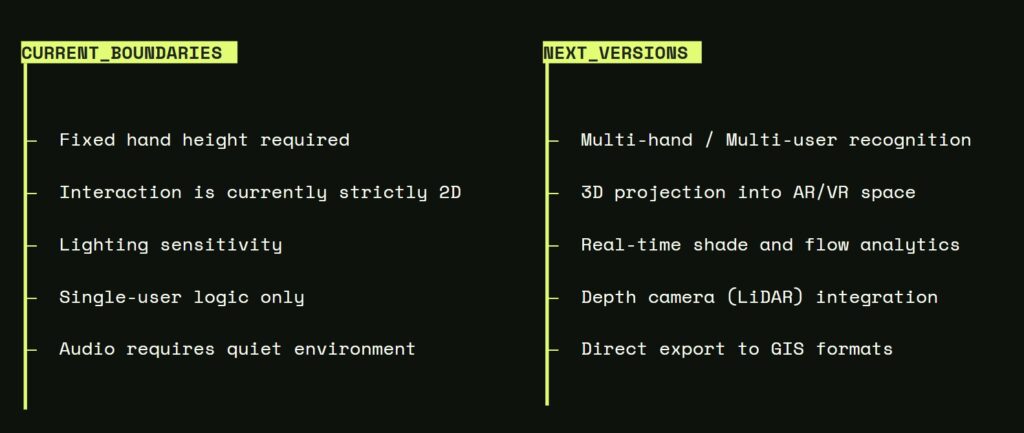

This diagram shows the current limitations of the system and possible directions for future development.

At the moment, the interaction requires a fixed hand height, works only in a 2D environment, is sensitive to lighting conditions, and supports only a single user. In addition, the audio input requires a quiet environment to function properly.

In future versions, the system could support multi-user recognition, 3D projection in AR/VR space, real-time environmental analysis, LiDAR depth camera integration, and direct export to GIS formats.